Twitter says it flagged 300,000 'misleading' election tweets

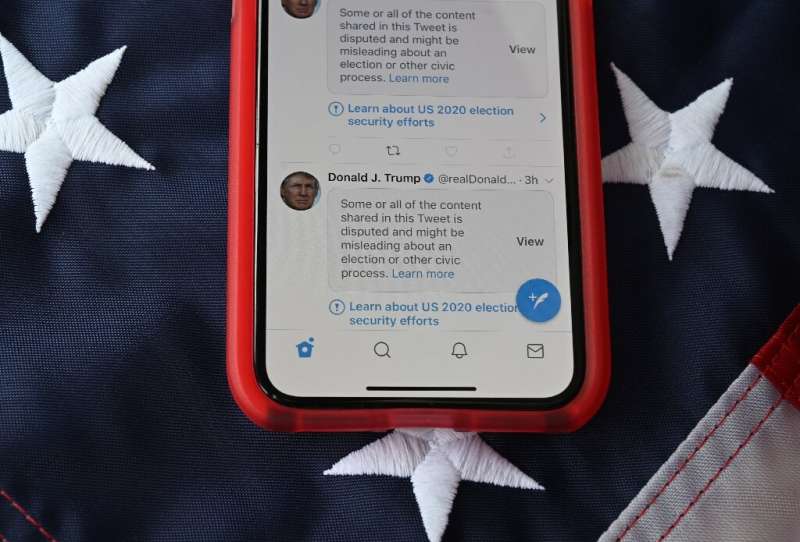

Twitter labeled 300,000 tweets related to the US presidential election as "potentially misleading" in the two weeks surrounding the vote, making up 0.2 percent of election-related posts, the company said Thursday.

The social network said the labels were issued between October 27 and November 11, one week before and after the US presidential election on November 3—which Democrat Joe Biden won over incumbent Donald Trump.

Of the 300,000 flagged tweets, 456 were covered by a warning message and had engagement features limited: users could not like, retweet or reply to the posts, said Vijaya Gadde, Twitter's head of legal, policy and trust and safety, in a blog post.

She estimated that 74 percent of people who saw the problematic tweets did so after they had been labeled as misleading or flagged with a warning message, and that sharing of the posts declined by about 29 percent as a result.

During the election period, Twitter posted messages on American users' pages that "reminded people that election results were likely to be delayed, and that voting by mail is safe and legitimate," Gadde added. The messages were seen 389 million times.

Nearly half of Trump's tweets were flagged by the platform in the days following the election, as the president claimed, without evidence, that he had won and that the process had been tainted by massive fraud.

© 2020 AFP