July 7, 2021 feature

PHYSFRAME: a system to type check physical frames of reference for robotic systems

To move efficiently and safely within different environments, robotic systems typically monitor both their own movements and their surroundings as they try to navigate safely and avoid nearby obstacles. The measurements they gather generally make sense with respect to a given frame of reference, also known as a coordinate system.

For instance, in a three-dimensional (3D) coordinate system, a robot's location is inconsequential without knowledge of the frame to which this location refers to. As robots generally have modular designs, their different parts typically have different frames (e.g., camera frame, body frame, etc.) and measurements relating to one frame need to be translated back-and forth from one frame to another before they can be used to carry out computations.

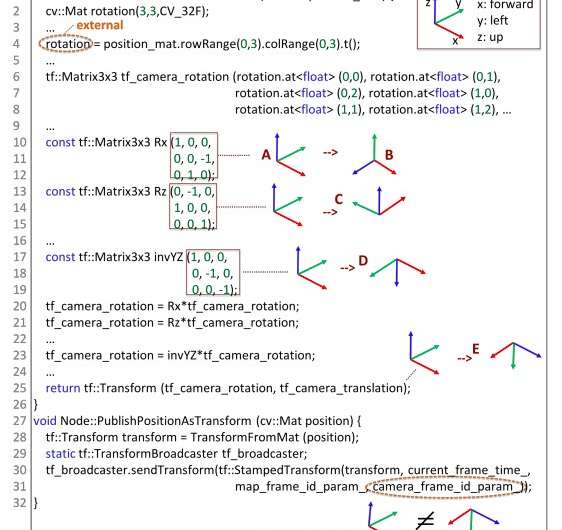

Most robotic systems are based on general purpose languages such as C/C++, which do not intrinsically support the complexity associated with the use of multiple frames. Even if some software tools, such as Robot Operating System (ROS), provide strategies to simplify translations between frames, it is ultimately up to developers to determine the reference frames of individual program variables, identify instances where translations are required and implement translations.

However, manually translating measurements across different frames can be highly challenging and these translations are often prone to errors. Some developers have thus been trying to devise methods to simplify this translation process and minimize translation-related errors.

Researchers at Purdue University and University of Virginia recently developed PHYSFRAME, a system that can automatically detect a variable's frame type and identify possible frame-related inconsistencies in existing ROS-based code. Their system, introduced in a paper pre-published on arXiv, could help to improve the effectiveness and reliability of frame translation practices in robotics.

"Since any state variable can be associated with some frame, reference frames can be naturally modeled as variable types," Sayali Kate, Michael Chinn, Hongjun Choi, Xiangyu Zhang and Sebastian Elbaum wrote in their paper. "We hence developed a novel type of system that can automatically infer variables' frame types and in turn detect any type inconsistencies and violations of frame conventions."

PHYSFRAME, the system developed by Kate and her colleagues, is a fully automated type-inference and checking technique that can detect frame inconsistencies and convention violations in programs based on ROS. The researchers evaluated their system on 180 ROS-based projects published on GitHub.

"The evaluation shows that our system can detect 190 inconsistencies with 154 true positives (81.05 percent)," the researchers wrote in their paper. "We reported 52 to developers and received 18 responses so far, with 15 fixed/acknowledged. Our technique also found 45 violations of common practices."

Using the system they developed, Kate and her colleagues already identified several inconsistencies and violations in existing ROS-based projects. In the future, PHYSFRAME could thus prove to be a very valuable tool for checking existing robotics code and identifying errors related to the translation of measurements across different frames.

More information: PHYSFRAME: Type checking physical frames of reference for robotic systems. arXiv:2106.11266 [cs.RO]. arxiv.org/abs/2106.11266

© 2021 Science X Network