August 23, 2021 feature

A vision-based robotic system for 3D ultrasound imaging

Ultrasound imaging techniques have proved to be highly valuable tools for diagnosing a variety of health conditions, including peripheral artery disease (PAD). PAD, one of the most common diseases among the elderly, entails the blocking or narrowing of peripheral blood vessels, which limits the supply of blood to specific areas of the body.

Ultrasound imaging methods are among the most popular means of diagnosing PAD, due to their many advantageous characteristics. In fact, unlike other imaging methods, such as computed tomography angiography and magnetic resonance angiography, ultrasound imaging is non-invasive, low-cost and radiation-free.

Most existing ultrasound imaging techniques are designed to capture two-dimensional images in real time. While this can be helpful in some cases, their inability to collect three-dimensional information reduces the reliability of the data they gather and makes the results more sensitive to variations in how individual physicians use a given technique.

Researchers at Technical University of Munich, Zhejiang University and Johns Hopkins University have recently developed a new robotic system that can capture good-quality 3D ultrasound images. This robotic system, presented in a paper pre-published on arXiv, could allow physicians and healthcare providers to gather more reliable anatomical data using ultrasound technology.

The vast majority of 3D ultrasound imaging systems developed so far do not allow users to capture the entire artery tree of an individual human limb, as the object being examined cannot be repositioned while data is being collected. If an object or limb moves during data collection, the quality of the 3D images these systems produce tends to decrease substantially.

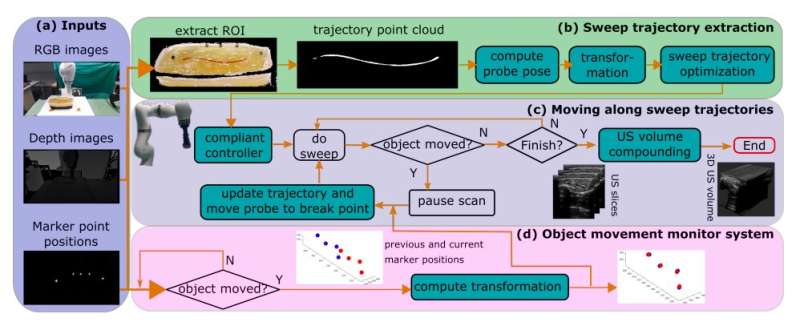

"To address this challenge, we propose a vision-based robotic ultrasound system that can monitor an object's motion and automatically update the sweep trajectory to provide 3D compounded images of the target anatomy seamlessly," Zhongliang Jiang and his colleagues wrote in their paper.

Their robotic system is based on computer vision. It first uses a depth camera to extract the manually planned sweep trajectory for the object that is being examined. This sweep trajectory is then used to estimate the object's normal direction.
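
To make the idea of a "normal direction" concrete, here is a minimal sketch of one common way to estimate a surface normal from depth-camera points: fit a plane to the points around a trajectory waypoint and take the direction of least variance. This is an illustrative assumption, not the method described in the paper. The function name `estimate_normal` and the `camera_origin` parameter are hypothetical.

```python
import numpy as np

def estimate_normal(neighbors, camera_origin=(0.0, 0.0, 0.0)):
    """Fit a plane to nearby 3D surface points and return its unit normal.

    neighbors: (N, 3) array of points (N >= 3) around one trajectory waypoint.
    camera_origin: camera position, used only to pick a consistent sign.
    """
    pts = np.asarray(neighbors, dtype=float)
    centroid = pts.mean(axis=0)
    # The right singular vector with the smallest singular value is the
    # direction of least variance, which is the normal of the best-fit plane.
    _, _, vt = np.linalg.svd(pts - centroid)
    normal = vt[-1]
    # A plane has two opposite normals; choose the one facing the camera.
    if np.dot(normal, np.asarray(camera_origin, dtype=float) - centroid) < 0:
        normal = -normal
    return normal / np.linalg.norm(normal)
```

In a setup like this, a probe would typically be aligned against the returned vector at each waypoint so that it presses perpendicular to the surface.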

"Subsequently, to monitor the movement and further compensate for this motion to accurately follow the trajectory, the position of firmly attached passive markers is tracked in real-time," the researchers explained in their paper. "Finally, a step-wise compounding is performed."

In addition to an RGB-D camera, the robotic system created by Jiang and his colleagues consists of a robotic manipulator, a CPLA12875 linear probe and an ultrasound interface. The manipulator's movements are controlled by software the team built on the Robot Operating System (ROS), a framework for writing robotic software.

The system's software has three main components: a vision-based sweep trajectory extraction technique, an automatic robotic ultrasound sweep and 3D ultrasound compounding method, and a movement-monitoring system. The latter is designed to update the trajectory extracted by the system and correct the 3D compounding.
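
As a rough illustration of how tracked markers could drive a trajectory update, the sketch below estimates the rigid motion between two sets of marker positions with the Kabsch algorithm and applies it to the planned waypoints. This is a standard technique swapped in for illustration. The paper's own procedure may differ, and the names `rigid_transform` and `update_trajectory` are hypothetical.

```python
import numpy as np

def rigid_transform(markers_before, markers_after):
    """Estimate rotation R and translation t with after ~= R @ before + t.

    Both inputs are (N, 3) arrays of corresponding marker positions,
    with N >= 3 and the markers not all on one line (Kabsch algorithm).
    """
    P = np.asarray(markers_before, dtype=float)
    Q = np.asarray(markers_after, dtype=float)
    cp, cq = P.mean(axis=0), Q.mean(axis=0)
    # Cross-covariance of the centered marker sets.
    H = (P - cp).T @ (Q - cq)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that R is a proper rotation.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = cq - R @ cp
    return R, t

def update_trajectory(waypoints, R, t):
    """Move planned (M, 3) waypoints along with the object."""
    return np.asarray(waypoints, dtype=float) @ R.T + t
```

The same transform could in principle be used to bring images acquired before and after the movement into one common frame, which is the role the article attributes to correcting the 3D compounding.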

To evaluate the performance of their system, Jiang and his colleagues carried out a series of experiments using a custom-designed, gel-based vascular phantom. Their findings were highly promising, as the system was able to capture complete, good-quality 3D images of target blood vessels, even when the object it was examining was moving.

"The preliminary validation on a gel phantom demonstrates that the proposed approach can provide a promising 3D geometry, even when the scanned object is moved," the researchers wrote in their paper. "Although the vascular application was used to demonstrate the proposed method, the method can also be used for other applications, such as ultrasound bone visualization."

More information: Motion-aware robotic 3D ultrasound. arXiv:2107.05998 [cs.RO]. arxiv.org/abs/2107.05998

© 2021 Science X Network