December 6, 2021 feature

SEIHAI: The hierarchical AI that won the NeurIPS-2020 MineRL competition

In recent years, computational tools based on reinforcement learning have achieved remarkable results in numerous tasks, including image classification and robotic object manipulation. Meanwhile, computer scientists have also been training reinforcement learning models to play specific games, including video games.

To challenge research teams working on reinforcement learning techniques, the Neural Information Processing Systems (NeurIPS) annual conference introduced the MineRL competition, a contest in which different algorithms are tested on the same task in Minecraft, the renowned computer game developed by Mojang Studios. More specifically, contestants are asked to create algorithms that must obtain a diamond in the Minecraft game while working only from raw pixels.

The algorithms can only be trained for four days and on 8,000,000 samples created by the MineRL simulator, using a single GPU machine. In addition to these simulator samples, participants are also provided with a large collection of human demonstrations (i.e., video frames in which the task is solved by human players).
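To get a feel for how tight these limits are, the sample cap can be sketched as a simple counter. This is only an illustration: `SampleBudget` and its methods are hypothetical names, not part of the MineRL tooling, and the competition's actual enforcement mechanism is not described here.

```python
class SampleBudget:
    """Hypothetical helper that counts simulator samples against a fixed cap."""

    def __init__(self, limit: int = 8_000_000) -> None:
        self.limit = limit  # cap quoted in the competition rules
        self.used = 0       # samples drawn from the simulator so far

    def consume(self, n: int = 1) -> bool:
        """Record n samples; return False (recording nothing) if the cap would be exceeded."""
        if self.used + n > self.limit:
            return False
        self.used += n
        return True

    @property
    def remaining(self) -> int:
        return self.limit - self.used


budget = SampleBudget()

# Average rate if the samples were spread evenly over the four-day window.
SECONDS_IN_FOUR_DAYS = 4 * 24 * 60 * 60
average_rate = budget.limit / SECONDS_IN_FOUR_DAYS  # samples per second
```

Spread evenly over four days, the cap works out to roughly 23 simulator samples per second, which helps explain why the human demonstrations matter so much.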

A team of researchers at Huawei Noah's Ark Lab, Tianjin University and Tsinghua University won the NeurIPS-2020 MineRL competition. Using a sample-efficient hierarchical artificial intelligence (AI) tool called SEIHAI, the researchers were able to outperform all other algorithms participating in the contest.

"We present SEIHAI, a sample-efficient hierarchical AI that fully takes advantage of the human demonstrations and the task structure," Hangyu Mao and his colleagues wrote in a paper outlining their AI, which was pre-published on arXiv. "Specifically, we split the task into several sequentially dependent subtasks and train a suitable agent for each subtask using reinforcement learning and imitation learning."

To obtain a diamond in Minecraft, players need to follow a series of steps. Sequentially, they need to chop a tree to collect logs, then use the logs to craft a wooden pickaxe, which they then use to mine cobblestone. The cobblestone is used to craft a stone pickaxe and a furnace, which in turn allow the player to mine iron ore and smelt it into iron for an iron pickaxe, the tool needed to mine diamond. Diamond is rare in the game, which further complicates the task for MineRL participants.

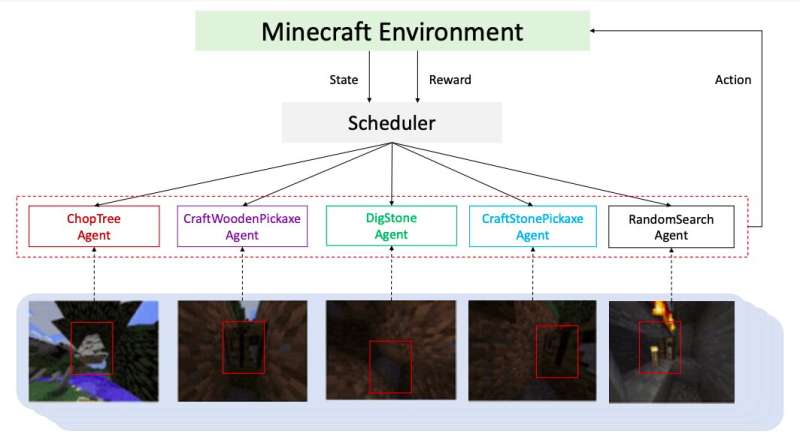

To tackle the task most effectively, Mao and his colleagues divided it into a series of subtasks, each of which required different skills and capabilities. They then trained different agents to tackle each of the subtasks individually, using reinforcement learning or imitation learning, depending on which one best suited the problem they were trying to solve.

To decide which agent should handle each subtask, the researchers used a scheduler, a component that selects an agent based on the characteristics of the subtask that needs to be completed. The hierarchical model created by the researchers significantly outperformed all the other algorithms and models participating in the MineRL 2020 contest.
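The general idea of a scheduler dispatching to per-subtask agents can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the SEIHAI implementation: the subtask names, the inventory-based rule and the placeholder agents are all hypothetical, and the assignment of "imitation" or "rl" labels to particular subtasks is arbitrary.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Inventory = Dict[str, int]  # item name -> count held by the player


@dataclass
class Subtask:
    name: str
    goal_item: str                      # holding this item marks the subtask as done
    agent: Callable[[Inventory], str]   # placeholder policy: inventory -> action label


def make_agent(kind: str) -> Callable[[Inventory], str]:
    """Build a stand-in for a trained agent; it only reports its (hypothetical) kind."""
    def policy(inventory: Inventory) -> str:
        return f"action from {kind} agent"
    return policy


# Hypothetical chain covering only the first steps of the task.
SUBTASKS: List[Subtask] = [
    Subtask("chop_tree", "log", make_agent("imitation")),
    Subtask("craft_wooden_pickaxe", "wooden_pickaxe", make_agent("rl")),
    Subtask("dig_cobblestone", "cobblestone", make_agent("rl")),
]


def schedule(inventory: Inventory, subtasks: List[Subtask] = SUBTASKS) -> Optional[Subtask]:
    """Pick the subtask that follows the furthest milestone already reached.

    Looking for the furthest milestone (rather than the first missing item)
    keeps the scheduler from going back to chopping trees once the logs
    have been used up in crafting.
    """
    next_index = 0
    for i, subtask in enumerate(subtasks):
        if inventory.get(subtask.goal_item, 0) > 0:
            next_index = i + 1
    return subtasks[next_index] if next_index < len(subtasks) else None
```

For example, `schedule({"wooden_pickaxe": 1})` returns the `dig_cobblestone` subtask even though the inventory holds no logs, and the selected subtask's `agent` would then be called to produce the next action.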

"We won first place in the preliminary and final of the NeurIPS-2020 MineRL competition, which demonstrates the efficiency of our hierarchical method, SEIHAI," the researchers wrote in their paper. "We believe that developing methods that properly combine human priors and sample-efficient learning-based techniques is a competitive way to solve complex tasks with limited demonstrations, sparse rewards but an explicit task structure."

More information: Hangyu Mao et al, SEIHAI: A sample-efficient hierarchical AI for the MineRL competition. arXiv:2111.08857v1 [cs.LG], arxiv.org/abs/2111.08857

© 2021 Science X Network