December 14, 2021 feature

A virtual reality simulator to train surgeons for skull-base procedures

People with diseases or conditions that affect the base of the skull, such as otologic abnormalities, cancerous tumors and birth defects, might need to undergo skull base surgery at some point in their lives. To successfully conduct these challenging procedures, surgeons must skillfully operate on and within a person's skull, using drills to reach specific regions.

Researchers at Johns Hopkins University (JHU) have recently developed a new system that could be used to train surgeons to complete skull base surgeries, as well as potentially other complex surgical procedures. This system, presented in a paper published in Computer Methods in Biomechanics and Biomedical Engineering: Imaging & Visualization, is based on the use of a virtual reality (VR) simulator.

"The process of drilling requires surgeons to remove minimal amounts of bone while ensuring that important structures (such as nerves and vessels) housed within the bone are not harmed," Adnan Munawar, one of the researchers who developed the system, told TechXplore. "Therefore, skull base surgeries require high skill, absolute precision, and sub-millimeter accuracy. Achieving these surgical skills requires diligent training to ensure the safety of patients."

Currently, most resident surgeons are trained to complete skull base surgeries and other procedures on cadavers or on live people under the supervision of experienced doctors. However, realistic computer simulations and virtual environments could significantly enhance the training of surgeons, offering a cost-effective, safe and reproducible alternative to traditional training methods.

In addition to allowing surgeons to practice their skills in a safe and realistic setting, simulation tools enable the collection of valuable data that would otherwise be harder to obtain. These data include optimal trajectories for surgical tools, the forces imparted during a procedure, and the positions of cameras or endoscopes.

"This data is beneficial for two purposes," Munawar explained. "Firstly, it could be used to train artificial intelligence (AI) algorithms that can assist surgeons in the operating room and make procedures safer. Secondly, by comparing surgical data from residents in training and expert surgeons, educators could individualize training and make the limited time trainees have for education more efficient."

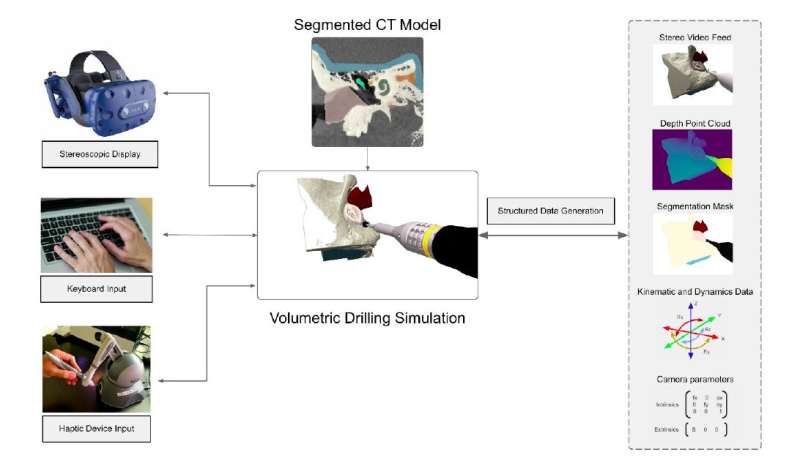

The VR-based system created by the researchers allows resident and experienced surgeons to practice complex surgical procedures within simulated environments that are based on the computed tomography (CT) scans of real patients. In addition, the simulator can be used to record structured data. This data could eventually be used to assess the skills of trainees or to train machine learning algorithms that could assist surgeons during complex procedures.
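Turning a patient CT scan into a drillable virtual skull starts with deciding which voxels count as bone. The article does not say how the JHU team segments its scans, so the sketch below assumes the simplest possible approach: a fixed Hounsfield-unit (HU) threshold. The 300 HU cutoff, the default rescale values and the function names are illustrative assumptions; real pipelines generally rely on more careful segmentation.

```python
from typing import List, Set, Tuple

Voxel = Tuple[int, int, int]  # (z, y, x) index into the CT volume

# Illustrative bone cutoff in Hounsfield units (HU); an assumption, not the team's value.
BONE_THRESHOLD_HU = 300.0


def raw_to_hu(raw: float, slope: float, intercept: float) -> float:
    """Map a stored CT value to HU with a linear rescale, as described by
    DICOM's RescaleSlope and RescaleIntercept attributes."""
    return raw * slope + intercept


def bone_voxels(volume: List[List[List[float]]], slope: float = 1.0,
                intercept: float = -1024.0,
                threshold_hu: float = BONE_THRESHOLD_HU) -> Set[Voxel]:
    """Return the indices of voxels whose HU value is at or above the threshold."""
    occupied: Set[Voxel] = set()
    for z, plane in enumerate(volume):
        for y, row in enumerate(plane):
            for x, raw in enumerate(row):
                if raw_to_hu(raw, slope, intercept) >= threshold_hu:
                    occupied.add((z, y, x))
    return occupied


# A 1 x 2 x 2 toy volume of stored values (HU = raw - 1024 with the defaults).
toy = [[[0, 1500], [1024, 2000]]]
print(sorted(bone_voxels(toy)))  # [(0, 0, 1), (0, 1, 1)]
```

The resulting set of bone voxels is the kind of structure a drilling simulation can then remove voxels from as the virtual burr cuts.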

In addition to Munawar, the multidisciplinary team that developed the system included students Zhaoshuo Li, Nimesh Nagururu, Andy Ding and Punit Kunjam, as well as surgeon Francis Creighton and engineering faculty members Peter Kazanzides, Russell Taylor and Mathias Unberath. So far, the researchers have used their system only to simulate skull base surgeries. Due to its flexibility, however, it could eventually be applied to other surgical interventions and procedures.

"So far, our system provides an immersive simulation environment where the surgeons can interact with a virtual skull which is generated from a patient CT (computed tomography) scan," Munawar said. "A virtual drill that is controlled via a haptic device (or a keyboard) is used to drill through the virtual skull. The interaction between the drill and the skull is used to generate force feedback which is provided via the haptic device for realistic tactility. Finally, for visual realism and depth perception, stereoscopic video is displayed on a VR (Virtual Reality) headset."
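One common way to turn a drill-bone interaction into haptic force feedback is penalty-based force rendering, in which any penetration of the tool into the material produces a spring-like restoring force. The sketch below applies that idea to a spherical burr moving through a set of bone voxels. It is not the team's published method: the spherical tool, the stiffness, the optional force cap and the name `drill_step` are all assumptions made for illustration.

```python
import math
from typing import Optional, Set, Tuple

Voxel = Tuple[int, int, int]      # integer (x, y, z) index; voxel centre sits at that point
Vec3 = Tuple[float, float, float]


def drill_step(bone: Set[Voxel], tip: Vec3, radius: float, stiffness: float,
               max_force: Optional[float] = None, remove: bool = True) -> Vec3:
    """Hypothetical single update of a spherical virtual drill.

    Each bone voxel whose centre lies inside the burr contributes a spring
    force of magnitude stiffness * penetration depth, pointing from the voxel
    toward the tip (pushing the drill out of the bone). If `remove` is True,
    the contacted voxels are deleted from `bone` to mimic drilling.
    """
    fx = fy = fz = 0.0
    contacts = []
    for vx, vy, vz in bone:
        dx, dy, dz = tip[0] - vx, tip[1] - vy, tip[2] - vz
        dist = math.sqrt(dx * dx + dy * dy + dz * dz)
        if dist < radius:
            contacts.append((vx, vy, vz))
            if dist > 1e-9:  # a voxel exactly at the tip has no defined push direction
                scale = stiffness * (radius - dist) / dist
                fx += dx * scale
                fy += dy * scale
                fz += dz * scale
    # Optionally clamp to an assumed device force limit, keeping the direction.
    mag = math.sqrt(fx * fx + fy * fy + fz * fz)
    if max_force is not None and mag > max_force:
        s = max_force / mag
        fx, fy, fz = fx * s, fy * s, fz * s
    if remove:
        bone.difference_update(contacts)
    return (fx, fy, fz)


skull = {(0, 0, 0), (1, 0, 0)}
force = drill_step(skull, tip=(-0.5, 0.0, 0.0), radius=1.0, stiffness=100.0)
print(force)  # (-50.0, 0.0, 0.0): the drill is pushed back out of the bone
print(skull)  # {(1, 0, 0)}: the contacted voxel has been drilled away
```

In a real haptic loop, which commonly runs at around 1 kHz, a force like this would be recomputed every cycle and sent to the device, while voxel removal updates what the surgeon sees in the headset.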

As a surgeon operates within the simulated environment, the system created by the team at JHU collects high-quality data in real time. This includes the surgeon's hand trajectory, the forces imparted by the virtual drill on the virtual skull, and stereo video footage.
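The article lists what the simulator records (hand trajectory, drill forces and stereo video) but not how the data are stored. The following is a minimal sketch of one possible time-stamped record, assuming each sample links a drill pose and force to a video frame index and is written out as JSON Lines. The field names, units and the `SessionRecorder` class are hypothetical. Path length is included because total distance travelled is a commonly used motion-based metric in surgical skill assessment.

```python
import json
import math
from dataclasses import asdict, dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Sample:
    t: float           # seconds since the session started
    drill_pos: Vec3    # drill (hand) position; units assumed to be mm
    drill_force: Vec3  # force between drill and virtual skull; units assumed to be N
    frame_id: int      # index of the stereo video frame captured at time t


@dataclass
class SessionRecorder:
    samples: List[Sample] = field(default_factory=list)

    def record(self, t: float, pos: Vec3, force: Vec3, frame_id: int) -> None:
        self.samples.append(Sample(t, tuple(pos), tuple(force), frame_id))

    def to_jsonl(self) -> str:
        """Serialize one JSON object per line, a convenient format for later analysis."""
        return "\n".join(json.dumps(asdict(s)) for s in self.samples)

    def path_length(self) -> float:
        """Total straight-line distance travelled by the drill between samples."""
        return sum(math.dist(a.drill_pos, b.drill_pos)
                   for a, b in zip(self.samples, self.samples[1:]))


rec = SessionRecorder()
rec.record(0.00, (0.0, 0.0, 0.0), (0.0, 0.0, 0.0), 0)
rec.record(0.01, (3.0, 4.0, 0.0), (0.2, 0.0, -0.1), 1)
print(rec.path_length())  # 5.0
print(rec.to_jsonl())
```

Comparing metrics like this between residents and expert surgeons is one way such recordings could feed the individualized training Munawar describes.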

"A noteworthy aspect of our work is that it offers the possibility of incorporating real patient models into our simulation environment and then deploying them for use by skilled surgeons and residents for training," Munawar said. "Additionally, the collected data from experts can be used for AI (Artificial Intelligence) algorithm development."

In the future, the VR simulator created by this team of researchers could be introduced in medical colleges, hospitals and healthcare settings, as a means to train new surgeons before they start operating on humans. In addition, this recent work could pave the way towards the widespread integration of VR-based surgical training tools with the collection of structured data to train AI agents.

"Our immediate plan is to deploy our system in the Johns Hopkins Otolaryngology—Head and Neck Surgery department to be used by skilled surgeons as well as residents for practice and data collection," Munawar added. "The collected data will be used to establish a quantitative evaluation protocol to characterize surgical performance, which is not presented by prior works. We shall also use the data for developing artificial intelligence algorithms for computer-assisted surgeries, such as tool/tissue tracking and 3D reconstruction algorithms."

More information: Virtual reality for synergistic surgical training and data generation. Computer Methods in Biomechanics and Biomedical Engineering: Imaging & Visualization (2021). DOI: 10.1080/21681163.2021.1999331

© 2021 Science X Network