Consumer protection: Increased data sovereignty for voice assistant users

While voice assistants like Amazon Alexa or Google Assistant may be a useful part of everyday life, they are coming under fire from data protection and consumer advocates. The virtual assistants are alleged to collect user data and transfer it to the cloud, where it can be transcribed and analyzed by third parties. Now, researchers from the Fraunhofer Institute for Applied Information Technology FIT are putting the issue under the microscope: In a living lab study involving 33 households, they are investigating how much voice assistants know about their households and what information they store. They are also launching a new platform to help the study participants exercise their personal data protection rights.

Voice assistants like Alexa and Google Assistant provoke starkly different reactions: While some people are excited by their potential for use in smart homes, others view them as the covert listening devices of the modern age. After all, the voice assistants (VAs) in smart speakers and smartphones collect data about users' daily lives, including their interactions with other connected devices, musical preferences and unintentional interactions. Placed in sensitive locations in the home, loudspeakers with built-in voice assistants are always operational and could potentially listen in on everything their users say, including conversations with children and visitors. All interactions with the device are stored on the manufacturers' cloud systems, as well as the servers of other service providers. While the General Data Protection Regulation (GDPR) does give consumers the right to access, modify or erase their collected data, most people do not know how to do this and could not easily work it out. Requests for access, in particular, return only a collection of raw data that is incomprehensible to non-experts, if they return anything at all.

That is why a research team from Fraunhofer FIT has launched CheckmyVA, a project that aims to bolster data sovereignty for voice assistant users and give them the support they need to better protect their privacy. In their work on the project, the researchers are collaborating closely with their project partners, the University of Siegen and the start-up company open.INC. CheckmyVA falls under the relatively new field of consumer informatics. The project is supported by funds of the Federal Ministry for the Environment, Nature Conservation, Nuclear Safety and Consumer Protection (BMUV) based on a decision of the Parliament of the Federal Republic of Germany via the Federal Office for Agriculture and Food (BLE) under the innovation support program.

A total of 33 households from across Germany are taking part in the project, including families, couples and single-person households. The researchers will monitor the participants in an experimental learning environment known as a living lab for a period of almost three years. During that time, they will hold regular discussions with the participants about their voice assistant use and any concerns that they have on the subject. These conversations will center on questions such as the following: How do they use the voice assistant (primarily Amazon Alexa and Google Assistant)? Do they use smart home functions? Is the voice assistant always left on, or is it only activated when needed? Does their use change over the course of the project? Are the households aware of what happens to the recordings? What data protection practices do the users employ? What problems arise when they do? The discussions held around these questions will be used as a springboard for developing user-centric solutions.

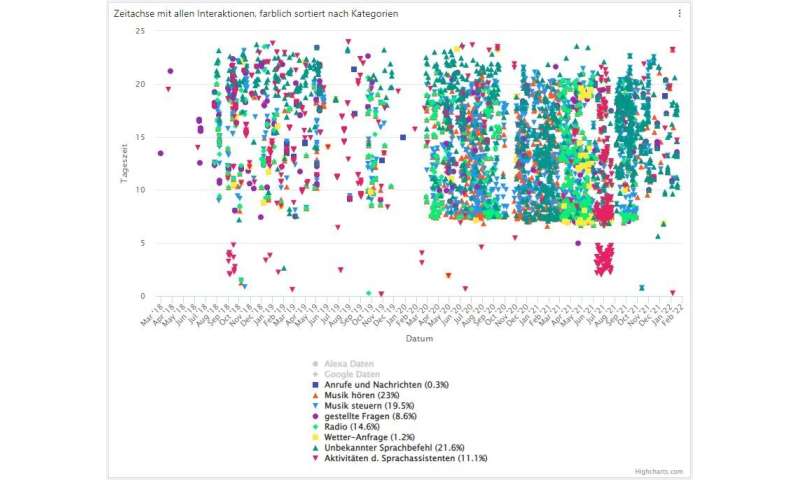

The timeline shows all stored interactions with the voice assistant from the initial use onward. Users can create categories (see legend below) in order to distinguish different types of voice commands and identify initial behavioral patterns. Credit: Fraunhofer-Gesellschaft

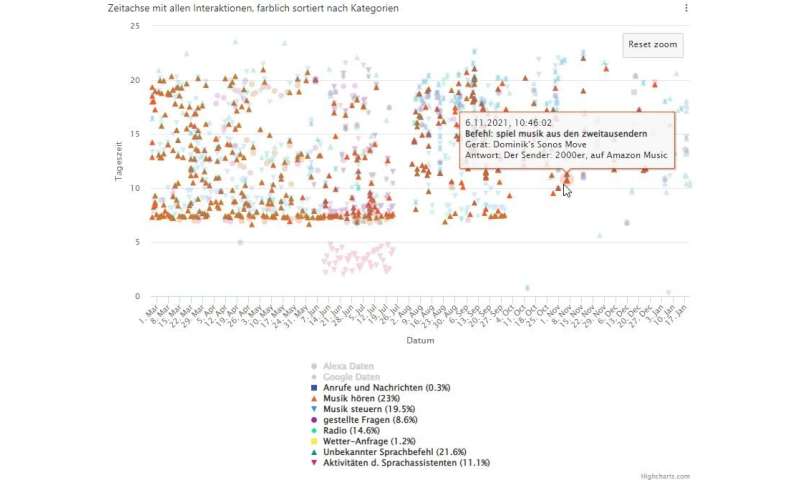

Users can select a specific period of time within the timeline and receive additional information about a specific voice command (see the speech bubble in the middle). Credit: Fraunhofer-Gesellschaft

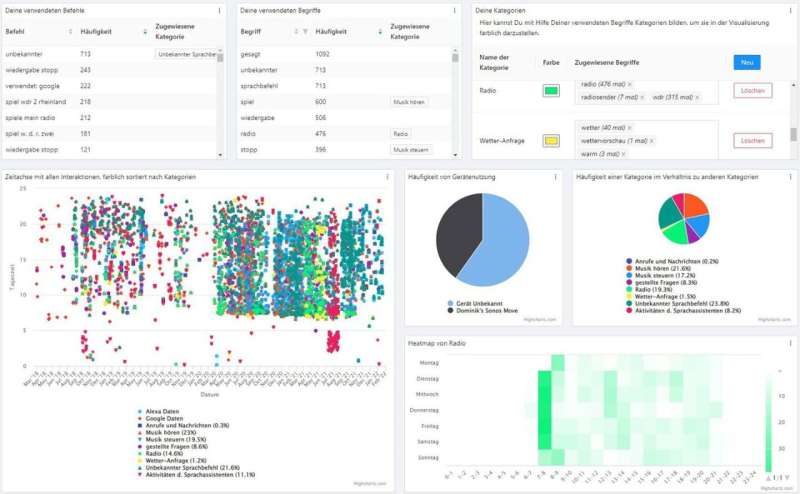

Data literacy through data visualization

The researchers' concrete aim is to develop a new platform based on Design for All principles that will support people in exercising their GDPR rights. Using established data science and AI methods, the platform will process the data and create a user-centered visualization, with the aim of making users aware of the behavioral patterns that can be deduced from their data and of the purposes for which third parties could use this information. What's more, the platform will provide them with a simple tool they can use to assert and exercise their GDPR rights, such as the right to erasure of data or the right to revoke consent to the use of their data. "The platform will have a dashboard that runs as a browser plug-in and allows users to request a copy of their data in an uncomplicated way. The link to the privacy settings is easy to find," says Dominik Pins, project coordinator and scientist in the Human-Centered Engineering and Design department at Fraunhofer FIT. The dashboard shows conversations with VAs on a timeline and makes the transcriptions transparent. For example, it shows what commands were given and how often a user gave a command unintentionally. The dashboard also shows the VA's answers and offers other functions, such as filtering and categorization, that make the data easier to manage. "The platform helps users to reflect on their data traces, which will in turn improve their data literacy," Pins explains.

The dashboard can be downloaded free of charge for Google Chrome and Mozilla Firefox, either via the browser settings or from the project website.