June 17, 2022 feature

A technique to teach bimanual robots stir-fry cooking

As robots make their way into a variety of real-world environments, roboticists are trying to ensure that they can efficiently complete a growing number of tasks. For robots that are designed to assist humans in their homes, this includes household chores, such as cleaning, tidying up and cooking.

Researchers at the Idiap Research Institute in Switzerland, the Chinese University of Hong Kong (CUHK) and Wuhan University (WHU) have recently developed a machine learning-based method to teach robots stir-fry, a Chinese cooking technique. Their method, presented in a paper published in IEEE Robotics and Automation Letters, combines a transformer-based model with a graph neural network (GNN).

"Our recent work is the joint effort of three labs: the Robot Learning & Interaction group led by Dr. Sylvain Calinon at the Idiap Research Institute, the Collaborative and Versatile Robots laboratory led by Prof. Fei Chen at CUHK, and the lab led by Prof. Miao Li at WHU," Junjia Liu, one of the researchers who carried out the study, told TechXplore. "Our three labs have been studying and working together for about ten years. We have a particular interest in making intelligent robots that can prepare food for people."

Dr. Calinon, Prof. Chen and Prof. Li have been working to improve the cooking skills of robots for several years. In their recent study, they decided to focus on Chinese culinary arts, specifically stir-fry, a cooking technique that entails frying ingredients over high heat while stirring them, generally in a wok.

"While domestic service robots have been developed considerably in recent years, creating a robot chef in the semi-structured kitchen environment remains a grand challenge," Liu said.

"Food preparation and cooking are two crucial activities in the household, and a robot chef that can follow arbitrary recipes and cook automatically would be practical and bring a new interactive entertainment experience."

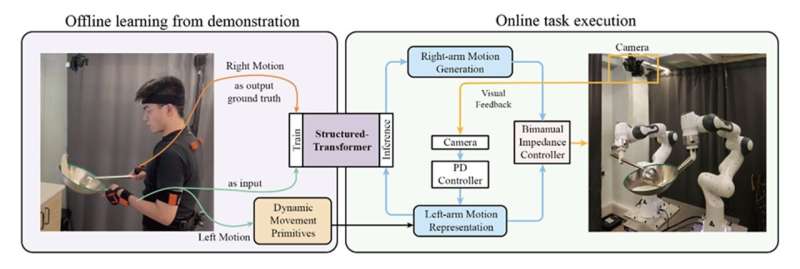

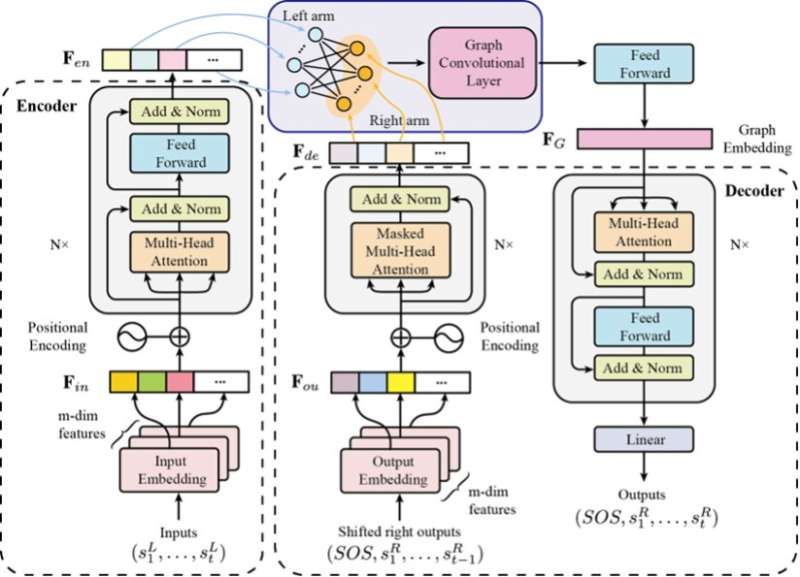

Stir-fry involves complex bimanual skills that are difficult to teach to robots. To address this, Liu and his colleagues trained a bimanual coordination model, which they call a "structured-transformer," on human demonstrations.

"This mechanism regards coordination as a sequence transduction problem between the movements of both arms and adopts a combined model of transformer and GNN to achieve this," Liu explained. "Thus, in the online process, the left-arm movement is adjusted according to the visual feedback, and the corresponding right-arm movement is generated by the pre-trained structured-transformer model based on the left-arm movement."
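The online loop Liu describes can be illustrated with a toy sketch. It is not the authors' implementation. The pre-trained structured-transformer is replaced by a hypothetical stand-in, `predict_right`. This stand-in applies softmax attention, scored by negative squared distance, over stored demonstration pairs to map a left-arm pose to a right-arm pose. The visual-feedback adjustment is assumed to be a simple proportional correction. Poses are reduced to 2D points, and every function name, gain and temperature value here is illustrative.

```python
import math
from typing import List, Tuple

# Simplified 2D end-effector position (an assumption; real arms have full 6-DoF poses).
Pose = Tuple[float, float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict_right(left: Pose, demos: List[Tuple[Pose, Pose]], temperature: float = 0.1) -> Pose:
    """Hypothetical stand-in for the pre-trained structured-transformer.

    Query = current left-arm pose, keys = demonstrated left-arm poses,
    values = paired right-arm poses. Returns the attention-weighted
    average of the values.
    """
    # Attention score: negative squared distance between query and each key.
    scores = [-((left[0] - k[0]) ** 2 + (left[1] - k[1]) ** 2) / temperature for k, _ in demos]
    weights = softmax(scores)
    x = sum(w * v[0] for w, (_, v) in zip(weights, demos))
    y = sum(w * v[1] for w, (_, v) in zip(weights, demos))
    return (x, y)


def adjust_left(left: Pose, observed_disp: Pose, target_disp: Pose, gain: float = 0.5) -> Pose:
    """Assumed proportional correction of the left arm from visual feedback."""
    ex = target_disp[0] - observed_disp[0]
    ey = target_disp[1] - observed_disp[1]
    return (left[0] + gain * ex, left[1] + gain * ey)


def coordination_step(left: Pose, observed_disp: Pose, target_disp: Pose,
                      demos: List[Tuple[Pose, Pose]]) -> Tuple[Pose, Pose]:
    """One online step: adjust the left arm, then generate the right arm from it."""
    new_left = adjust_left(left, observed_disp, target_disp)
    new_right = predict_right(new_left, demos)
    return new_left, new_right


if __name__ == "__main__":
    # Toy demonstrations: the right arm follows the left arm's x position, offset by 1 in y.
    demos = [((0.0, 0.0), (0.0, 1.0)), ((1.0, 0.0), (1.0, 1.0)), ((2.0, 0.0), (2.0, 1.0))]
    left, right = coordination_step((0.0, 0.0), (0.0, 0.0), (2.0, 0.0), demos)
    print(left, right)
```

The sketch preserves the key idea of the decoupled design: the left arm reacts to what the camera sees, and the right arm is generated from the left. The model described in the paper, however, transduces whole movement sequences and combines a transformer with a GNN, rather than looking up single poses.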

The researchers assessed their model's performance both in simulation and on a physical dual-arm platform based on Panda robot arms. In these tests, the model allowed the robot to reproduce the motions involved in stir-fry successfully and realistically.

"The main contribution of this paper is to consider the coordination mechanism of bimanual robots explicitly in the form of sequence transduction," Liu said. "Compared with classical learning from demonstration methods and deep learning/reinforcement learning based methods, our decoupled framework skillfully combines both these techniques. In fact, it can have both the generalization of the former and the expressivity of the latter."

In the future, the team's model could enable robots that cook meals both in homes and at public venues. The same approach could also be used to train robots on other tasks that require two arms and hands. Meanwhile, Liu and his colleagues plan to keep working on their model to improve its performance and generalizability.

"We will now introduce higher dimensional information to learn more humanoid motion in kitchen skills, such as visual and electromyography signals," Liu added. "The estimation of semi-fluid contents in this work was simplified as two-dimensional image segmentation, and we only used the relative displacement as the desired target. Thus, we also plan to propose a more comprehensive framework that consists of both the movements of bimanual manipulators and the state change of the object."
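Liu notes that the state of the semi-fluid contents was reduced to 2D image segmentation, with relative displacement as the target. The article does not say how that displacement is computed. The sketch below shows one plausible reading, offered purely as an assumption: take the centroid of the segmented food region in each frame and use its shift between two frames as the displacement. The mask format and function names are hypothetical.

```python
from typing import List, Optional, Tuple

# Hypothetical binary segmentation mask: 1 = food pixel, 0 = background.
Mask = List[List[int]]


def centroid(mask: Mask) -> Optional[Tuple[float, float]]:
    """Return the (row, col) centroid of the foreground pixels, or None if the mask is empty."""
    total = 0
    sum_r = 0
    sum_c = 0
    for r, row in enumerate(mask):
        for c, value in enumerate(row):
            if value:
                total += 1
                sum_r += r
                sum_c += c
    if total == 0:
        return None
    return (sum_r / total, sum_c / total)


def relative_displacement(prev_mask: Mask, curr_mask: Mask) -> Optional[Tuple[float, float]]:
    """Shift of the food-region centroid between two frames, in pixels (row, col)."""
    prev = centroid(prev_mask)
    curr = centroid(curr_mask)
    if prev is None or curr is None:
        return None
    return (curr[0] - prev[0], curr[1] - prev[1])


if __name__ == "__main__":
    before = [[1, 1, 0, 0],
              [1, 1, 0, 0]]
    after = [[0, 0, 1, 1],
             [0, 0, 1, 1]]
    print(relative_displacement(before, after))  # (0.0, 2.0): the region moved two columns right
```

A centroid shift captures only where the contents moved on average and ignores how they spread or clump. This limitation is why the team plans a framework that also models the object's state change.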

More information: Junjia Liu et al., Robot Cooking With Stir-Fry: Bimanual Non-Prehensile Manipulation of Semi-Fluid Objects, IEEE Robotics and Automation Letters (2022). DOI: 10.1109/LRA.2022.3153728

© 2022 Science X Network