February 22, 2022 feature

A reachability-expressive motion planning algorithm to enhance human-robot collaboration

A team of researchers at the Center for Vision, Cognition, Learning, and Autonomy (VCLA) at the University of California, Los Angeles (UCLA), led by Prof. Song-Chun Zhu, recently developed an approach that could help to align a human user's assessment of what a robot can do with its true capabilities. This approach, presented in a paper published in IEEE Robotics and Automation Letters, is based on a new algorithm that simultaneously optimizes the physical cost and the expressiveness of a robot's motion, so that human observers can better estimate its reachable workspace.

"In human society, people have different roles based on their expertise and capabilities," Xiaofeng Gao, one of the researchers who carried out the study, told TechXplore. "Such capability-aware role assignments allow humans to collaborate with each other more efficiently. We believe that when humans are working with robots, it is equally important for them to understand the robot's capability, as a failure to do so can affect their trust in and acceptance of robots."

Gao and his colleagues feel that it is crucial for humans to be able to accurately estimate a robot's capabilities. If users underestimate a robot's abilities, they might not use it; if they overestimate them, they could be disappointed or use the robot in situations where it might cause critical errors.

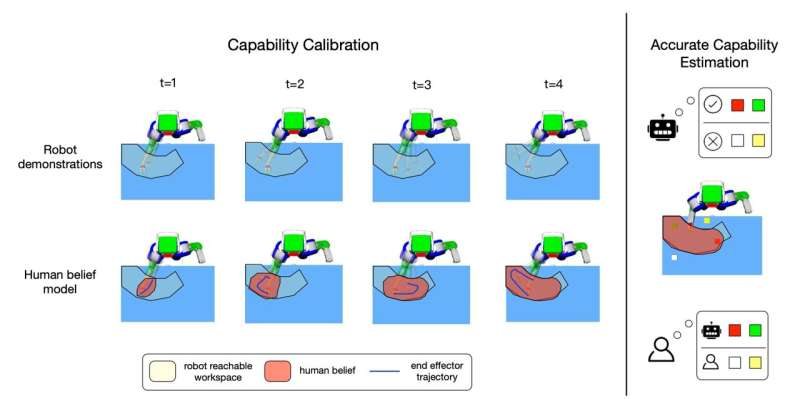

The key goal of the researchers' study was to help humans gain a good understanding of a robot's reachable workspace through a capability calibration process that involves several motion demonstrations. In addition, the team wanted to explore the extent to which accurately gauging the abilities of robots could help humans to assign suitable roles to them in collaboration tasks.

"We propose reachability-expressive motion planning (REMP), an algorithm that generates expressive motion demonstrations to calibrate the perceived robot reachability via trajectory optimization," Gao explained. "One unique feature of REMP is that it models how the human belief of the robot's reachable workspace changes after each trajectory. As a result, it can improve the human's reachability understanding of a robot quite efficiently, as only a small number of demonstrations are necessary to achieve decent calibration."

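The idea Gao describes, choosing each demonstration by weighing how much it corrects an observer's belief against how costly the motion is, can be illustrated with a small toy model. The sketch below is not the authors' REMP implementation: it swaps trajectory optimization for a greedy search over a handful of straight-line reaches, and the belief update rule, the circular workspace, and every name and parameter (`REACH`, `sigma`, `cost_weight`, `plan_demonstrations`) are assumptions made purely for illustration.

```python
import math

def make_grid(n=11, extent=1.0):
    """Cell centers of an n x n grid covering [-extent, extent]^2."""
    step = 2 * extent / (n - 1)
    return [(-extent + i * step, -extent + j * step)
            for i in range(n) for j in range(n)]

def straight_reach(target, samples=10):
    """Waypoints on a straight line from the robot base (0, 0) to target."""
    return [(target[0] * t / samples, target[1] * t / samples)
            for t in range(samples + 1)]

def update_belief(belief, cells, truth, waypoints, sigma=0.2):
    """Assumed observer model: cells close to the demonstrated motion
    have their belief pulled toward the true reachability."""
    updated = []
    for b, (cx, cy), t in zip(belief, cells, truth):
        d2 = min((cx - wx) ** 2 + (cy - wy) ** 2 for wx, wy in waypoints)
        weight = math.exp(-d2 / (2 * sigma ** 2))
        updated.append(b + weight * (t - b))
    return updated

def belief_error(belief, truth):
    """Mean absolute gap between believed and true reachability."""
    return sum(abs(b - t) for b, t in zip(belief, truth)) / len(truth)

def plan_demonstrations(cells, truth, candidates, k=3, cost_weight=0.05):
    """Greedily pick k reaches, trading belief improvement
    (expressiveness) against path length (physical cost)."""
    belief = [0.5] * len(cells)  # observer starts out undecided
    chosen = []
    for _ in range(k):
        best = None
        for target in candidates:
            if target in chosen:
                continue
            updated = update_belief(belief, cells, truth,
                                    straight_reach(target))
            gain = belief_error(belief, truth) - belief_error(updated, truth)
            score = gain - cost_weight * math.hypot(*target)
            if best is None or score > best[0]:
                best = (score, target, updated)
        _, target, belief = best
        chosen.append(target)
    return chosen, belief

# Hypothetical setup: a circular reachable workspace of radius REACH.
REACH = 0.7
cells = make_grid()
truth = [1.0 if math.hypot(x, y) <= REACH else 0.0 for x, y in cells]
candidates = [(REACH * math.cos(i * math.pi / 4),
               REACH * math.sin(i * math.pi / 4)) for i in range(8)]

demos, belief = plan_demonstrations(cells, truth, candidates)
print("chosen targets:", [(round(x, 2), round(y, 2)) for x, y in demos])
print("belief error after demos:", round(belief_error(belief, truth), 3))
```

In this toy setting the modeled belief is updated after every selected reach, so each new demonstration is scored against what the observer is already assumed to know rather than against a blank slate. That is the property Gao highlights, although the actual algorithm and human belief model in the paper are more sophisticated.
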
Gao and his colleagues evaluated their algorithm in a series of experiments involving human participants. In these tests, they compared its performance with that of two baseline methods, which use purely functional motions and randomly traversing trajectories as demonstrations. Remarkably, they found that their method could significantly improve the users' estimation of a robot's reachable workspace, while also enhancing human-robot collaboration.

"We are excited to see that when using our method, users perceive the robot more positively, as the robot is considered to be more reliable, more predictable and easier to understand," Gao said. "These results highlight the necessity of building intelligent machines that are aware of people they work with and help us envision a better future where humans and AIs can work together."

The team's project was funded by a DARPA Explainable Artificial Intelligence (XAI) grant. In the future, the algorithm they developed could help to enhance the collaboration skills of both existing and newly developed robotic systems.

Because the team conducted their experiments online, they have so far only been able to investigate their algorithm's performance on a 2D plane. In their next studies, however, they plan to develop their method further so that it can also be applied in 3D environments.

"As reaching is one of the most basic tasks in human-robot interaction, we believe understanding reachability greatly helps users understand robot capacities in different tasks," Gao added. "We view our work as a successful first step towards a more general capability calibration setting. We are now also interested in using a variety of other modalities (e.g., speech, gesture) as means of communicating capabilities."

More information: Xiaofeng Gao et al, Show Me What You Can Do: Capability Calibration on Reachable Workspace for Human-Robot Collaboration, IEEE Robotics and Automation Letters (2022). DOI: 10.1109/LRA.2022.3144779

© 2022 Science X Network