New tool enables comprehensive evaluation of datacenter performance

Datacenters are used by a myriad of companies, institutions and other operations, including large-scale online services such as e-commerce, search engines, online maps, social media, advertising and more. These datacenters co-locate workloads, which involves sharing the resources of the datacenter to improve server utilization.

However, this can lead to performance degradation. To study this problem and find potential solutions, researchers need to have tools for evaluating workload co-location. While such tools have been developed before, they may only measure one aspect at the expense of other factors, limiting their usefulness.

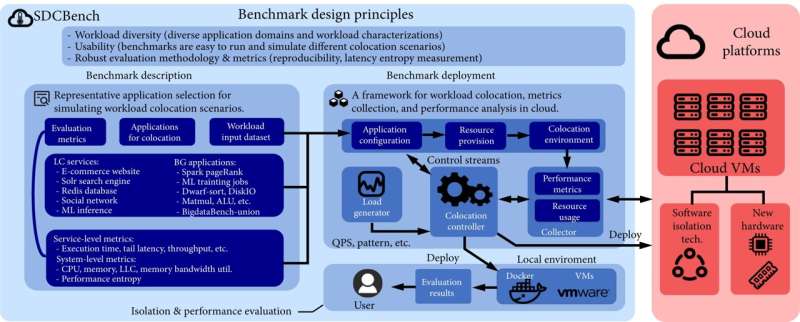

A team of researchers from Tianjin University and Dalian University of Technology, both in China, has now developed a benchmark suite for workload co-location, called SDCBench, to address these shortcomings and provide a comprehensive analysis.

The research was published in Intelligent Computing on Sept. 7.

"Workload co-location can cause performance interference that can cause unpredictable performance degradation for cloud services, which not only reduces the user experience but also hurts resource efficiency in datacenters," said corresponding author Laiping Zhao, associate professor at the Tianjin Key Lab of Advanced Networking at the College of Intelligence and Computing at Tianjin University, China.

To overcome this issue, researchers try to enhance the isolation ability of cloud systems, meaning how well a system keeps co-located workloads from degrading one another's performance, through both hardware and software approaches. However, the suggested solutions can require software updates or new hardware, which some cloud providers cannot or will not provide.

"The need for predictable service performance in datacenters brings new challenges and opportunities for cloud system design that seek to improve server-level resource utilization but do not hurt application-level performance," Zhao said.

"Unfortunately, the lack of a comprehensive suite of workload colocation benchmarks makes studying this emerging problem challenging. A workload colocation benchmark can help cloud providers understand and improve the infrastructures' isolation capabilities, thereby increasing their adoption by cloud users."

The researchers developed SDCBench, a benchmark suite for workload co-location that includes 16 latency-critical services and applications spanning a wide range of cloud scenarios. Latency-critical means that there must be very little lag in the response time.

"SDCBench enables cloud tenants to understand the performance isolation ability in datacenters and choose their best-fitted cloud services," Zhao said. "For cloud providers, it also helps them to improve the quality of service to increase their revenue."

Alongside the new benchmark suite, the researchers propose the concept of latency entropy to measure the uncertainty of cloud systems. The concept is inspired by entropy in physics, which describes the degree of disorder within a system.

"When shared resource contention occurs between different applications, system behaviors become disorderly and unpredictable," Zhao said. "To help users understand the application performance changes with observable metrics, SDCBench defines the latency entropy that describes the variations of tail latency for the measurement of system isolation ability."
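To make the idea concrete, here is a minimal sketch of an entropy-style measure over tail latency. It is not the formula from the SDCBench paper, which this article does not give. It assumes that each co-location run yields one tail-latency value, that each value is divided by the tail latency of the application running alone, and that the resulting slowdowns are grouped into fixed-width bins whose Shannon entropy is reported. The function names, the `baseline` parameter and the `bin_width` of 0.25 are all hypothetical choices made for illustration.

```python
import math
from collections import Counter


def tail_latency(samples, quantile=0.99):
    """Return the nearest-rank percentile of a list of latency samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(quantile * len(ordered)))
    return ordered[rank - 1]


def latency_entropy(tail_latencies, baseline, bin_width=0.25):
    """Shannon entropy (in bits) of binned tail-latency slowdowns.

    Illustrative assumption, not the paper's definition: each tail latency
    is divided by the solo-run `baseline`, and the slowdowns are grouped
    into bins of width `bin_width`.
    """
    if not tail_latencies:
        raise ValueError("tail_latencies must not be empty")
    if baseline <= 0 or bin_width <= 0:
        raise ValueError("baseline and bin_width must be positive")
    bins = Counter(
        math.floor((latency / baseline) / bin_width)
        for latency in tail_latencies
    )
    total = len(tail_latencies)
    # Sum p * log2(1 / p) over the occupied bins.
    return sum(
        (count / total) * math.log2(total / count)
        for count in bins.values()
    )


# Hypothetical p99 latencies (ms) of one service across four co-location runs.
well_isolated = [10.0, 10.5, 11.0, 10.2]
poorly_isolated = [10.0, 15.0, 22.0, 40.0]

print(latency_entropy(well_isolated, baseline=10.0))
print(latency_entropy(poorly_isolated, baseline=10.0))
```

With these made-up numbers, the well-isolated runs all fall into the same bin and score 0.0 bits, while the poorly isolated runs fall into four different bins and score 2.0 bits. Under this sketch, a higher value means tail latency is less predictable when workloads share a server.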

The researchers demonstrated that SDCBench can simulate different cloud scenarios by co-locating workloads with simple configurations. They also evaluated and compared latency entropy in major cloud providers using their benchmark tool.

According to Zhao, one of the most exciting aspects of the research is that SDCBench and a comprehensive framework based on it are publicly available.

"We've implemented a comprehensive evaluation framework based on SDCBench that can automatically configure, deploy and evaluate applications on cloud platforms, and that framework is open source and can be easily extended to new cloud systems," Zhao said.

More information: Yanan Yang et al, SDCBench: A Benchmark Suite for Workload Colocation and Evaluation in Datacenters, Intelligent Computing (2022). DOI: 10.34133/2022/9810691