Making mind-controlled robots a reality

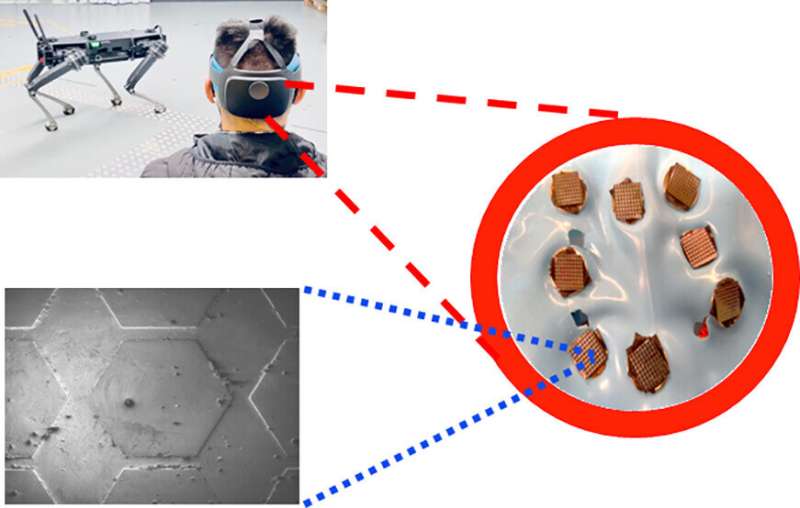

Researchers from the University of Technology Sydney (UTS) have developed biosensor technology that will allow people to operate devices such as robots and machines by thought alone.

The advanced brain-computer interface was developed by Distinguished Professor Chin-Teng Lin and Professor Francesca Iacopi, from the UTS Faculty of Engineering and IT, in collaboration with the Australian Army and Defence Innovation Hub.

As well as defense applications, the technology has significant potential in fields such as advanced manufacturing, aerospace and healthcare, for example by allowing people with a disability to control a wheelchair or operate prosthetics.

"The hands-free, voice-free technology works outside laboratory settings, anytime, anywhere. It makes interfaces such as consoles, keyboards, touchscreens and hand-gesture recognition redundant," said Professor Iacopi.

"By using cutting edge graphene material, combined with silicon, we were able to overcome issues of corrosion, durability and skin contact resistance, to develop the wearable dry sensors," she said.

A new study outlining the technology has just been published in ACS Applied Nano Materials. It shows that the graphene sensors developed at UTS are very conductive, easy to use and robust.

The hexagon-patterned sensors are positioned over the back of the scalp to detect brainwaves from the visual cortex. The sensors are resilient to harsh conditions, so they can be used in extreme operating environments.

The user wears a head-mounted augmented reality lens that displays white flickering squares. When the operator concentrates on a particular square, the biosensor picks up the resulting brainwaves, and a decoder translates the signal into commands.
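To give a rough sense of how such a decoder might work, here is a minimal sketch in Python. It is not the UTS team's method. It assumes each square flickers at its own frequency, so that the square being watched shows up as the strongest matching frequency in the visual-cortex signal. The flicker frequencies, command names, sampling rate and the synthetic test signal are all hypothetical and chosen only for illustration.

```python
import math


def band_power(samples, sample_rate_hz, freq_hz):
    """Power of `samples` at one frequency, from a single-bin Fourier sum."""
    step = 2 * math.pi * freq_hz / sample_rate_hz
    re = sum(s * math.cos(step * n) for n, s in enumerate(samples))
    im = sum(s * math.sin(step * n) for n, s in enumerate(samples))
    return (re * re + im * im) / len(samples) ** 2


def decode_command(samples, sample_rate_hz, freq_to_command):
    """Return the command whose flicker frequency carries the most power."""
    best_freq = max(
        freq_to_command,
        key=lambda f: band_power(samples, sample_rate_hz, f),
    )
    return freq_to_command[best_freq]


# Hypothetical mapping from flicker frequency (Hz) to robot command.
FREQ_TO_COMMAND = {8.0: "forward", 10.0: "left", 12.0: "right"}


def synthetic_signal(target_hz, sample_rate_hz=250, seconds=2.0):
    """Stand-in for a recorded signal: the attended flicker plus 50 Hz hum."""
    n_samples = int(sample_rate_hz * seconds)
    return [
        math.sin(2 * math.pi * target_hz * n / sample_rate_hz)
        + 0.5 * math.sin(2 * math.pi * 50.0 * n / sample_rate_hz)
        for n in range(n_samples)
    ]


if __name__ == "__main__":
    signal = synthetic_signal(10.0)
    print(decode_command(signal, 250, FREQ_TO_COMMAND))
```

Real recordings are far noisier than this synthetic signal, and practical decoders typically combine several sensors and use more robust statistics than a single frequency bin.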

The technology was recently demonstrated by the Australian Army, where soldiers operated a Ghost Robotics quadruped robot using the brain-machine interface. The device allowed hands-free command of the robotic dog with up to 94% accuracy.

"Our technology can issue at least nine commands in two seconds. This means we have nine different kinds of commands and the operator can select one from those nine within that time period," Professor Lin said.
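One common way to put figures like these on a single scale is the information transfer rate used in brain-computer interface research (the Wolpaw formula). The sketch below is an illustration only: it assumes the nine-command, two-second selection and the "up to 94%" accuracy quoted in this article apply to the same setup, which the article does not state. The function name and parameters are hypothetical.

```python
import math


def bits_per_selection(n_commands, accuracy):
    """Wolpaw information transfer per selection, in bits.

    Assumes every command is equally likely and that errors are spread
    evenly over the remaining commands.
    """
    if n_commands < 2 or not 0.0 < accuracy <= 1.0:
        raise ValueError("need at least two commands and 0 < accuracy <= 1")
    bits = math.log2(n_commands) + accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        # Contribution of the (1 - accuracy) share of wrong selections.
        bits += (1 - accuracy) * math.log2((1 - accuracy) / (n_commands - 1))
    return bits


if __name__ == "__main__":
    # Figures quoted in the article; pairing them is an assumption.
    per_selection = bits_per_selection(n_commands=9, accuracy=0.94)
    selections_per_minute = 60 / 2.0  # one selection every two seconds
    print(f"{per_selection:.2f} bits per selection")
    print(f"{per_selection * selections_per_minute:.1f} bits per minute")
```

A perfectly accurate nine-way choice would carry log2(9), or about 3.17 bits, so any error rate pulls the figure below that ceiling.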

"We have also explored how to minimize noise from the body and environment to get a clearer signal from an operator's brain," he said.

The researchers believe the technology will be of interest to the scientific community, industry and government, and hope to continue making advances in brain-computer interface systems.

More information: Shaikh Nayeem Faisal et al, Noninvasive Sensors for Brain–Machine Interfaces Based on Micropatterned Epitaxial Graphene, ACS Applied Nano Materials (2023). DOI: 10.1021/acsanm.2c05546