This article has been reviewed according to Science X's editorial process and policies. Editors have marked it as fact-checked, from a trusted source, and proofread.

Researchers use table tennis to understand human-robot dynamics in agile environments

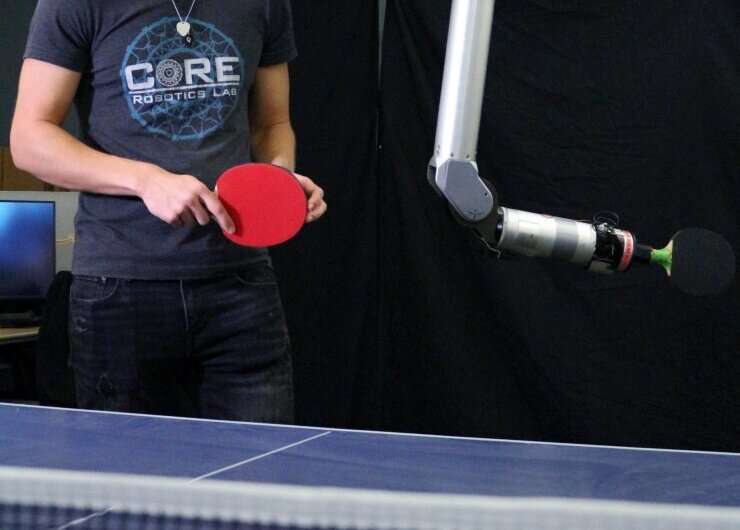

A team of researchers led by Matthew Gombolay, an assistant professor in the School of Interactive Computing and director of the Cognitive Optimization and Relational (CORE) Robotics Lab at Georgia Tech, is using the sport of table tennis to show that humans may not always trust a robot's explanation of its intended action.

They have developed a collaborative robot, or "cobot," that uses table tennis to demonstrate the areas in which a robot could work closely with human partners to complete tasks.

The robot, a Barrett WAM arm equipped with a camera and a paddle, was trained through a machine learning process called imitation learning. The researchers also developed a system that gives the robot positive reinforcement for successful volleys and negative reinforcement for unsuccessful ones.

"We have also trained our robot to be a safe table tennis partner," said Gombolay. "We leveraged prior work on table tennis and 'learning from demonstration' techniques, in which a human can teach a robot a skill, such as how to hit a table tennis shot, or simply have the human demonstrate the task to the robot."

According to Gombolay, the project demonstrates the potential for robots to work closely with humans in physical and social capacities, a significant step forward for collaborative robotics. Intelligent systems that can work collaboratively with humans have numerous applications, from manufacturing and health care to education.

In some cases, the researchers found that participants did not trust the explanations given by the robot and were less likely to collaborate with it as a result. One potential reason is that participants doubted the robot shared the goals or motivations of its human partner. Participants in the study were more likely to trust a robot's explanation when they felt that the robot shared their goals and motivations.

"If we can figure out how to safely enable humans and robots to work together in extreme cases, that should give us insights into how to support interaction in a broad variety of settings," said Gombolay. His co-authored study highlights the importance of developing robots that can communicate with humans in a way that builds trust and confidence. This is particularly important in settings where the consequences of a mistake or miscommunication can be severe, such as health care or emergency situations.

"While the choice to work with a robot is ultimately an objective behavior and may vary based upon context or how risky the interaction is, it is ultimately this trust factor that is a key driving force behind your decision-making and behavior," said Gombolay. "In practice, we often find that people design and deploy impressive robotic solutions, but that robot was not designed to engender the appropriate level of trust from the human end-users."

The researchers suggest that a possible solution may be to design robots that are more transparent in their decision-making processes. By providing human partners with a clear understanding of how the robot arrived at a particular decision or action, it may be possible to build trust and confidence in the robot's abilities.

"The greater goal of the project is to understand how to design robots for fast-paced, proximate interactions in manufacturing, logistics warehouses, restaurant kitchens, and even in homes. We need to know how to design physically safe systems, of course, but we also need to know what users find intuitive and trustworthy—what makes users feel safe," said Gombolay.

"Otherwise, these robots will never make it out of the lab to coexist with people. I believe our work answers key questions in helping design robots for interaction with people, particularly involving how robots convey their intentions to their human counterparts. But, of course, the research opens even more exciting opportunities than existed before."

Such an approach could eventually drive more effective collaboration between humans and robots in a variety of settings.

The paper will be presented at the 18th Annual ACM/IEEE International Conference on Human-Robot Interaction (HRI), March 13–16, 2023, in Stockholm, Sweden.

More information: The Effect of Robot Skill Level and Communication in Rapid, Proximate Human-Robot Collaboration. DOI: 10.1145/3568162.3577002