AniFaceDrawing: Delivering generative AI-powered high-quality anime portraits for beginners

Anime, the Japanese art of animation, comprises hand-drawn sketches in an abstract form with unique characteristics and exaggerations of real-life subjects. While generative artificial intelligence (AI) has found use in content creation, such as generating anime portraits, using it to augment human creativity and guide freehand drawing remains challenging.

The primary challenge lies in generating suitable reference images that correspond to the incomplete and abstract strokes made during freehand drawing. This is particularly true when the strokes offer insufficient information for generative AI to predict the final shape of the drawing.

To tackle this problem, a research team from the Japan Advanced Institute of Science and Technology (JAIST) and Waseda University in Japan sought to develop a novel generative AI tool that offers progressive drawing assistance and helps generate anime portraits from freehand sketches.

The tool is based on a sketch-to-image (S2I) deep learning framework that matches raw sketches with latent vectors of the generative model. It employs a two-stage training strategy built on the pre-trained Style Generative Adversarial Network (StyleGAN), a state-of-the-art generative model that uses adversarial networks to generate new images.

The team was led by Dr. Zhengyu Huang from JAIST and included Associate Professor Haoran Xie and Professor Kazunori Miyata, along with Lecturer Tsukasa Fukusato from Waseda University. They proposed "stroke-level disentanglement," a novel strategy that associates the input strokes of a freehand sketch with edge-related attributes in the latent structural code of StyleGAN.

This approach allows users to manipulate the attribute parameters, giving them greater autonomy over the properties of the generated images. Dr. Huang says, "We introduced an unsupervised training strategy for stroke-level disentanglement in StyleGAN, which enables the automatic matching of rough sketches with sparse strokes to the corresponding local parts in anime portraits, all without the need for semantic labels."

This study will be presented at ACM SIGGRAPH 2023, the premier conference for computer graphics and interactive techniques and the only conference in this research field worldwide with a CORE ranking of A*.

Regarding the development of the tool, Prof. Xie adds, "We first trained an image encoder using a pre-trained StyleGAN model as a teacher encoder. In the second stage, we simulated the drawing process of generated images without additional data to train the sketch encoder for incomplete progressive sketches. This helped us generate high-quality portrait images that align with the disentangled representations of teacher encoder."
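The second training stage described above, in which the drawing process is simulated to produce incomplete progressive sketches, can be illustrated with a small Python sketch. This is not the authors' implementation. It assumes, purely for illustration, that a finished sketch is a list of labeled strokes, that a drawing session is a random ordering of those strokes, and that every partial sketch is paired with the same target code from the teacher encoder. The names `simulate_drawing_process`, `make_training_pairs`, and `w_teacher` are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

# Hypothetical stand-in for one stroke; a real system would use line-art pixels.
Stroke = str


def simulate_drawing_process(
    strokes: Sequence[Stroke], rng: random.Random
) -> List[Tuple[Stroke, ...]]:
    """Return progressively more complete sketches in a random stroke order."""
    order = list(strokes)
    rng.shuffle(order)  # assumption: users may draw the parts in any order
    # The k-th partial sketch holds the first k strokes of the shuffled order.
    return [tuple(order[:k]) for k in range(1, len(order) + 1)]


def make_training_pairs(
    strokes: Sequence[Stroke], target_code: str, rng: random.Random
) -> List[Tuple[Tuple[Stroke, ...], str]]:
    """Pair every partial sketch with the code of the finished portrait."""
    partial_sketches = simulate_drawing_process(strokes, rng)
    return [(partial, target_code) for partial in partial_sketches]


# Illustrative stroke labels and a placeholder for the teacher encoder's code.
full_sketch = ["face outline", "left eye", "right eye", "nose", "mouth", "hair"]
pairs = make_training_pairs(full_sketch, target_code="w_teacher", rng=random.Random(0))

for partial, code in pairs:
    print(len(partial), "strokes ->", code)
```

Because each partial sketch shares its target with the finished drawing, a sketch encoder trained on such pairs would be pushed to predict a complete portrait even from a few strokes, which matches the progressive guidance the article describes.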

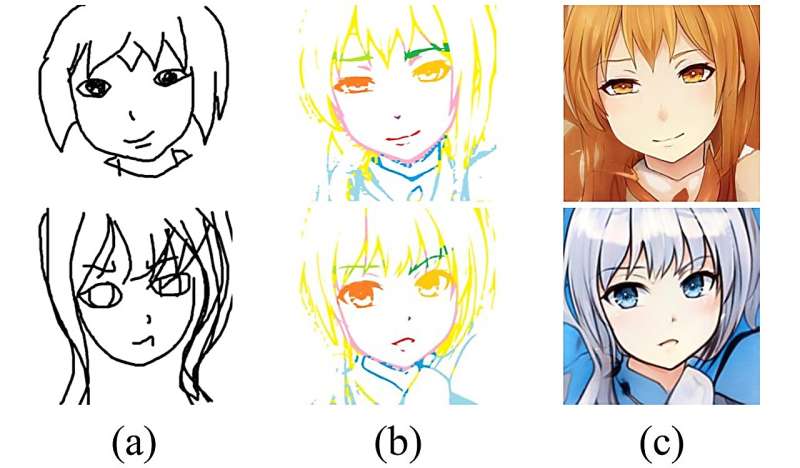

To further highlight the effectiveness and usability of AniFaceDrawing in aiding users with anime portrait creation, the team conducted a user study. They invited 15 graduate students to draw digital freehand anime-style portraits using the AniFaceDrawing tool, with the option to switch between rough and detailed guidance modes for line art.

The rough mode provided prompts for specific facial parts, while the detailed mode provided prompts for the full-face portrait based on the user's drawing progress. Participants could pin the generated guidance once it matched their expectations and then further refine their input sketch. The tool also allowed participants to select a reference image to generate a color portrait of their input sketch. Afterwards, participants rated the tool on user satisfaction and guidance matching in a survey.

The team noted that the system consistently provided high-quality facial guidance and effectively supported the creation of anime-style portraits, not only by enhancing user sketches but also by generating desirable corresponding colored images. Prof. Fukusato remarks, "Our system could successfully transform the user's rough sketches into high-quality anime portraits. The user study indicated that even novices could make reasonable sketches with the help of the system and end up with high-quality color art drawings."

"Our generative AI framework enables users, regardless of their skill level and experience, to create professional anime portraits even from incomplete drawings. Our approach consistently produces high-quality image generation results throughout the creation process, regardless of the drawing order or how poor the initial sketches are," summarizes Prof. Miyata.

In the long run, these findings can help democratize AI technology and assist users with creative tasks, thereby augmenting their creative capacity without technological barriers.

More information: Zhengyu Huang et al, AniFaceDrawing: Anime Portrait Exploration during Your Sketching, Special Interest Group on Computer Graphics and Interactive Techniques Conference Conference Proceedings (2023). DOI: 10.1145/3588432.3591548