Tennis anyone? Researchers serve up advances in developing motion simulation technology's next generation

Simon Fraser University computing science assistant professor Jason Peng is leading a research team that is raising motion simulation technology to the next level—and using the game of tennis to showcase just how real virtual athletes' moves can be.

Alongside his colleagues, Peng has created a machine learning system capable of learning diverse, simulated tennis skills from broadcast video footage.

Peng's team will present its system and corresponding research paper at the 50th SIGGRAPH conference, a global conference for computer graphics and interactive techniques, in Los Angeles, California, from August 6 to 10.

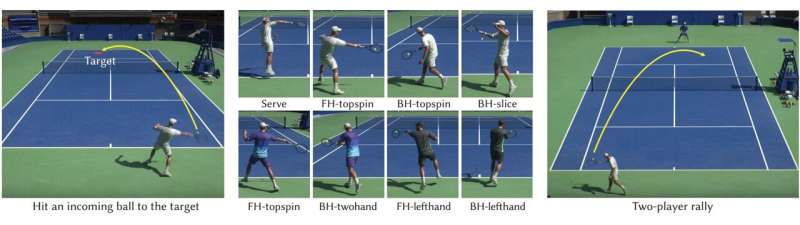

When provided with a large dataset of video clips from professional tennis players, the system learns to perform complex tennis shots and realistically chains together multiple shots into extended two-player rallies, generating long-lasting matches with realistic racket and ball dynamics between two physically simulated characters.

While popular sports video games typically use motion capture technologies to produce high-quality animations, their results are confined to the behaviors captured during specific recording sessions, which restricts the diversity of movements a character can perform.

With the new work by Peng and researchers from Stanford University, the University of Toronto, the Vector Institute and NVIDIA, it may soon become possible for video game designers to have their characters learn to move by mimicking footage of real-life athletes, and to automatically simulate new variations and responsive behaviors on the fly.

The animation system developed by Peng's team allows character movements to be procedurally generated by learning from footage of real athletes playing in sports matches. The system uses a hierarchical control model that combines a low-level imitation policy with a high-level motion planning policy to guide character motions learned from broadcast videos.
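To give a rough feel for what "hierarchical" means here, the toy Python sketch below splits control into two levels: a planner that occasionally picks a target, and a low-level controller that runs every step to reach it. This is only an illustration under heavy assumptions, not the team's system: the real policies are learned from video and drive a full-body physics simulation, whereas here the "body" is a single point on a line, the planner simply aims at the ball, and the low-level controller is a hand-written PD rule. All names (`high_level_planner`, `low_level_controller`, `simulate`) and all gains are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class State:
    position: float  # 1-D racket position (toy stand-in for a full-body pose)
    velocity: float


def high_level_planner(ball_position: float) -> float:
    """Hypothetical planner: pick a target for the racket (here, the ball itself)."""
    return ball_position


def low_level_controller(state: State, target: float,
                         kp: float = 40.0, kd: float = 12.0) -> float:
    """Hypothetical low-level controller: a PD force that tracks the target."""
    return kp * (target - state.position) - kd * state.velocity


def simulate(ball_position: float, steps: int = 400, dt: float = 0.01,
             replan_every: int = 20) -> State:
    """Run the two-level loop on a unit-mass point and return the final state."""
    state = State(position=0.0, velocity=0.0)
    target = state.position
    for step in range(steps):
        # The high level runs at a lower rate than the low level.
        if step % replan_every == 0:
            target = high_level_planner(ball_position)
        force = low_level_controller(state, target)
        # Semi-implicit Euler integration with unit mass.
        state.velocity += force * dt
        state.position += state.velocity * dt
    return state


if __name__ == "__main__":
    final = simulate(ball_position=1.5)
    print(f"final position: {final.position:.4f}")
```

The point of the split is that the slower, higher level only has to decide where to go, while the faster, lower level handles how to get there.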

Given the complexity of components involved in their system, training the simulated player models from real footage of tennis games proved to be a challenging task. The group's initial attempts generated noisy, unstable player movements, making it difficult to simulate life-like behaviors.

To address the low-quality motions extracted from broadcast videos, the team implemented a motion correction system with physics-based imitation that overrides erroneous aspects of the learned motion.

As a result, the animation system can now produce stable controllers for physically simulated tennis players that accurately hit the incoming ball to target positions using a diverse array of strokes (serves, forehands, and backhands), spins (topspins and slices), and playing styles (one- or two-handed backhands, left- and right-handed play).

While the motion learning system is currently limited to tennis, Peng foresees the technology applying to additional sports such as basketball, hockey, and soccer in the future. "We certainly plan to expand beyond tennis and into other sports," Peng says.

"The system itself is very general, can be applied to other sports games and activities," Peng adds. "One of our long-term goals is to find a way to implement the system as a method for robots to learn skills from analyzing videos."

More information: Learning Physically Simulated Tennis Skills from Broadcast Videos, ACM Transactions on Graphics (2023). DOI: 10.1145/3592408, https://xbpeng.github.io/projects/Vid2Player3D/2023_TOG_Vid2Player3D.pdf