Researchers use environmental justice questions to reveal geographic biases in ChatGPT

Virginia Tech researchers have discovered limitations in ChatGPT's capacity to provide location-specific information about environmental justice issues. Their findings, published in the journal Telematics and Informatics, suggest that current generative artificial intelligence (AI) models may carry geographic biases.

ChatGPT is a large language model developed by OpenAI Inc., an artificial intelligence research organization. ChatGPT is designed to understand questions and generate text responses based on requests from users. The technology has a wide range of applications, from content creation and information gathering to data analysis and language translation.

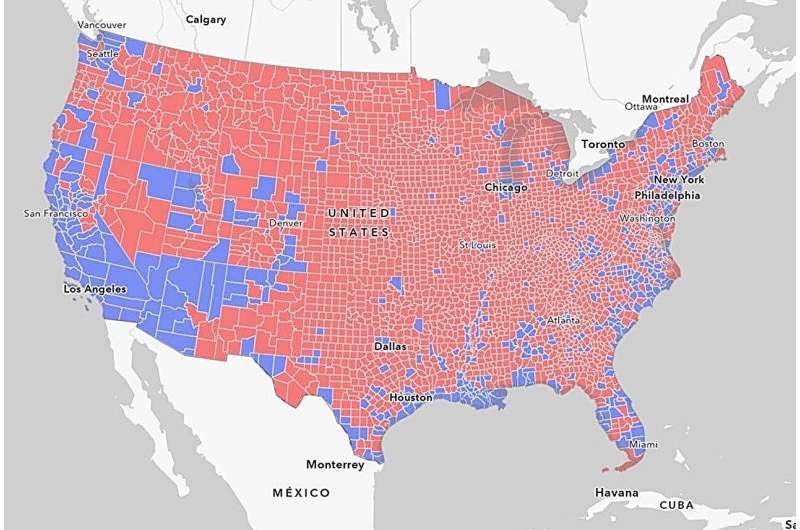

A county-by-county overview

"As a geographer and geospatial data scientist, generative AI is a tool with powerful potential," said Assistant Professor Junghwan Kim of the College of Natural Resources and Environment. "At the same time, we need to investigate the limitations of the technology to ensure that future developers recognize the possibilities of biases. That was the driving motivation of this research."

Using a list of the 3,108 counties in the contiguous United States, the research group prompted ChatGPT, through its interface, to describe the environmental justice issues in each county. The researchers chose environmental justice as a topic to broaden the range of questions typically used to test the performance of generative AI tools. Asking questions county by county also let the researchers compare ChatGPT's responses against sociodemographic factors such as population density and median household income.

Key findings indicate limitations

The counties surveyed varied widely in population, from Los Angeles County, California, with 10,019,635 residents, to Loving County, Texas, with 83. The generative AI tool was able to identify location-specific environmental justice challenges in large, densely populated areas. However, it was limited in its ability to identify and provide contextualized information on local environmental justice issues in smaller, less densely populated counties.

- ChatGPT was able to provide location-specific information about environmental justice issues for just 515 of the 3,108 counties entered, or 17 percent.

- In rural states such as Idaho and New Hampshire, more than 90 percent of the population lived in counties for which ChatGPT could not provide location-specific information.

- In states with larger urban populations, such as Delaware and California, fewer than 1 percent of the population lived in counties for which ChatGPT could not provide location-specific information.
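The figures above rest on two simple aggregations over per-county results: the share of all counties that received a location-specific answer, and, for each state, the share of residents living in counties that did not. The Python sketch below illustrates both calculations. It assumes a hypothetical record type (`CountyResult`) and hypothetical toy counties; it is not the researchers' code, and the toy numbers are not data from the study.

```python
from collections import defaultdict
from typing import NamedTuple


class CountyResult(NamedTuple):
    """Hypothetical schema: one county's outcome from a ChatGPT query."""
    state: str
    county: str
    population: int
    location_specific: bool  # True if the answer named local issues


def share_of_counties_with_specific_info(results: list[CountyResult]) -> float:
    """Percentage of all counties that received a location-specific answer."""
    hits = sum(1 for r in results if r.location_specific)
    return 100.0 * hits / len(results)


def population_share_without_info(results: list[CountyResult]) -> dict[str, float]:
    """Per state: percentage of residents living in counties with no location-specific answer."""
    total: dict[str, int] = defaultdict(int)
    missing: dict[str, int] = defaultdict(int)
    for r in results:
        total[r.state] += r.population
        if not r.location_specific:
            missing[r.state] += r.population
    return {state: 100.0 * missing[state] / total[state] for state in total}


# Hypothetical toy data, for illustration only (not from the study).
toy = [
    CountyResult("State A", "Urban County", 900_000, True),
    CountyResult("State A", "Rural County", 100_000, False),
    CountyResult("State B", "Rural County 1", 40_000, False),
    CountyResult("State B", "Rural County 2", 60_000, False),
]

print(share_of_counties_with_specific_info(toy))  # 25.0
print(population_share_without_info(toy))  # {'State A': 10.0, 'State B': 100.0}

# The study's reported counts: 515 of 3,108 counties.
print(round(100 * 515 / 3108))  # 17
```

Weighting by population rather than counting counties explains how a state can have most of its counties without local answers yet only a small share of residents affected, or the reverse. With the study's reported counts, 515 of 3,108 counties is about 16.6 percent, which rounds to the 17 percent cited above.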

Impacts for AI developers and users

As generative AI emerges as a new gateway to information, testing model outputs for potential biases is an important part of improving programs such as ChatGPT.

"While more study is needed, our findings reveal that geographic biases currently exist in the ChatGPT model," said Kim, who teaches in the Department of Geography. "This is a starting point to investigate how programmers and AI developers might be able to anticipate and mitigate the disparity of information between big and small cities, between urban and rural environments."

Kim has previously published a paper on how ChatGPT understands transportation issues and solutions in the U.S. and Canada. His Smart Cities for Good research group explores the use of geospatial data science methods and technology to solve urban social and environmental challenges.

Enhancing future capabilities of the tools

Assistant Professor Ismini Lourentzou of the College of Engineering, a co-author on the paper, identified three areas for further research on large language models such as ChatGPT:

- Refine localized, contextually grounded knowledge to reduce geographic biases

- Safeguard large language models such as ChatGPT against challenging scenarios, such as ambiguous user instructions or feedback

- Enhance user awareness and policy so that people are better informed of the models' strengths and weaknesses, particularly around sensitive topics

"There are a lot of issues with the reliability and resiliency of large-language models," said Lourentzou, who teaches in the Department of Computer Science and is an affiliate of the Sanghani Center for Artificial Intelligence and Data Analytics. "I hope our research can guide further research on enhancing the capabilities of ChatGPT and other models."

More information: Junghwan Kim et al, Exploring the limitations in how ChatGPT introduces environmental justice issues in the United States: A case study of 3,108 counties, Telematics and Informatics (2023). DOI: 10.1016/j.tele.2023.102085