With a game show as his guide, researcher uses AI to predict deception

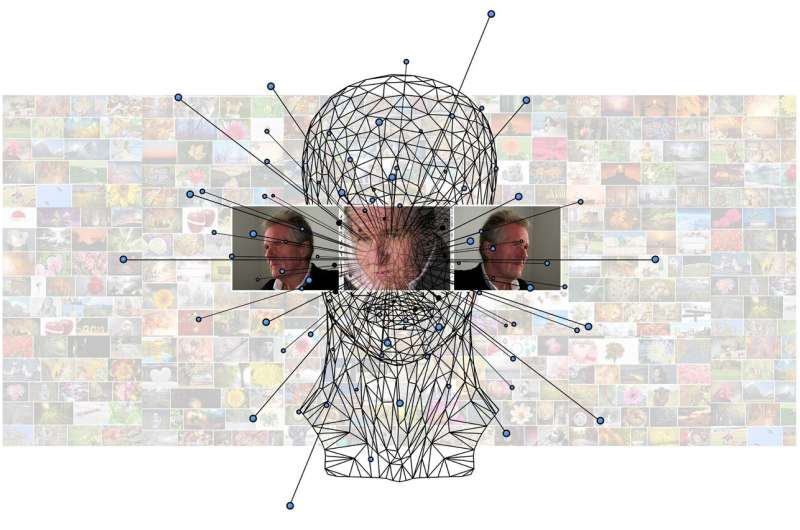

Using data from a 2002 game show, a Virginia Commonwealth University researcher has taught a computer how to tell if you are lying.

"Human behaviors are a rich source of deception and trust cues," said Xunyu Chen, assistant professor in the Department of Information Systems in VCU's School of Business. "Utilizing [artificial intelligence methods], such as machine learning and deep learning, can better exploit these sources of information for decision-making."

Chen and his team describe the work in "Trust and Deception with High Stakes: Evidence from the 'Friend or Foe' Dataset," which appeared in a recent issue of Decision Support Systems. It is one of the first papers to investigate high-stakes deception and trust quantitatively. The team uses a novel dataset derived from the American game show "Friend or Foe?", which is based on the prisoner's dilemma. This classic game-theory model describes how two people can both benefit from cooperating, even though cooperation is hard to coordinate, and how both can lose when they fail to do so.
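The show's incentives can be made concrete with a small payoff function. This is a minimal Python sketch that assumes the rules as the show is commonly described. If both contestants choose "friend," they split the pot. If one chooses "foe" and the other "friend," the foe takes everything. If both choose "foe," neither gets anything. The names `Choice`, `payoff` and `foe_weakly_dominates` and the pot size are hypothetical and serve only as illustration. This is not code from the study.

```python
from enum import Enum


class Choice(Enum):
    FRIEND = "friend"
    FOE = "foe"


def payoff(a: Choice, b: Choice, pot: float) -> tuple[float, float]:
    """Return (A's winnings, B's winnings) under the assumed show rules."""
    if a is Choice.FRIEND and b is Choice.FRIEND:
        return pot / 2, pot / 2  # mutual cooperation: split the pot
    if a is Choice.FOE and b is Choice.FRIEND:
        return pot, 0.0          # A defects while B trusts: A takes everything
    if a is Choice.FRIEND and b is Choice.FOE:
        return 0.0, pot          # B defects while A trusts: B takes everything
    return 0.0, 0.0              # mutual defection: both leave with nothing


def foe_weakly_dominates(pot: float) -> bool:
    """Check that choosing Foe never pays A less than Friend, whatever B picks."""
    return all(
        payoff(Choice.FOE, b, pot)[0] >= payoff(Choice.FRIEND, b, pot)[0]
        for b in Choice
    )


if __name__ == "__main__":
    pot = 10_000.0  # hypothetical pot size, for illustration only
    for a in Choice:
        for b in Choice:
            print(f"{a.value:>6} vs {b.value:<6} -> {payoff(a, b, pot)}")
    print("Foe weakly dominates:", foe_weakly_dominates(pot))
```

Under these assumed rules, a betrayed player and two mutual defectors all walk away with nothing. Choosing "foe" is therefore only weakly better than "friend" in this game. In the textbook prisoner's dilemma, by contrast, the betrayed player does strictly worse than under mutual defection.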

The lab experiments commonly used to study trust and deception are limited in realism and generalizability. Deception in a high-stakes game show demands more cognitive resources for managing one's behavior than deception in low-stakes, fictitious cases. In addition, the large gain or penalty attached to a high-stakes decision may produce stronger emotional and behavioral variation in facial, verbal and movement cues.

"We found multimodal behavioral indicators of deception and trust in high-stakes decision-making scenarios, which could be used to predict deception with high accuracy," Chen said. He calls such a predictor an automated deception detector.

From a scientific and quantitative perspective, this research extends our understanding of deception and trust behaviors that can carry substantial consequences. Researchers and practitioners can use its findings to analyze human behavior in high-stakes scenarios such as presidential debates, business negotiations and court trials, and to predict deception and efforts to protect self-interest.

More information: Xunyu Chen et al., Trust and deception with high stakes: Evidence from the friend or foe dataset, Decision Support Systems (2023). DOI: 10.1016/j.dss.2023.113997