May 13, 2016 report

Neural networks allow classic painting styles to be applied to modern video

(Tech Xplore)—A trio of researchers at the University of Freiburg has taken the science of using neural networks to capture the style of paintings done by human hands and apply it to modern photographs one step further: they have applied it to video. In their paper, which they have uploaded to the preprint server arXiv, Manuel Ruder, Alexey Dosovitskiy and Thomas Brox describe how they adapted photo manipulation techniques to video and how they overcame early problems, and they describe the example videos they produced.

Imagine filming a friend with your phone as he wanders aimlessly down a road on the edge of a quaint village, then using an app to make it look as if he were wandering through Vincent van Gogh's The Starry Night. Instant art? Whether the result deserves that label is open to debate, but the day may be coming when amateur and professional filmmakers alike can do such things with ease, courtesy of work being done by computer scientists.

The science of using neural networks to study works of art is advancing rapidly as computer scientists learn more about what it means for an object to be classified as a work of art. There are a lot of painters out there, but only those with real talent create what most would call true art, something that transcends the boundaries of oil, canvas and brush strokes. Most people are familiar with a few of the most famous paintings by the most famous painters: The Starry Night, the Mona Lisa or Girl with a Pearl Earring, for example. What the great painters all had in common was a distinctive style; it is not that difficult to recognize a Rembrandt, after all. But what intrinsic qualities in a painting distinguish one style from another? That is the question researchers using neural networks have begun to explore as they seek to capture the essence of style in a machine and apply it to something else.

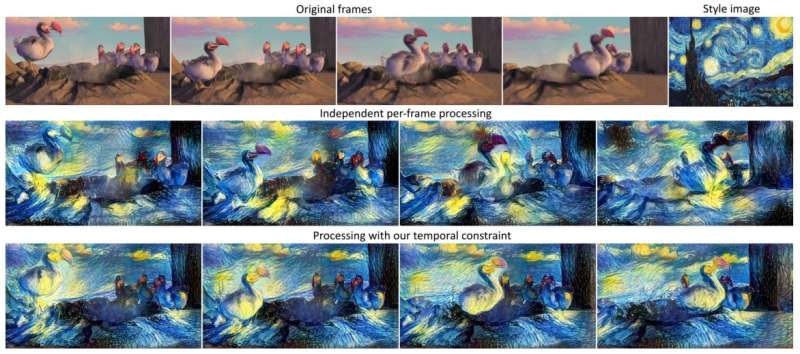

To capture something as elusive as style, researchers use deep neural networks, which describe an image in successive layers of features, from simple edges and colors up to more complex shapes. Feeding a painting into the network lets the software see what is happening with the paint at each of those layers. The software then adjusts a new image step by step until it keeps the content of a photograph while taking on the painting's style. In a sense it comes to "understand" what a painter's style is, and it can apply that style to other images, with eerie results.

In this new effort, the researchers had to overcome an additional problem: once one frame has been stylized and the next frame comes along, the parts that have already been rendered have to match the new parts, or the video can look choppy or flicker. Judging by the sample videos they have created, they appear to have overcome such obstacles.
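
For readers curious what "understanding style" can mean in practice: in the widely used still-image approach of Gatys and colleagues, which this line of work builds on, style is summarized by Gram matrices, the correlations between a network layer's feature channels, summed over every position in the image. The Python sketch below is a simplified illustration, not the Freiburg team's code. The tiny hand-written feature maps stand in for real network activations, and the names `gram_matrix` and `style_loss`, as well as the normalization, are hypothetical choices made for clarity.

```python
from typing import List

# Hypothetical stand-in for one CNN layer's output: a list of channels,
# each a flat list with one activation per image position.
FeatureMap = List[List[float]]

def gram_matrix(features: FeatureMap) -> List[List[float]]:
    """Correlation between every pair of channels, averaged over positions.

    Summing over positions discards where things are in the image, keeping
    statistics such as which colours and stroke patterns occur together.
    """
    n_positions = len(features[0])
    return [
        [sum(a * b for a, b in zip(ci, cj)) / n_positions for cj in features]
        for ci in features
    ]

def style_loss(generated: FeatureMap, painting: FeatureMap) -> float:
    """Mean squared difference between the two images' Gram matrices."""
    g, p = gram_matrix(generated), gram_matrix(painting)
    n = len(g)
    return sum((g[i][j] - p[i][j]) ** 2 for i in range(n) for j in range(n)) / (n * n)

# Toy usage: two channels, three positions each.
painting = [[1.0, 0.0, 2.0], [0.0, 1.0, 1.0]]
shuffled = [[2.0, 1.0, 0.0], [1.0, 0.0, 1.0]]  # same values, positions reordered
flat = [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]

print(style_loss(shuffled, painting))  # 0.0: rearranging positions keeps the "style"
print(style_loss(flat, painting))      # positive: different feature statistics
```

Because positions are summed away, shuffling where features appear leaves the Gram matrix unchanged, which is why this measure tracks texture and brushwork rather than layout. In such methods a separate content term keeps the photograph's objects in place while the style term is minimized.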

More information: Artistic style transfer for videos, arXiv:1604.08610 [cs.CV] arxiv.org/abs/1604.08610

Abstract

In the past, manually re-drawing an image in a certain artistic style required a professional artist and a long time. Doing this for a video sequence single-handed was beyond imagination. Nowadays computers provide new possibilities. We present an approach that transfers the style from one image (for example, a painting) to a whole video sequence. We make use of recent advances in style transfer in still images and propose new initializations and loss functions applicable to videos. This allows us to generate consistent and stable stylized video sequences, even in cases with large motion and strong occlusion. We show that the proposed method clearly outperforms simpler baselines both qualitatively and quantitatively.
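
The "new initializations and loss functions" mentioned in the abstract target the flicker problem described earlier. A rough sketch of the general idea, under simplifying assumptions: the optical flow between frames is taken as given (a hypothetical per-pixel list of offsets pointing from each pixel of the new frame back to where it came from in the previous frame), frames are tiny grayscale grids, and warping uses nearest-neighbour rounding. The previous stylized frame is moved along the flow; the warped result can serve as the starting point for stylizing the next frame, and a masked penalty discourages the new frame from drifting away from it except in regions that were hidden before. The function names are hypothetical, and the authors' actual formulation is in arXiv:1604.08610.

```python
from typing import List, Tuple

Image = List[List[float]]               # grayscale frame, indexed image[y][x]
Flow = List[List[Tuple[float, float]]]  # hypothetical backward flow: (dx, dy) per pixel

def warp(prev: Image, flow: Flow) -> Image:
    """Move the previous stylized frame along the optical flow.

    Nearest-neighbour backward warping: pixel (x, y) of the new frame takes
    its value from (x + dx, y + dy) in the previous frame, clamped to the border.
    """
    h, w = len(prev), len(prev[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            dx, dy = flow[y][x]
            sx = min(max(round(x + dx), 0), w - 1)
            sy = min(max(round(y + dy), 0), h - 1)
            row.append(prev[sy][sx])
        out.append(row)
    return out

def temporal_loss(current: Image, warped_prev: Image, mask: Image) -> float:
    """Masked mean squared difference to the warped previous frame.

    mask[y][x] is 1.0 where the pixel is visible in both frames and 0.0 where
    it was just revealed (or the flow is unreliable), so new regions are free
    to take whatever the style demands.
    """
    h, w = len(current), len(current[0])
    total = sum(
        mask[y][x] * (current[y][x] - warped_prev[y][x]) ** 2
        for y in range(h) for x in range(w)
    )
    return total / (h * w)

# Toy usage: a one-row frame whose content moves one pixel to the right.
prev_stylized = [[0.0, 1.0, 2.0]]
flow = [[(-1.0, 0.0), (-1.0, 0.0), (-1.0, 0.0)]]
warped = warp(prev_stylized, flow)  # also a natural initialization for the next frame
mask = [[0.0, 1.0, 1.0]]            # leftmost pixel is newly revealed
current = [[5.0, 0.0, 1.0]]         # agrees with warped wherever mask is 1

print(warped)                                  # [[0.0, 0.0, 1.0]]
print(temporal_loss(current, warped, mask))    # 0.0
```

Starting from the warped previous frame means most of the image already matches before any optimization, and the mask keeps the consistency penalty from forcing stale content into areas that only just came into view.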

© 2016 Tech Xplore