April 5, 2017 weblog

Monet's worlds translated into realistic photos in Berkeley effort

(Tech Xplore)—This week there are a number of interesting words and phrases to think about that lead us to what researchers in California are exploring. Welcome, first off, to a post called prosthetic knowledge.

Meaning: Information a person does not know but can access as needed using technology.

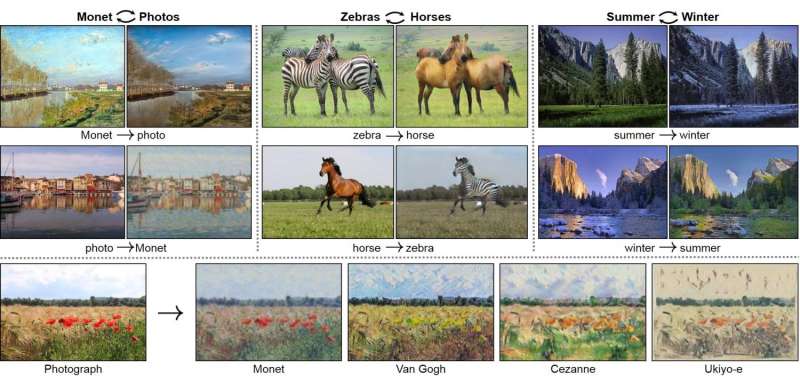

The entry under that is CycleGAN. "Berkeley Artificial Intelligence Research offers powerful image to image translation from one class or style to another."

The examples include converting Monet paintings into photorealistic scenes, changing horses to zebras and vice versa, turning scenes of summer to winter and vice versa, Renoir style transfer, various other style transfers and photo depth-of-field alteration.

Wrote Adam Estes, senior editor at Gizmodo: "The Berkeley team built a new type of deep learning software, dubbed CycleGAN, that can convert impressionist paintings into photorealistic images. With a few tweaks, the tool can also turn horses into zebras, apples into oranges, and winter into summer."

The research team have a paper on the topic now on arXiv. "Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks" is by Jun-Yan Zhu, Taesung Park, Phillip Isola and Alexei Efros, all from Berkeley AI Research (BAIR) laboratory, UC Berkeley.

We hope Monet would have appreciated their attention to his works had he been around in these heady times of technology. Painters like Monet turned away from representational exactitude and instead held a mirror to the essence of things, using color and brush stroke techniques to convey a sense of pulse and movement, whether showing a river or clouds or flowers.

Steve Dent, Engadget, wrote, "Thanks to researchers from UC Berkeley, you don't need to go to Normandy and wait for the perfect light. Using 'image style transfer' they converted his impressionist paintings into a more realistic photo style, the exact opposite of what apps like Prisma do."

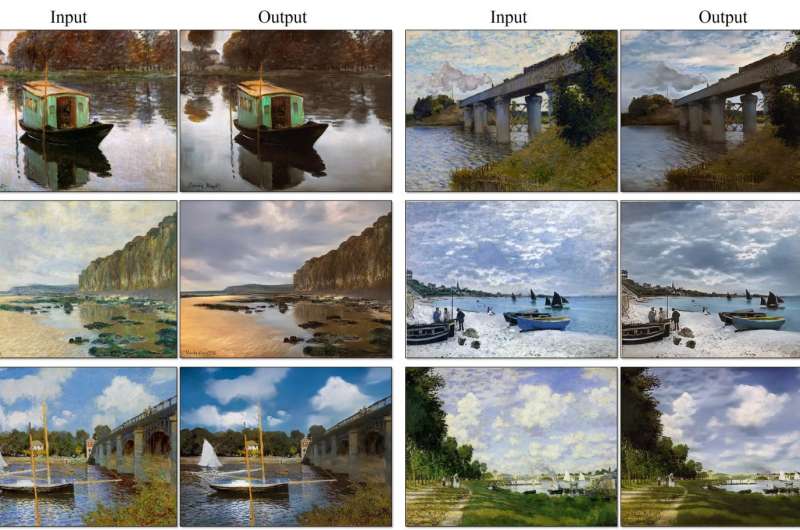

Still, using the Monet painting as an example, how would they know exactly what a photo of the scene Monet painted would look like? They would not; the researchers used unpaired data. The authors wrote in their paper that they were exploring how to capture the special characteristics of one image collection and work out how those characteristics could be translated into the other image collection, all in the absence of any paired training examples.

Quoted in Engadget, the team said, "we have knowledge of the set of Monet paintings and of the set of landscape photographs. We can reason about the stylistic differences between those two sets, and thereby imagine what a scene might look like if we were to translate it from one set into another."

Dent explained that they trained "adversarial networks" using photos and refined them by having both people and machines check the quality of the results.

Estes in Gizmodo shed light on what the researchers did: "The researchers trained CycleGAN with a set of the master impressionist's paintings as well as a random batch of landscape photos pulled from Flickr, and then applied algorithms to mimic the style in either direction. The end result looks something like a photo of the landscapes Monet painted, even though we've never seen a photo of that exact landscape. Likewise, the software can take a random landscape photo and make it look like a Monet painting."
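The "cycle-consistent" part of the paper's title refers to a round-trip check: translate a painting into a photo and back again, and you should land close to the painting you started with. The sketch below illustrates that idea only. It is a toy under stated assumptions, not the Berkeley team's code: the function names are hypothetical, the "translators" are simple arithmetic stand-ins for what would be neural networks in CycleGAN, and the adversarial part of the training is left out entirely.

```python
import numpy as np

def cycle_consistency_loss(G, F, x_batch, y_batch):
    """Mean absolute (L1) error after a round trip in each direction."""
    forward = np.mean(np.abs(F(G(x_batch)) - x_batch))   # painting -> photo -> painting
    backward = np.mean(np.abs(G(F(y_batch)) - y_batch))  # photo -> painting -> photo
    return forward + backward

# Hypothetical stand-ins for the two translators (real ones are learned networks).
def paint_to_photo(img):
    return img * 0.5 + 0.1

def photo_to_paint(img):
    return (img - 0.1) / 0.5   # exact inverse of paint_to_photo

def bad_photo_to_paint(img):
    return img                 # ignores the translation, so round trips fail

rng = np.random.default_rng(0)
paintings = rng.random((4, 8, 8, 3))  # 4 fake "paintings"
photos = rng.random((6, 8, 8, 3))     # 6 fake "photos": unpaired, counts differ

good = cycle_consistency_loss(paint_to_photo, photo_to_paint, paintings, photos)
bad = cycle_consistency_loss(paint_to_photo, bad_photo_to_paint, paintings, photos)
print(f"consistent pair: {good:.6f}, inconsistent pair: {bad:.6f}")
```

Note that the two batches never need to line up image for image. The loss only compares each image with its own round-trip reconstruction, which is why no matching photo of Monet's actual scene is required.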

No, their effort did not yield perfect results.

Dent in Engadget: "The team adds that its methods still aren't as good as using paired training data either—ie, photos that exactly match paintings. Nevertheless, left on its own accord, the AI is surprisingly good at transferring one image style to another."

The GitHub page describes the CycleGAN as "Software that generates photos from paintings, turns horses into zebras, performs style transfer, and more (from UC Berkeley)." Also on that page is a generous helping of input and output images so that you can see their work for yourself.

The prerequisites are Linux or OSX and an NVIDIA GPU with CUDA and CuDNN.

More information: — junyanz.github.io/CycleGAN/

— Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks, arXiv:1703.10593 [cs.CV] arxiv.org/abs/1703.10593

© 2017 Tech Xplore