October 18, 2018 feature

SLAP: Simultaneous Localization and Planning for autonomous robots

Researchers at NASA Jet Propulsion Laboratory (JPL), Texas A&M University, and Carnegie Mellon University recently carried out a research project aimed at enabling simultaneous localization and planning (SLAP) capabilities in autonomous robots. Their paper, published in IEEE Transactions on Robotics, presents a dynamic replanning scheme in belief space, which could be particularly useful for robots operating under uncertainty, such as in changing environments.

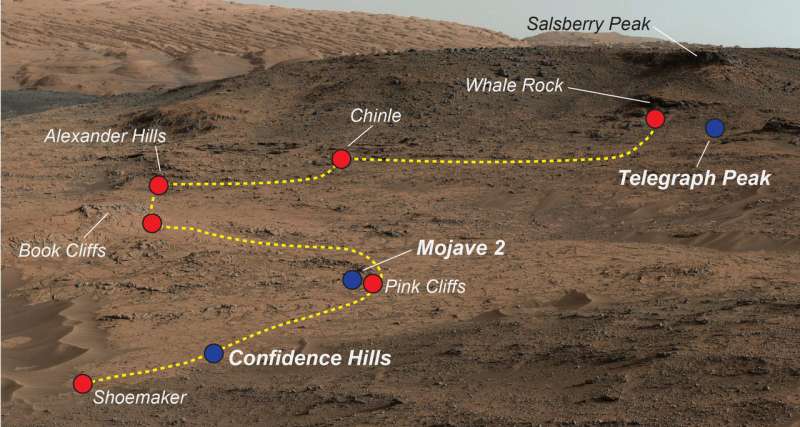

"Robots operating in the real world need to deal with uncertainty," Sung Kyun Kim, one of the researchers who carried out the study, told TechXplore. "For example, a Mars rover is to navigate to scientific target locations, but it also needs to avoid collision with obstacles. Thus, both accurate localization and cost-efficient path planning are essential capabilities."

SLAP is a key ability for autonomous robots that operate under uncertainty, allowing them to navigate their surroundings, avoid obstacles, and plan paths to target locations. A robot's sequential decision-making process under uncertainty can be formulated as a partially observable Markov decision process (POMDP), which needs to be solved continuously online. Solving POMDPs is notoriously hard, however: the robot must plan over belief states (probability distributions over its possible states), and the number of possible action-observation histories grows exponentially with the planning horizon.
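
The belief state at the heart of a POMDP is updated with a Bayes filter every time the robot acts and then observes. The sketch below illustrates that update for a discrete toy problem; it is not the paper's model. The three-cell corridor, the action name `right`, the transition table `T`, and the observation table `O` are all hypothetical.

```python
# Minimal discrete POMDP belief update (Bayes filter).
# Hypothetical toy model: a robot in one of 3 corridor cells, a "right" action,
# and a noisy sensor that reports "wall" (0) or "door" (1).

def belief_update(belief, action, obs, T, O):
    """Return b'(s') proportional to O[action][s'][obs] * sum_s T[action][s][s'] * b(s)."""
    n = len(belief)
    # Prediction step: push the belief through the transition model.
    predicted = [sum(T[action][s][s2] * belief[s] for s in range(n)) for s2 in range(n)]
    # Correction step: weight each predicted state by the observation likelihood.
    unnormalized = [O[action][s2][obs] * predicted[s2] for s2 in range(n)]
    total = sum(unnormalized)
    if total == 0:
        raise ValueError("observation has zero probability under the model")
    return [p / total for p in unnormalized]

# T[a][s][s']: "right" moves one cell right with prob 0.8 and stays put with prob 0.2.
T = {"right": [[0.2, 0.8, 0.0],
               [0.0, 0.2, 0.8],
               [0.0, 0.0, 1.0]]}
# O[a][s'][o]: a door is at cell 2, so "door" is likely only there.
O = {"right": [[0.9, 0.1],
               [0.9, 0.1],
               [0.2, 0.8]]}

b0 = [1.0, 0.0, 0.0]                       # robot is certain it starts in cell 0
b1 = belief_update(b0, "right", 0, T, O)   # reads "wall": b1 is approx. [0.2, 0.8, 0.0]
b2 = belief_update(b1, "right", 1, T, O)   # reads "door": b2[2] is approx. 0.93
```

Each call needs only the previous belief, not the full action-observation history; the difficulty lies in planning over all the beliefs that future actions and observations could produce.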

"We came up with two main ideas to solve SLAP problems," Kim explained. "One is to utilize feedback controllers to make a belief state reachable. This can effectively break the 'curse of history,' which helps us to solve larger problems. The other is to dynamically replan and improve the decision at run time, enhancing the solution quality and robustness. Dynamic replanning is especially beneficial when there are system modeling errors, dynamic environment changes, or intermittent sensor/actuator failures."

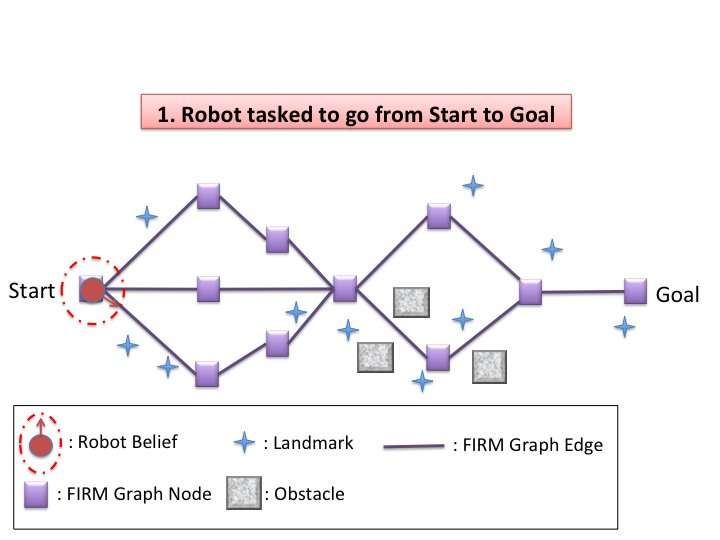

Kim and his colleagues devised a dynamic replanning scheme in belief space that allows robots to effectively navigate the space around them in situations of uncertainty, such as in changing environments or when presented with unexpected obstacles. Their algorithm has two phases, offline and online.

"In the offline phase, our algorithm constructs a sparse graph in belief space with a feedback controller for each node and then solves the coarse global policy (deciding what action to take at the current belief state) on the graph," Kim said. "In the online phase, dynamic replanning is conducted every time the belief state is updated. The algorithm locally evaluates each action of moving to a nearby node on the graph and selects the one with the minimum cost. After executing the selected action and updating the current belief, it repeats the replanning process."
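
The two phases Kim describes can be sketched on a tiny graph. This is a simplified illustration under stated assumptions, not the authors' implementation. The node names, the costs in the hypothetical `EDGE_COST` table, and the helpers `offline_cost_to_go` and `online_select` are invented here. Edge costs are also treated as deterministic, so the offline phase reduces to Dijkstra's algorithm, whereas the paper solves a stochastic problem over belief nodes.

```python
import heapq

# Hypothetical sparse belief graph: each node stands for a belief that a
# node-specific feedback controller can steer the robot toward.
# EDGE_COST[u][v] is an assumed cost of moving from node u's belief to node v's.
EDGE_COST = {
    "start": {"a": 4.0, "b": 2.0},
    "a": {"goal": 1.0},
    "b": {"a": 1.0, "goal": 5.0},
    "goal": {},
}

def offline_cost_to_go(edges, goal):
    """Offline phase: coarse global policy as cost-to-go (Dijkstra on reversed edges)."""
    reverse = {n: [] for n in edges}
    for u, nbrs in edges.items():
        for v, c in nbrs.items():
            reverse[v].append((u, c))
    cost = {n: float("inf") for n in edges}
    cost[goal] = 0.0
    heap = [(0.0, goal)]
    while heap:
        d, v = heapq.heappop(heap)
        if d > cost[v]:
            continue  # stale heap entry
        for u, c in reverse[v]:
            if d + c < cost[u]:
                cost[u] = d + c
                heapq.heappush(heap, (cost[u], u))
    return cost

def online_select(local_costs, cost_to_go):
    """Online phase: pick the neighbor minimizing local cost plus global cost-to-go."""
    return min(local_costs, key=lambda v: local_costs[v] + cost_to_go[v])

J = offline_cost_to_go(EDGE_COST, "goal")  # {'start': 4.0, 'a': 1.0, 'b': 2.0, 'goal': 0.0}

# At node "start", local costs are re-evaluated from the current belief.
nominal_choice = online_select({"a": 4.0, "b": 2.0}, J)    # "b": 2 + 2 < 4 + 1
# Suppose an unexpected obstacle raises the local cost of reaching "b".
replanned_choice = online_select({"a": 4.0, "b": 4.5}, J)  # "a": 4 + 1 < 4.5 + 2
```

With the nominal local costs the robot heads for `b`, as the offline policy suggests. When the local cost of that move rises at run time, the same selection rule switches to `a` without recomputing the global policy.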

The scheme devised by Kim and his colleagues exploits the behavior of feedback controllers in belief space: each controller acts as a funnel, so that belief states near a graph node can converge to that controller's target belief. This tackles a key difficulty in solving POMDPs, namely a complexity that grows exponentially with the planning horizon.
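
The funnel effect can be seen in a one-dimensional linear-Gaussian example. Here a Kalman filter tracks a Gaussian belief and a proportional feedback controller steers the estimated mean toward a target. This is an assumption-laden sketch, not the controller used in the paper. The dynamics, the noise variances `Q` and `R`, and the gain `GAIN` are hypothetical. Measurements are also assumed to match their predictions (zero innovation), so the code follows the nominal belief rather than a noisy realization.

```python
# Hypothetical 1-D robot: x_{k+1} = x_k + u_k + w_k, z_k = x_k + v_k.
# The belief is Gaussian, N(mean, var), tracked by a Kalman filter.

Q = 0.01    # assumed process-noise variance
R = 0.25    # assumed measurement-noise variance
GAIN = 0.5  # assumed proportional feedback gain toward the target mean

def belief_step(mean, var, target):
    """One closed-loop step in belief space under nominal (zero-innovation) measurements."""
    u = -GAIN * (mean - target)  # feedback controller acts on the estimate
    mean = mean + u              # predicted mean; zero innovation leaves it unchanged
    var = var + Q                # predicted variance
    k = var / (var + R)          # Kalman gain
    var = (1.0 - k) * var        # corrected variance
    return mean, var

def run(mean, var, target, steps=100):
    for _ in range(steps):
        mean, var = belief_step(mean, var, target)
    return mean, var

# Two very different starting beliefs, same controller target.
b1 = run(mean=-3.0, var=4.0, target=1.0)
b2 = run(mean=2.5, var=0.05, target=1.0)
```

Both beliefs end up with mean 1.0 and the same steady-state variance (about 0.045 for these numbers). This is the property that lets a single graph node stand in for every belief inside its funnel.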

Once the robot's current belief has converged to a known belief, there is no need to consider the actions and observations that led up to it. This improves scalability, allowing robots to solve larger and more complex navigation problems.
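
A quick count shows why discarding the history matters. The function below counts distinct action-observation sequences for a hypothetical problem with 4 actions and 5 observations. It illustrates growth rates only and is not a figure from the paper.

```python
def num_histories(num_actions, num_observations, horizon):
    """Number of distinct action-observation sequences of length `horizon`."""
    return (num_actions * num_observations) ** horizon

# Hypothetical small problem: 4 actions, 5 observations.
for h in (1, 5, 10):
    print(h, num_histories(4, 5, h))
# prints:
# 1 20
# 5 3200000
# 10 10240000000000
```

A roadmap with a fixed set of belief nodes, by contrast, needs only one decision per node, however the robot arrived there.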

"During dynamic replanning, the proposed method bootstraps the local optimization with the (coarse) global policy," Kim said. "This means that it can make a non-myopic decision, unlike other online planners with a finite receding horizon. In short, this method can adapt to dynamic changes in the environment and robustly operate despite an unmodelled perturbation or errors, while making cost-efficient plans in the global sense."
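
The contrast between a myopic planner and one bootstrapped with a global policy can be illustrated with two candidate moves. The action names and costs below are hypothetical. The cost-to-go values stand in for the coarse offline policy, and the point is only that adding them as a terminal cost can flip the decision.

```python
# Hypothetical candidate actions from the current belief:
# (immediate cost of the local move, coarse cost-to-go of the node it reaches).
CANDIDATES = {
    "toward_node_A": (1.0, 9.0),  # cheap now, but leads into a long detour
    "toward_node_B": (3.0, 2.0),  # costlier now, much closer to the goal
}

def myopic_choice(candidates):
    """Horizon-1 planner: looks only at the immediate cost."""
    return min(candidates, key=lambda a: candidates[a][0])

def bootstrapped_choice(candidates):
    """Adds the global cost-to-go as a terminal cost, making the choice non-myopic."""
    return min(candidates, key=lambda a: candidates[a][0] + candidates[a][1])

print(myopic_choice(CANDIDATES))        # toward_node_A
print(bootstrapped_choice(CANDIDATES))  # toward_node_B
```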

By eliminating unnecessary stabilization steps, in which the robot drives its belief all the way to a graph node before making its next decision, the method devised by Kim and his colleagues outperformed the Feedback-based Information RoadMap (FIRM), a state-of-the-art technique for solving POMDPs. In the future, this dynamic replanning scheme in belief space could enable better SLAP capabilities in robots operating under varying degrees of uncertainty.

"We now plan to apply our method to real-world problems," Kim said. "A possible application is a prototype Mars helicopter-rover navigation and coordination for planetary exploration, a project led by Dr. Ali-akbar Agha-mohammadi at JPL. A helicopter flying over the terrain could provide a rough map so that a coarse global policy can be obtained in the offline phase. Subsequently, a rover would dynamically replan in the online phase, to accomplish safe and cost-efficient navigation missions."

More information: Ali-akbar Agha-mohammadi et al. SLAP: Simultaneous Localization and Planning Under Uncertainty via Dynamic Replanning in Belief Space, IEEE Transactions on Robotics (2018). DOI: 10.1109/TRO.2018.2838556

www-robotics.jpl.nasa.gov/task … kID=304&tdaID=700108

© 2018 Tech Xplore