December 7, 2018 report

AlphaZero AI system able to teach itself how to play games, play at highest levels

A team of researchers with the DeepMind group and University College London, both in the U.K., has developed an AI system capable of teaching itself how to play and master three difficult board games. In their paper published in the journal Science, the group describes the new system and explains why they believe it represents another big step forward in AI systems development. Murray Campbell with the IBM T.J. Watson Research Center in the U.S. offers a Perspective piece on the team's work in the same journal issue.

It has been over 20 years since a supercomputer known as Deep Blue beat world chess champion Garry Kasparov, showing the world just how far AI computing had come. In the years since, computers have grown ever smarter and now beat humans at such games as chess, shogi and Go. But such systems have each been tuned to excel at just one game. In this new effort, the researchers have created an AI system that is not only good at more than one game, but also gains that expertise on its own.

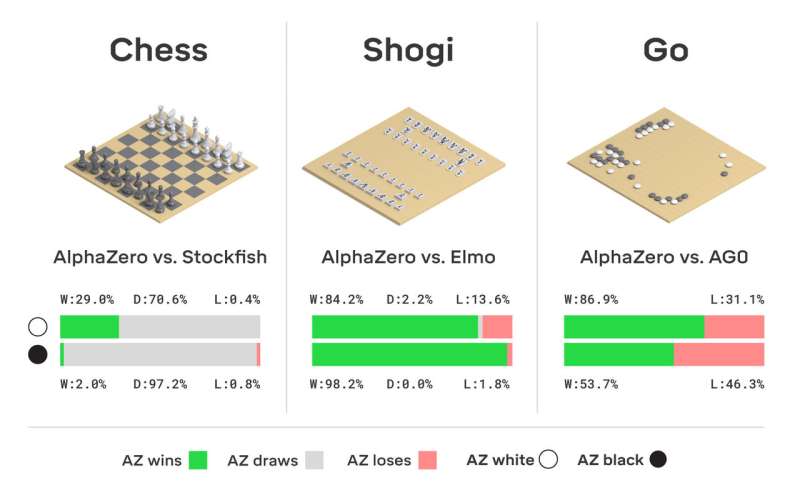

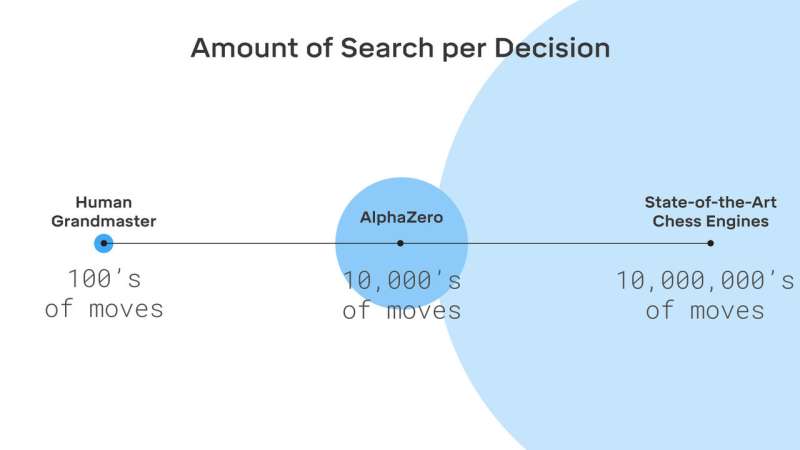

The new system, called AlphaZero, is a reinforcement learning system, which, as its name implies, means it learns by repeatedly playing a game and learning from its experiences. This is, of course, very similar to how humans learn. A basic set of rules is laid out and then the computer plays the game against itself. It does not even need other opponents. It plays itself repeatedly, noting which moves tend to lead to wins and which tend to lead to losses. Over time, it improves. Eventually, it becomes so good it can beat not just humans, but other dedicated board game AI systems. The system also uses a search method known as Monte Carlo tree search. Combining the two technologies allows the system to teach itself how to get better at game playing. The researchers gave their test system a lot of power, as well, by employing 5,000 tensor processing units, which puts it on a par with large supercomputers.
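The self-play idea can be illustrated with a toy sketch. This is not AlphaZero's method, which pairs a deep neural network with Monte Carlo tree search. It is a minimal tabular example, assuming a simple stone-taking game (players alternately remove one to three stones, and whoever takes the last stone wins). The names `self_play_train`, `best_move` and `legal_moves`, and all parameter values, are hypothetical choices made for illustration.

```python
import random
from collections import defaultdict

MAX_TAKE = 3  # assumed toy rule: a player removes 1, 2 or 3 stones per turn


def legal_moves(stones):
    """All moves allowed with this many stones left."""
    return list(range(1, min(MAX_TAKE, stones) + 1))


def best_move(q, stones):
    """Greedy choice: the legal move with the highest learned value."""
    return max(legal_moves(stones), key=lambda m: q[(stones, m)])


def self_play_train(start=10, games=5000, epsilon=0.2, seed=0):
    """Learn move values by playing the game against itself."""
    rng = random.Random(seed)
    q = defaultdict(float)     # (stones, move) -> average outcome in [-1, 1]
    visits = defaultdict(int)  # how often each (stones, move) was played
    for _ in range(games):
        stones, player = start, 0
        history = {0: [], 1: []}  # moves made by each side in this game
        while stones > 0:
            if rng.random() < epsilon:
                move = rng.choice(legal_moves(stones))  # explore
            else:
                move = best_move(q, stones)             # exploit
            history[player].append((stones, move))
            stones -= move
            winner = player  # after the loop: whoever took the last stone
            player = 1 - player
        # Credit every move with the final result for the side that made it.
        for p in (0, 1):
            outcome = 1.0 if p == winner else -1.0
            for key in history[p]:
                visits[key] += 1
                q[key] += (outcome - q[key]) / visits[key]  # running mean
    return q
```

Calling `q = self_play_train()` and then `best_move(q, stones)` returns the move the table currently rates highest for a given pile. With enough games, a table like this tends to favor leaving the opponent a multiple of four stones, which is the known winning strategy for this particular game. Both sides share one table, so every game improves the same player, which is the sense in which the system learns from playing itself.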

Thus far, AlphaZero has mastered chess, shogi and Go—games that are particularly well suited to AI applications. Campbell suggests the next step for such systems might be to branch out into games such as poker, or even popular video games.

More information: David Silver et al. A general reinforcement learning algorithm that masters chess, shogi, and Go through self-play, Science (2018). DOI: 10.1126/science.aar6404

© 2018 Science X Network