February 25, 2019 feature

A new method to generate gestures for different social robots

Social robots are designed to communicate with human beings naturally, assisting them with a variety of tasks. The effective use of gestures could greatly enhance robot-human interactions, allowing robots to communicate both verbally and non-verbally.

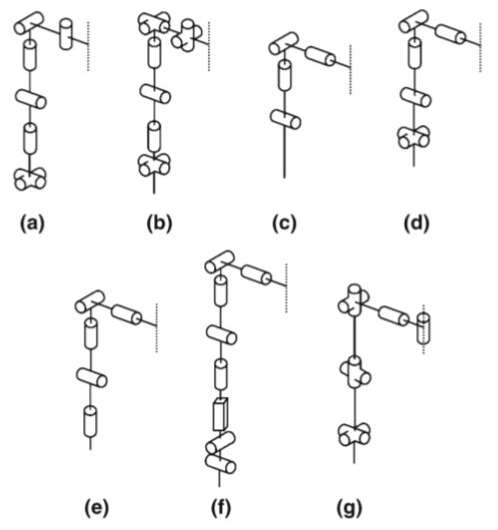

The design of most social robots is inspired by the human body, as this makes it easier to replicate human-like gestures and behaviors. However, different robots can have different morphologies, which allow them to best tackle the tasks that they are designed to complete.

Researchers at Vrije Universiteit Brussel, in Belgium, have recently introduced a new approach, based on a generic gesture method, for studying the influence of different design aspects of social robots. Their paper, published in Springer's International Journal of Social Robotics, presents a framework that rapidly generates gestures matching a robot's specific configuration.

"In this paper, we propose a novel methodology to study the influence of different design aspects based on a generic gesture method," the researchers wrote in their paper. "The gesture method was developed to overcome the difficulties in transferring gestures to different robots, providing a solution for the correspondence problem."

The method devised by this team of researchers could overcome difficulties in transferring gestures to robots of different shapes and configurations. Users can input a robot's morphological information and the tool will use this data to calculate the gestures for that robot.

"A small set of morphological information, inputted by the user, is used to evaluate the generic framework of the software at runtime," the researchers explained. "Therefore, gestures can be calculated quickly and easily for a desired robot configuration. By generating a set of gestures for different morphologies, the importance of specific joints and their influence on a series of postures and gestures can be studied."

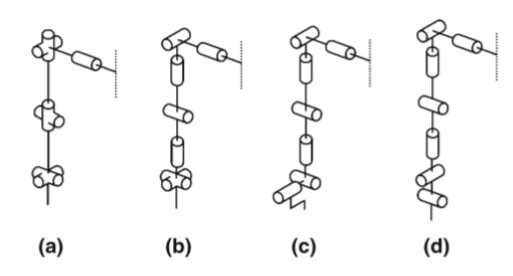

To ensure that their method would be applicable to different types of robots, the researchers drew inspiration from a human base model. This model consists of different chains and blocks, which are used to model the various rotational possibilities of humans. Using the human base model as a reference, the researchers assigned a reference frame to each joint block and built the general framework behind their method on these frames.

"To generate gestures for a certain robot model, the method uses the Denavit-Hartenberg (DH) parameters of the configuration as input, whereby the different joints of the robot are grouped into chains and blocks of the human base model," the researchers explained in their paper. "At runtime, the generic framework of the method is evaluated using this information, and as such, adapted to the robot under consideration."

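To make the role of the DH parameters more concrete, here is a minimal sketch of how a chain of joints described by standard Denavit-Hartenberg parameters maps to a hand position. It is not the researchers' software: the function names, the `left_arm` chain and its link lengths are hypothetical, and the example assumes the classic DH convention (theta, d, a, alpha) for a simple two-joint planar arm.

```python
import math

def dh_transform(theta, d, a, alpha):
    """Homogeneous transform for one joint in the standard DH convention."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [
        [ct, -st * ca,  st * sa, a * ct],
        [st,  ct * ca, -ct * sa, a * st],
        [0.0,      sa,       ca,      d],
        [0.0,     0.0,      0.0,    1.0],
    ]

def matmul(A, B):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def chain_end_position(dh_rows):
    """Compose the joint transforms of one chain and return the tip position."""
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    for theta, d, a, alpha in dh_rows:
        T = matmul(T, dh_transform(theta, d, a, alpha))
    return (T[0][3], T[1][3], T[2][3])

# Hypothetical robot description: joints grouped into a named chain,
# each row holding (theta, d, a, alpha) for one joint.
robot = {
    "left_arm": [
        (math.pi / 2, 0.0, 1.0, 0.0),   # shoulder rotated 90 degrees, link length 1
        (-math.pi / 2, 0.0, 1.0, 0.0),  # elbow rotated -90 degrees, link length 1
    ],
}

tip = chain_end_position(robot["left_arm"])
```

Changing the rows of the chain, for example by removing a joint or altering a link length, changes the reachable postures, which is the kind of morphology-dependent effect the method is meant to expose.
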
Because different features matter for different kinds of gestures, the method is designed to work in two modes: block mode and end-effector mode. Block mode is used to calculate gestures such as emotional expressions, where the overall arm placement is crucial. End-effector mode, on the other hand, calculates gestures in which the position of the end effector is important, such as object manipulation or pointing.
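
As a rough illustration of what a position-driven calculation involves, the sketch below solves the textbook inverse kinematics problem for a two-link planar arm: given a target point for the hand, it returns joint angles that reach it. This is an assumption-laden toy example, not the algorithm used in the paper; the function names and link lengths are hypothetical.

```python
import math

def two_link_ik(x, y, l1, l2):
    """Return (shoulder, elbow) angles placing the tip at (x, y), or None if unreachable."""
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(c2) > 1.0 + 1e-9:
        return None  # target lies outside the arm's reach
    c2 = max(-1.0, min(1.0, c2))  # guard against rounding just past +/-1
    elbow = math.acos(c2)         # one of the two mirror-image solutions
    shoulder = math.atan2(y, x) - math.atan2(l2 * math.sin(elbow),
                                             l1 + l2 * math.cos(elbow))
    return shoulder, elbow

def two_link_fk(shoulder, elbow, l1, l2):
    """Forward kinematics, used here only to check the inverse solution."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y

angles = two_link_ik(1.0, 1.0, 1.0, 1.0)   # point at (1, 1) with unit-length links
reached = two_link_fk(angles[0], angles[1], 1.0, 1.0)
too_far = two_link_ik(3.0, 0.0, 1.0, 1.0)  # farther than the arm is long
```

A posture-driven calculation would instead target the orientation of each body segment, so the same arm can need different joint angles depending on which kind of gesture is requested.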

"The gesture method proves its usefulness in the design process of social robots by providing an impression of the necessary amount of complexity needed for a specified task, and can give interesting insights in the required joint angle range," the researchers said.

In their study, the researchers applied their method to the virtual model of a robot called Probo. They used this example to illustrate how their method could help to study the collocation of different joints and joint angle ranges in gestures. In the future, their approach could aid the development of social robots that can perform natural gestures suited to their morphology and application.

More information: Greet Van de Perre et al. Studying Design Aspects for Social Robots Using a Generic Gesture Method, International Journal of Social Robotics (2019). DOI: 10.1007/s12369-019-00518-x

© 2019 Science X Network