March 22, 2019 feature

WayPtNav: A new approach for robot navigation in novel environments

Researchers at UC Berkeley and Facebook AI Research have recently developed a new approach for robot navigation in unknown environments. Their approach, presented in a paper pre-published on arXiv, combines model-based control techniques with learning-based perception.

The development of tools that allow robots to navigate surrounding environments is a key and ongoing challenge in the field of robotics. In recent decades, researchers have tried to tackle this problem in a variety of ways.

The control research community has primarily investigated navigation for a known agent (or system) within a known environment. In these instances, a dynamics model of the agent and a geometric map of the environment it will be navigating are available, hence optimal-control schemes can be used to obtain smooth and collision-free trajectories for the robot to reach a desired location.

These schemes are typically used to control a number of real physical systems, such as airplanes or industrial robots. However, these approaches are somewhat limited, as they require explicit knowledge of the environment that a system will be navigating. In the learning research community, on the other hand, robot navigation is generally studied for an unknown agent exploring an unknown environment. This means that a system acquires policies to directly map on-board sensor readings to control commands in an end-to-end manner.

These approaches can have several advantages, as they allow policies to be learned without any knowledge of the system and the environment it will be navigating. Nonetheless, past studies suggest that these techniques do not generalize well across different agents. In addition, learning such policies often requires a vast number of training samples.

"In this paper, we study robot navigation in static environments under the assumption of perfect robot state measurement," the researchers wrote in their paper. "We make the crucial observation that most interesting problems involve a known system in an unknown environment. This observation motivates the design of a factorized approach that uses learning to tackle unknown environments and leverages optimal control using known system dynamics to produce smooth locomotion."

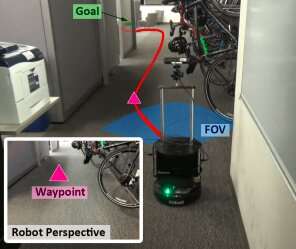

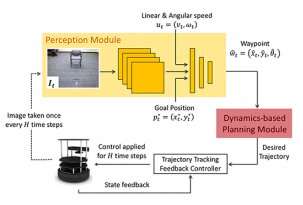

The team of researchers at UC Berkeley and Facebook trained a convolutional neural network (CNN)-based high-level policy, which uses the current RGB image observation to produce a sequence of intermediate states, or 'waypoints'. These waypoints ultimately guide a robot to its desired location along a collision-free path in previously unknown environments.

Their approach, dubbed waypoint-based navigation (WayPtNav), essentially couples model-based control techniques with learning-based perception. The learning-based perception module generates waypoints, which guide the robot to its target location via a collision-free path. The model-based planner, in turn, uses these waypoints to generate a smooth and dynamically feasible trajectory, which is then executed on the system using feedback control.
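The division of labor described above can be illustrated with a minimal Python sketch. It is not the researchers' implementation: the article does not specify the planner or the controller, so the cubic Hermite curve, the unicycle motion model, the proportional steering law, and every name and parameter below (`predict_waypoint`, `plan_cubic`, `track`, the gains and the lookahead distance) are assumptions chosen for illustration. The perception module is replaced by a stub that returns a fixed waypoint, and the sketch does not check dynamic feasibility or obstacles.

```python
import math

def predict_waypoint(rgb_image):
    """Hypothetical stand-in for the learned perception module.

    A real system would run a trained CNN on the image; this stub ignores
    its input and returns a fixed waypoint (x, y, heading) in the robot frame.
    """
    return (2.0, 1.0, math.pi / 4)

def plan_cubic(start, goal, n=50, scale=2.0):
    """Cubic Hermite curve joining two poses, matching position and heading."""
    (x0, y0, th0), (x1, y1, th1) = start, goal
    # End tangents point along the start and goal headings.
    tx0, ty0 = scale * math.cos(th0), scale * math.sin(th0)
    tx1, ty1 = scale * math.cos(th1), scale * math.sin(th1)
    path = []
    for i in range(n + 1):
        s = i / n
        h00 = 2 * s**3 - 3 * s**2 + 1
        h10 = s**3 - 2 * s**2 + s
        h01 = -2 * s**3 + 3 * s**2
        h11 = s**3 - s**2
        path.append((h00 * x0 + h10 * tx0 + h01 * x1 + h11 * tx1,
                     h00 * y0 + h10 * ty0 + h01 * y1 + h11 * ty1))
    return path

def track(path, pose, v_max=0.5, k_turn=2.0, lookahead=0.3,
          dt=0.05, tol=0.05, max_steps=2000):
    """Follow the path with a unicycle model and proportional steering."""
    x, y, th = pose
    gx, gy = path[-1]
    idx = 0
    for _ in range(max_steps):
        if math.hypot(gx - x, gy - y) < tol:
            break
        # Advance the target to the first path point beyond the lookahead.
        while (idx < len(path) - 1
               and math.hypot(path[idx][0] - x, path[idx][1] - y) < lookahead):
            idx += 1
        tx, ty = path[idx]
        err = math.atan2(ty - y, tx - x) - th
        err = math.atan2(math.sin(err), math.cos(err))  # wrap to [-pi, pi]
        v = v_max * max(0.0, math.cos(err))  # slow down when misaligned
        w = k_turn * err                     # turn toward the target point
        x += v * math.cos(th) * dt
        y += v * math.sin(th) * dt
        th += w * dt
    return (x, y, th)

start = (0.0, 0.0, 0.0)
waypoint = predict_waypoint(rgb_image=None)   # perception: image -> waypoint
path = plan_cubic(start, waypoint)            # planning: waypoint -> smooth path
final_pose = track(path, start)               # control: feedback tracking
```

In this sketch the learned component only has to output a single pose, while smoothness comes from the curve and robustness to tracking error comes from the feedback loop. The tracker steers toward positions only, so it does not enforce the waypoint's heading at arrival.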

The researchers evaluated their approach on a TurtleBot2 hardware testbed. Their tests yielded promising results, with WayPtNav enabling navigation in cluttered and dynamic environments, while also outperforming an end-to-end learning approach.

"Our experiments in simulated real-world cluttered environments and on an actual ground vehicle demonstrate that the proposed approach can reach goal locations more reliably and efficiently in novel environments as compared to a purely end-to-end learning-based alternative," the researchers wrote.

The new approach presented by this team of researchers could enhance robot navigation in novel indoor environments. Future studies could try to improve WayPtNav further, addressing some of its current limitations.

"Our proposed approach assumes perfect robot state estimate and employs a purely reactive policy," the researchers explained. "These assumptions and choices may not be optimal, especially for long range tasks. Incorporating spatial or visual memory to address these limitations would be fruitful future directions."

More information: Combining optimal control and learning for visual navigation in novel environments. arXiv:1903.02531 [cs.RO].

© 2019 Science X Network