Biologically-inspired skin improves robots' sensory abilities

Sensitive synthetic skin enables robots to sense their own bodies and surroundings—a crucial capability if they are to be in close contact with people. Inspired by human skin, a team at the Technical University of Munich (TUM) has developed a system combining artificial skin with control algorithms and used it to create the first autonomous humanoid robot with full-body artificial skin.

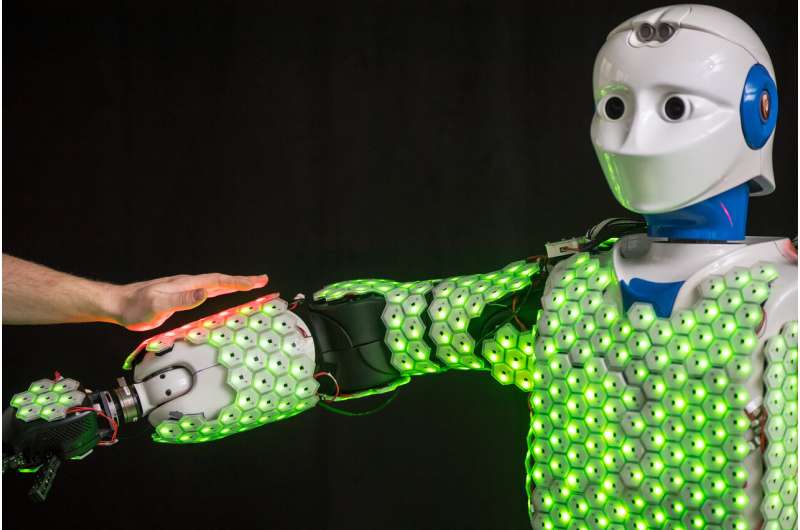

The artificial skin developed by Prof. Gordon Cheng and his team consists of hexagonal cells about the size of a two-euro coin (i.e. about one inch in diameter). Each cell is equipped with a microprocessor and sensors to detect contact, acceleration, proximity and temperature. Such artificial skin enables robots to perceive their surroundings in much greater detail and with more sensitivity. This not only helps them move safely; it also makes them safer when operating near people and gives them the ability to anticipate and actively avoid accidents.

The skin cells themselves were developed around 10 years ago by Gordon Cheng, Professor of Cognitive Systems at TUM. But this invention only revealed its full potential when integrated into a sophisticated system as described in the latest issue of the journal Proceedings of the IEEE.

More computing capacity through event-based approach

The biggest obstacle in developing robot skin has always been computing capacity. Human skin has around 5 million receptors. Efforts to implement continuous processing of data from sensors in artificial skin soon run up against limits. Previous systems were quickly overloaded with data from just a few hundred sensors.

To overcome this problem, Gordon Cheng and his team took a neuro-engineering approach: instead of monitoring the skin cells continuously, they use an event-based system. This reduces the processing effort by up to 90 percent. The trick: the individual cells transmit information from their sensors only when the values change. This is similar to the way the human nervous system works. For example, we feel a hat when we first put it on, but we quickly get used to the sensation. There is no need to notice the hat again until the wind blows it off our head. This enables our nervous system to concentrate on new impressions that require a physical response.
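The event-based idea can be illustrated with a short Python sketch. It is not the TUM implementation: the `SkinCell` class, the `run_stream` helper, the per-sensor thresholds and the sample values are all hypothetical, and the change test used here (report a reading only when it differs from the last reported value by at least a threshold) is just one simple way to decide that a value has changed.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# An event is (cell_id, sensor_name, value). This layout is an assumption.
Event = Tuple[int, str, float]


@dataclass
class SkinCell:
    """Hypothetical skin cell that reports a reading only when it changes."""
    cell_id: int
    thresholds: Dict[str, float]          # assumed per-sensor change thresholds
    last_sent: Dict[str, float] = field(default_factory=dict)

    def sample(self, readings: Dict[str, float]) -> List[Event]:
        """Return events only for sensors whose value changed enough."""
        events: List[Event] = []
        for name, value in readings.items():
            previous = self.last_sent.get(name)
            threshold = self.thresholds.get(name, 0.0)
            # Always report the first reading; afterwards report only changes.
            if previous is None or abs(value - previous) >= threshold:
                self.last_sent[name] = value
                events.append((self.cell_id, name, value))
        return events


def run_stream(cell: SkinCell, sensor: str, values: List[float]) -> List[Event]:
    """Feed a sequence of readings for one sensor and collect the events."""
    events: List[Event] = []
    for value in values:
        events.extend(cell.sample({sensor: value}))
    return events


# The hat example: no contact, hat put on (constant pressure), hat blown off.
hat_pressure = [0.0, 0.0, 0.5, 0.5, 0.5, 0.5, 0.0]
hat_cell = SkinCell(cell_id=1, thresholds={"pressure": 0.1})
hat_events = run_stream(hat_cell, "pressure", hat_pressure)
```

In this toy run, seven samples produce only three events: the initial reading, the moment the hat goes on and the moment it comes off. A continuously polled system would have had to process all seven samples.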

Safety even in case of close bodily contact

With the event-based approach, Prof. Cheng and his team have now succeeded in applying artificial skin to a human-size autonomous robot that does not depend on any external computation. The H-1 robot is equipped with 1,260 cells (with more than 13,000 sensors) on its upper body, arms, legs and even the soles of its feet. This gives it a new "bodily sensation". For example, with its sensitive feet, H-1 is able to respond to uneven floor surfaces and even balance on one leg.

With its special skin, the H-1 can even give a person a hug safely. That is less trivial than it sounds: Robots can exert forces that would seriously injure a human being. During a hug, two bodies are touching in many different places. The robot must use this complex information to calculate the right movements and exert the correct contact pressures. "This might not be as important in industrial applications, but in areas such as nursing care, robots must be designed for very close contact with people," explains Gordon Cheng.

Versatile and robust

Gordon Cheng's robot skin system is also highly robust and versatile. Because the skin consists of cells, and not a single piece of material, it remains functional even if some cells stop working. "Our system is designed to work trouble-free and quickly with all kinds of robots," says Gordon Cheng. "Now we're working to create smaller skin cells with the potential to be produced in larger numbers."

More information: Gordon Cheng et al. A Comprehensive Realization of Robot Skin: Sensors, Sensing, Control, and Applications, Proceedings of the IEEE (2019). DOI: 10.1109/JPROC.2019.2933348

Florian Bergner et al. Evaluation of a Large Scale Event Driven Robot Skin, IEEE Robotics and Automation Letters (2019). DOI: 10.1109/LRA.2019.2930493

Julio Rogelio Guadarrama-Olvera et al. Pressure-Driven Body Compliance Using Robot Skin, IEEE Robotics and Automation Letters (2019). DOI: 10.1109/LRA.2019.2928214