December 11, 2019 feature

ROBOSHERLOCK: a system to enhance robot performance on manipulation tasks

Over the past decade or so, advancements in machine learning have enabled the development of systems that are increasingly autonomous, including self-driving vehicles, virtual assistants and mobile robots. Among other things, researchers developing autonomous systems need to identify ways to integrate components designed to tackle different and yet complementary sub-tasks.

For instance, a robot that completes manual tasks in a human user's home should be able to sense objects in its environment while also retrieving information about these objects that can then be used to plan its movements and actions. This process, also known as the "perception-cognition-action" paradigm, is of crucial importance, as it ultimately allows the robot to come up with useful strategies and efficiently complete tasks.

So far, most methods to implement this perception-cognition-action paradigm in robots treat these three tasks as almost entirely independent modules that act as black boxes for one another. A team of researchers at the University of Bremen and the University of Munich in Germany, however, believes that linking a robot's "perception" system with its cognition (i.e., its ability to "reason" or retrieve information about objects in the surrounding environment) could significantly improve its overall performance.

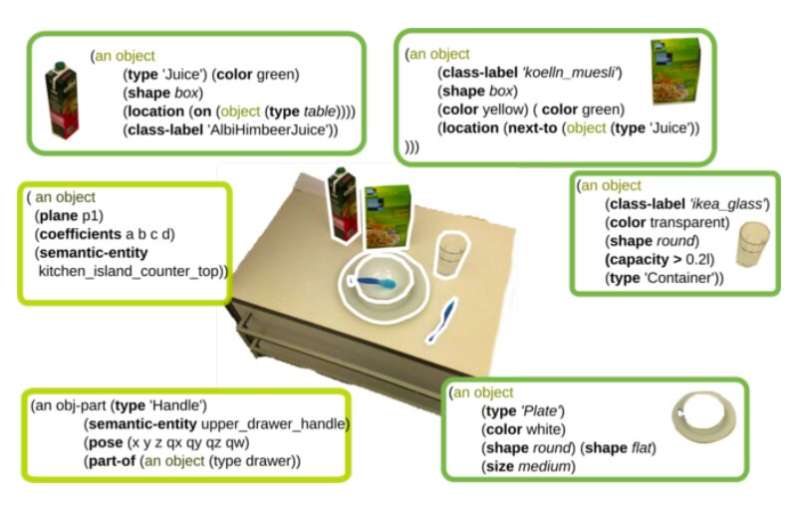

With this in mind, the researchers recently developed a cognitive perception system that could enhance the performance of mobile robots in everyday manipulation tasks. This system, dubbed ROBOSHERLOCK, attains perception via content analytics (CA), a strategy that entails the use of statistical methods to analyze vast amounts of data.

The data analyzed by ROBOSHERLOCK is "unstructured," meaning that, unlike the contents of a database or spreadsheet, its structure does not reflect the semantics associated with it. The system thus uses a strategy known as unstructured information management (UIM), which essentially means that it processes large quantities of unstructured data (e.g., text documents, audio files or images) using a set of information extraction algorithms. Each of these algorithms extracts different types of knowledge depending on its "expertise," and their outputs are subsequently rated and combined to reach a single consistent decision.
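As a rough illustration of this idea, and not the researchers' actual implementation, the Python sketch below runs two toy "expert" algorithms over one image region and merges their rated hypotheses into a single decision. The expert functions, the feature names (`color`, `shape`), the confidence values and the sum-of-confidences rule are all assumptions made for this example.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Annotation:
    """One expert's hypothesis about a region, rated with a confidence."""
    source: str
    label: str
    confidence: float


# A hypothetical "expert": takes region features, returns rated hypotheses.
Expert = Callable[[Dict[str, str]], List[Annotation]]


def color_expert(region: Dict[str, str]) -> List[Annotation]:
    # Assumed rule: yellow is equally consistent with a banana or a lemon.
    if region.get("color") == "yellow":
        return [Annotation("color", "banana", 0.5),
                Annotation("color", "lemon", 0.5)]
    return []


def shape_expert(region: Dict[str, str]) -> List[Annotation]:
    # Assumed rule: an elongated shape favors a banana over a cucumber.
    if region.get("shape") == "elongated":
        return [Annotation("shape", "banana", 0.7),
                Annotation("shape", "cucumber", 0.3)]
    return []


def combine(annotations: List[Annotation]) -> Optional[str]:
    """Sum the confidences per label and return the best-supported label."""
    scores: Dict[str, float] = defaultdict(float)
    for annotation in annotations:
        scores[annotation.label] += annotation.confidence
    if not scores:
        return None  # no expert had an opinion
    return max(scores, key=lambda label: scores[label])


def perceive(region: Dict[str, str], experts: List[Expert]) -> Optional[str]:
    """Run every expert on the region, then merge their hypotheses."""
    annotations = [a for expert in experts for a in expert(region)]
    return combine(annotations)


decision = perceive({"color": "yellow", "shape": "elongated"},
                    [color_expert, shape_expert])
```

Here neither expert is conclusive on its own, but both lend support to the same label, so the combined decision favors it over the alternatives each expert proposed alone.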

"In ROBOSHERLOCK, perception and interpretation of realistic scenes is formulated as an unstructured information management (UIM) problem," the researchers wrote in their paper. "The application of the UIM principle supports the implementation of perception systems that can answer task-relevant queries about objects in a scene, boost object recognition performance by combining the strengths of multiple perception algorithms, support knowledge-enabled reasoning about objects and enable automatic and knowledge-driven generation of processing pipelines."

The researchers evaluated their framework in a series of tests, applying it to different systems for real-world scene perception. They found that "reasoning" about (i.e., processing) the background knowledge retrieved by its algorithms allows ROBOSHERLOCK to answer a wide variety of questions, going beyond what is directly perceivable in the surrounding environment.
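To make "going beyond what is directly perceivable" more concrete, here is a minimal Python sketch, under invented assumptions, of how detected object labels might be joined with background knowledge to answer a query that a camera alone could not. The `KNOWLEDGE` table, its property names and the `answer` function are hypothetical and are not taken from ROBOSHERLOCK.

```python
from typing import Dict, List

# Hypothetical background knowledge, keyed by object label.
KNOWLEDGE: Dict[str, Dict[str, object]] = {
    "milk_carton": {"perishable": True, "storage": "refrigerator"},
    "cereal_box": {"perishable": False, "storage": "cupboard"},
}


def answer(detections: List[str], prop: str, value: object) -> List[str]:
    """Return the detected labels whose known property `prop` equals `value`.

    Labels with no background knowledge are skipped rather than guessed.
    """
    return [label for label in detections
            if prop in KNOWLEDGE.get(label, {})
            and KNOWLEDGE[label][prop] == value]


# Labels as they might come out of a perception step (assumed input).
seen = ["milk_carton", "cereal_box", "unknown_object"]
perishable = answer(seen, "perishable", True)
```

Whether an item is perishable is not visible in an image; in this sketch the answer comes only from linking the recognized label to stored knowledge.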

The components of ROBOSHERLOCK presented by the researchers in their recent study could be seen as its core functionalities. Subsequently, the researchers have also developed several extensions that enhance the system's cognitive capabilities. For instance, they created an extension that allows the system to detect humans and objects simultaneously, reasoning about the actions that the humans are performing and the intentions behind these actions.

"More recently, we have investigated how the ROBOSHERLOCK framework can enable the agents to 'dream' and using state of the art gaming engines generate variations of a task and learn new perception models," the researchers wrote in their paper. "All of these extensions look at robot perception from the perspective of a robot performing tasks, which would not have been possible without the core framework presented here."

More information: RoboSherlock: cognition-enabled robot perception for everyday manipulation tasks. arXiv:1911.10079 [cs.RO]. arxiv.org/abs/1911.10079

© 2019 Science X Network