Blueprint for the perfect coronavirus app

Many countries are turning to digital aids to help manage the COVID-19 pandemic. ETH researchers are now pointing out the ethical challenges that need to be taken into account and the issues that need careful consideration when planning, developing and implementing such tools.

Handwashing, social distancing and mask wearing: all these measures have proven effective in the current COVID-19 pandemic—just as they were 100 years ago when the Spanish flu was raging throughout the world. However, the difference this time is that we have additional options at our disposal. Many countries are now using digital tools such as tracing apps to supplement those tried and tested measures.

Whether these new tools will actually achieve the desired effect remains to be seen. There are several reasons why they might not be as successful as hoped: technical shortcomings, lack of acceptance by the population and inaccurate data are all factors that could hinder their success. In the worst case, digital aids could become a data protection nightmare or lead to discrimination against certain groups of the population.

No miracle solution

And that's the exact opposite of what these tools should do, says Effy Vayena, Professor of Bioethics at ETH Zurich. She and her group recently published a comprehensive study outlining the ethical and legal aspects that must be considered when developing and implementing digital tools. "These can naturally be very useful instruments," she explains, "but you can't expect miracles from them."

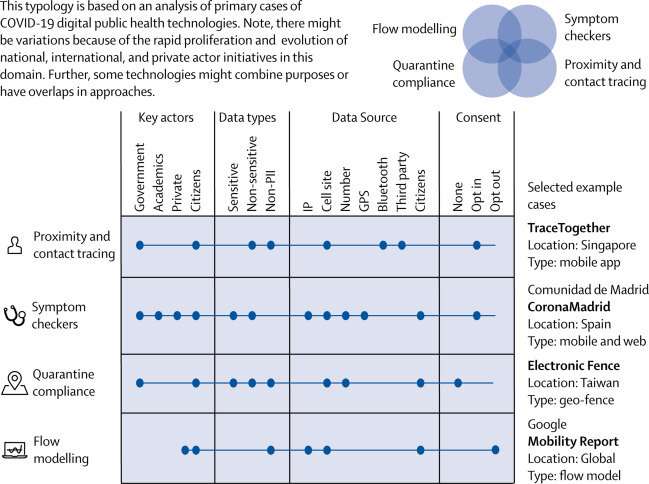

The authors of the study focused their investigation on four tool categories: contact tracing apps, including the SwissCovid app co-developed by EPFL and ETH Zurich; programs that can be used to assess whether an infection is present based on symptoms; apps for checking whether quarantine regulations are being observed; and flow models of the kind that Google uses for mobility reports.

A careless approach will backfire

Although such tools are all very different, in each case it is crucial to carefully weigh up the advantages of the technology against the potential disadvantages for society, for example with regard to data protection. That might sound obvious. But it's precisely in acute situations, when policymakers and the public are keen for tools to be made available quickly, that there seems to be too little time to clarify these points in detail. Don't be deceived, Vayena says: "People sometimes have completely unrealistic expectations of what an app can do, but one single technology can never be the answer to the whole problem. And if the solution we've come up with is flawed because we didn't consider everything carefully enough, then that will undermine its long-term success."

For that reason, Vayena stresses the critical importance of rigorous scientific validation. "Does the technology actually work as intended? Is it effective enough? Does it provide enough reliable data? We have to keep verifying all those things," she says. There are also many open questions surrounding social acceptance, she adds: "Back in April, over 70 percent of people in Switzerland were saying they would install the coronavirus app as soon as it became available. Now, at the end of June, over half say they don't want to install it. Why the sudden change of heart?"

Considering the adverse effects

The researchers highlight a whole range of ethical issues that need to be considered in the development stage. For example, developers must ensure that the data an app collects is not used for anything other than its intended purpose without the users' prior knowledge. A shocking example of such misuse is a Chinese app for assessing quarantine measures, which reportedly shares information directly with the police. Another of the ETH researchers' stipulations is that these digital tools be deployed only for a limited timeframe so as to ensure that the authorities do not subsequently misuse them for monitoring the population.

To round off, the authors also address issues of accessibility and discrimination. Some apps collect socio-demographic data. While this information is useful for health authorities, there is a risk it may lead to discrimination. At the start of the outbreak, it became apparent just how quickly some people were prepared to denigrate others in a crisis, when all people of Asian origin were being wrongly suspected of possibly carrying the coronavirus. "You have to think about these adverse effects from the outset," Vayena explains.

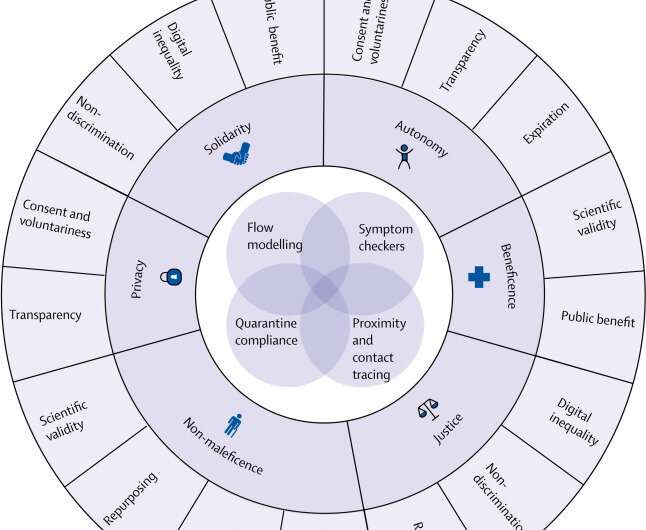

The same principles apply everywhere

So how should developers of such apps go about it? The researchers provide a step-by-step overview of the questions that must be answered in each phase of the development process—from planning all the way through to implementation. "Of course, there will always be certain features that are specific to different countries," Vayena says, "but the basic principles—respecting autonomy and privacy, promoting health care and solidarity, and preventing new infections and malicious behavior—are the same everywhere. By taking these fundamentals into account, it's possible to find technically and ethically sound solutions that can play a valuable role in overcoming the crisis in hand."

More information: Urs Gasser et al. Digital tools against COVID-19: taxonomy, ethical challenges, and navigation aid, The Lancet Digital Health (2020). DOI: 10.1016/S2589-7500(20)30137-0