AI taught to rapidly assess disaster damage so humans know where help is needed most

Researchers at Hiroshima University have taught an AI to look at post-disaster aerial images and accurately determine how battered the buildings are—a technology that crisis responders can use to map damage and identify extremely devastated areas where help is needed the most.

Quick action in the first 72 hours after a calamity is critical in saving lives. And the first thing disaster officials need to plan an effective response is accurate damage assessment. But anyone who has seen aftermath scenes of a natural catastrophe knows the many logistical challenges that can make on-site evaluation a danger to the lives of crisis responders.

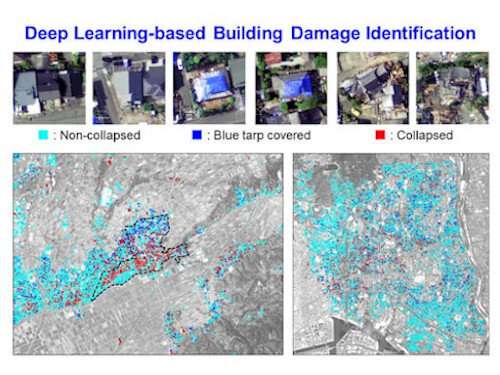

Using a convolutional neural network (CNN), a deep learning algorithm inspired by the human brain's image recognition process, a team led by Associate Professor Hiroyuki Miura of Hiroshima University's Graduate School of Advanced Science and Engineering trained an AI to finish in an instant a task that usually takes up crucial hours and personnel at a time when resources are scarce.

Previous CNN models that assess damage require both before and after photos to give an evaluation. But Miura's model doesn't need pre-disaster images. It only relies on post-disaster photos to determine building damage.

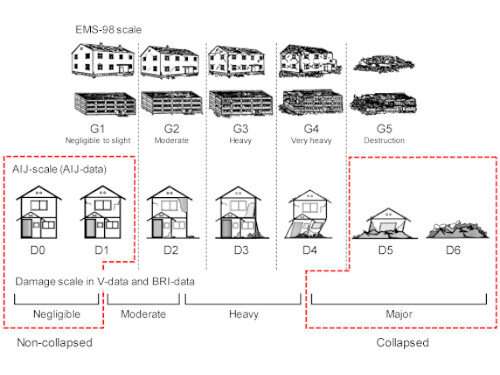

It works by classifying buildings as collapsed, non-collapsed, or blue tarp-covered based on the seven-grade damage scale (D0-D6) that the Architectural Institute of Japan used for the 2016 Kumamoto earthquakes.

A collapsed building is defined as D5-D6, or major damage. A non-collapsed building is defined as D0-D1, or negligible damage. Intermediate damage, which was rarely considered in previous CNN models, is designated as D2-D3, or moderate damage.

The researchers trained their CNN model using post-disaster aerial images and building damage inventories compiled by experts for the 1995 Kobe and 2016 Kumamoto earthquakes.

The researchers overcame the challenge of identifying buildings that suffered intermediate damage after confirming that blue tarp-covered structures in photos used to train the AI predominantly represented D2-D3 levels of devastation.
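The grade-to-class correspondence described above can be sketched in a few lines of Python. This is only an illustration of the mapping as the article states it, not the team's code: the names `GRADE_TO_CLASS` and `damage_class` are hypothetical, the article says blue tarps "predominantly" (not always) indicate D2-D3, and it does not say how D4 is treated, so the sketch leaves D4 unmapped.

```python
from typing import Optional

# Grade ranges as stated in the article. D4 is not assigned to any class
# there, so it is deliberately left out rather than guessed.
GRADE_TO_CLASS = {
    0: "non-collapsed", 1: "non-collapsed",          # D0-D1: negligible damage
    2: "blue tarp-covered", 3: "blue tarp-covered",  # D2-D3: moderate damage
    5: "collapsed", 6: "collapsed",                  # D5-D6: major damage
}


def damage_class(grade: str) -> Optional[str]:
    """Map a grade string such as 'D3' to a class label.

    Returns None for a valid grade that the article does not assign (D4).
    Raises ValueError for anything outside D0-D6.
    """
    digits = grade[1:]
    if not grade[:1].upper() == "D" or not digits.isdecimal():
        raise ValueError(f"not a damage grade: {grade!r}")
    level = int(digits)
    if not 0 <= level <= 6:
        raise ValueError(f"grade out of range D0-D6: {grade!r}")
    return GRADE_TO_CLASS.get(level)


if __name__ == "__main__":
    for g in ["D0", "D3", "D4", "D6"]:
        print(g, "->", damage_class(g))
```

Returning `None` for D4 keeps the gap in the article visible instead of silently folding that grade into a neighboring class.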

Since ground truth data from field investigations by structural engineers were used to teach the AI, the team believes its evaluations are more reliable than those of other CNN models that depended on visual interpretations by non-experts.

When they tested it on aerial images taken after the typhoon that hit Chiba in September 2019, the damage levels of approximately 94% of buildings were correctly classified.
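A figure like this is typically the share of buildings whose predicted class matches the expert label. The snippet below is a minimal sketch of that calculation, assuming per-building labels are available as two equal-length lists. The function name `classification_accuracy` and the five sample labels are hypothetical and are not data from the study.

```python
from typing import Sequence


def classification_accuracy(predicted: Sequence[str], actual: Sequence[str]) -> float:
    """Fraction of buildings whose predicted class equals the expert label."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        raise ValueError("at least one building is required")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


if __name__ == "__main__":
    # Made-up labels for five buildings, for illustration only.
    expert = ["collapsed", "non-collapsed", "blue tarp-covered", "non-collapsed", "collapsed"]
    model = ["collapsed", "non-collapsed", "non-collapsed", "non-collapsed", "collapsed"]
    print(f"{classification_accuracy(model, expert):.0%}")  # 4 of 5 match: 80%
```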

Now, the researchers want to make the AI's damage assessment more robust by training it on data from a wider range of disasters.

"We would like to develop a more robust damage identification method by learning more training data obtained from various disasters such as landslides, tsunami, and etcetera," Miura said.

"The final goal of this study is the implementation of the technique to the real disaster situation. If the technique is successfully implemented, it can immediately provide accurate damage maps not only damage distribution but also the number of damaged buildings to local governments and governmental agencies."

More information: Hiroyuki Miura et al, Deep Learning-Based Identification of Collapsed, Non-Collapsed and Blue Tarp-Covered Buildings from Post-Disaster Aerial Images, Remote Sensing (2020). DOI: 10.3390/rs12121924