Biomimetic resonant acoustic sensor detecting far-distant voices accurately to hit the market

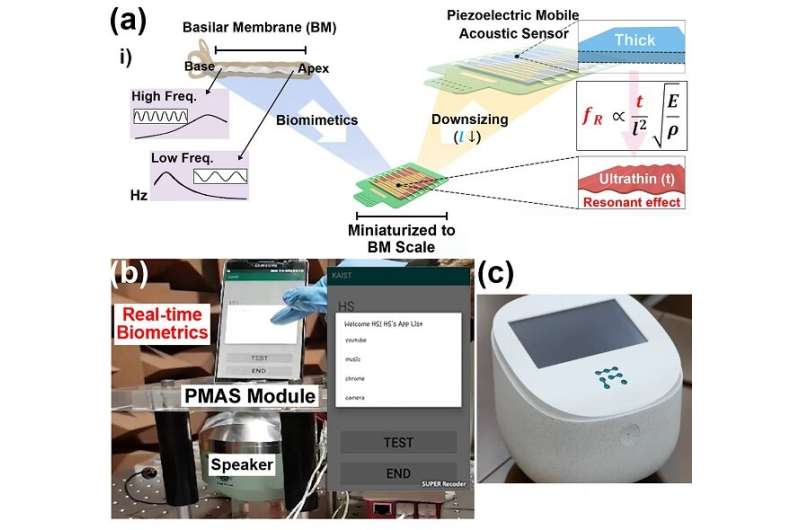

A KAIST research team led by Professor Keon Jae Lee from the Department of Materials Science and Engineering has developed a bioinspired flexible piezoelectric acoustic sensor with a multi-resonant ultrathin piezoelectric membrane that mimics the basilar membrane of the human cochlea. The flexible acoustic sensor has been miniaturized for embedding into smartphones, and the first commercial prototype is ready for accurate and far-distant voice detection.

In 2018, Professor Lee presented the first concept of a flexible piezoelectric acoustic sensor, inspired by the fact that humans can accurately detect far-distant voices using a multi-resonant trapezoidal membrane with 20,000 hair cells. However, previous acoustic sensors could not be integrated into commercial products like smartphones and AI speakers due to their large device size.

In this work, the research team fabricated a mobile-sized acoustic sensor by adopting ultrathin piezoelectric membranes with high sensitivity. Simulation studies showed that an ultrathin polymer layer underneath the inorganic piezoelectric thin film can broaden the resonant bandwidth to cover the entire voice frequency range using seven channels. Based on this theory, the research team successfully demonstrated the miniaturized acoustic sensor mounted in commercial smartphones and AI speakers for machine learning-based biometric authentication and voice processing. (Please refer to the explanatory movie, "KAIST Flexible Piezoelectric Mobile Acoustic Sensor.")

The resonant mobile acoustic sensor offers superior sensitivity and multi-channel signals compared to conventional single-channel condenser microphones, and it has shown highly accurate, far-distant speaker identification with a small amount of voice training data. Compared to a MEMS condenser device, the error rate of speaker identification was reduced by 56% (with 150 training datasets) and by 75% (with 2,800 training datasets).
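To make the reported percentages concrete, the short sketch below shows how a relative error-rate reduction is computed and what it implies for the remaining error. The baseline error rate of 10% is purely hypothetical and chosen for illustration; the article does not state the absolute error rates, and the function names are assumptions introduced here.

```python
def relative_error_reduction(baseline_error: float, new_error: float) -> float:
    """Fraction by which new_error is lower than baseline_error."""
    if baseline_error <= 0:
        raise ValueError("baseline_error must be positive")
    return (baseline_error - new_error) / baseline_error


def remaining_error(baseline_error: float, reduction: float) -> float:
    """Error rate left after applying a relative reduction (0.56 means 56%)."""
    return baseline_error * (1.0 - reduction)


# HYPOTHETICAL baseline: assume the reference microphone misidentifies
# 10% of speakers. This number is NOT from the article.
hypothetical_baseline = 0.10

# The two relative reductions reported in the article.
for reduction in (0.56, 0.75):
    new_error = remaining_error(hypothetical_baseline, reduction)
    print(f"reduction {reduction:.0%}: "
          f"{hypothetical_baseline:.1%} -> {new_error:.1%}")
```

Under this assumed baseline, a 75% reduction would leave one quarter of the original error rate; the actual figures depend on the measured baseline reported in the paper.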

Professor Lee said, "Recently, Google has been targeting the 'Wolverine Project' on far-distant voice separation from multi-users for next-generation AI user interfaces. I expect that our multi-channel resonant acoustic sensor with abundant voice information is the best fit for this application. Currently, the mass production process is on the verge of completion, so we hope that this will be used in our daily lives very soon."

Professor Lee also established a startup company called Fronics Inc., located in both Korea and the U.S. (branch office), to commercialize this flexible acoustic sensor and is seeking collaborations with global AI companies.

These research results, titled "Biomimetic and Flexible Piezoelectric Mobile Acoustic Sensors with Multi-Resonant Ultrathin Structures for Machine Learning Biometrics," were published in Science Advances in 2021 (7, eabe5683).

More information: Hee Seung Wang et al, Biomimetic and flexible piezoelectric mobile acoustic sensors with multiresonant ultrathin structures for machine learning biometrics, Science Advances (2021). DOI: 10.1126/sciadv.abe5683