November 26, 2021 feature

A deep learning method to automatically enhance dog animations

Researchers at Trinity College Dublin and University of Bath have recently developed a model based on deep neural networks that could help to improve the quality of animations containing quadruped animals, such as dogs. The framework they created was presented at the MIG (Motion, Interaction & Games) 2021 conference, an event where researchers present some of the latest technologies for producing high-quality animations and videogames.

"We were interested in working with non-human data," Donal Egan, one of the researchers who carried out the study, told TechXplore. "We chose dogs for practicality reasons, as they are probably the easiest animal to obtain data for."

Creating good-quality animations of dogs and other quadruped animals is a challenging task, mainly because these animals move in complex ways and have unique gaits with specific footfall patterns. Egan and his colleagues wanted to create a framework that could simplify the creation of quadruped animations, producing more convincing content for both animated videos and videogames.

"Creating animations reproducing quadruped motion using traditional methods such as key-framing, is quite challenging," Egan said. "That's why we thought it would be useful to develop a system which could automatically enhance an initial rough animation, removing the need for a user to handcraft a highly realistic one."

The recent study carried out by Egan and his colleagues builds on previous efforts aimed at using deep learning to generate and predict human motions. To achieve similar results with quadruped motions, they used a large set of motion capture data representing the movements of a real dog. This data was used to create several high-quality and realistic dog animations.

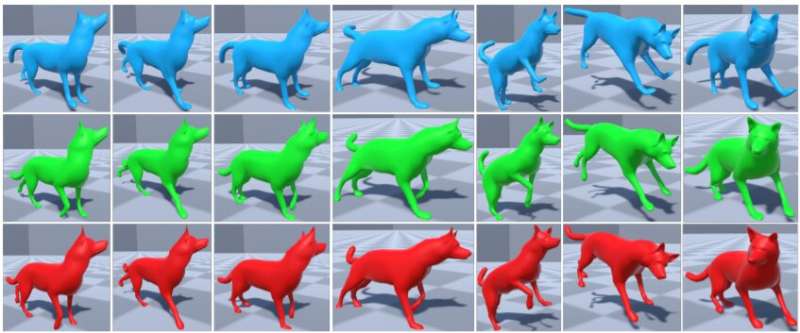

"For each of these animations, we were able to automatically create a corresponding 'bad' animation with the same context but of a reduced quality, i.e., containing errors and lacking many subtle details of true dog motion," Donal Egan, one of the researchers who carried out the study, told TechXplore. "We then trained a neural network to learn the difference between these 'bad' animations and the high-quality animations."

Once trained on pairs of low- and high-quality animations, the researchers' neural network learned to enhance animations of dogs, improving their quality and making them more realistic. The team's premise was that, at run time, the input animations could have been created using a variety of methods, including key-framing techniques, and so might not be very convincing.
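To make the idea of paired "bad" and high-quality animations more concrete, here is a minimal Python sketch. It is not the researchers' method: the article does not say how the low-quality versions were generated, so the sketch assumes one plausible degradation (keeping only sparse keyframes and linearly interpolating between them, which discards subtle in-between motion). The names `Pose`, `degrade`, `make_pairs` and `residual` are hypothetical, and a frame is assumed to be a flat list of joint angles.

```python
from typing import List, Tuple

Pose = List[float]  # one frame: hypothetical joint angles (an assumption)


def degrade(frames: List[Pose], step: int) -> List[Pose]:
    """Keep every `step`-th frame as a keyframe and linearly interpolate the rest.

    Assumed stand-in for the degradation used in the study, which the
    article does not describe in detail.
    """
    if step < 1:
        raise ValueError("step must be >= 1")
    n = len(frames)
    if n == 0:
        return []
    # Keyframe indices; always include the last frame so the clip keeps its endpoints.
    keys = list(range(0, n, step))
    if keys[-1] != n - 1:
        keys.append(n - 1)
    out: List[Pose] = []
    for a, b in zip(keys, keys[1:]):
        span = b - a
        for i in range(a, b):
            t = (i - a) / span  # 0.0 at keyframe a, approaching 1.0 at keyframe b
            out.append([(1 - t) * x + t * y for x, y in zip(frames[a], frames[b])])
    out.append(list(frames[-1]))
    return out


def make_pairs(clips: List[List[Pose]], step: int) -> List[Tuple[List[Pose], List[Pose]]]:
    """Build (bad, good) training pairs, one per high-quality clip."""
    return [(degrade(clip, step), clip) for clip in clips]


def residual(good: List[Pose], bad: List[Pose]) -> List[Pose]:
    """Per-frame difference between a good clip and its degraded version.

    A network could be trained to predict this from the bad clip; that
    training target is an assumption, not a detail given in the article.
    """
    return [[g - b for g, b in zip(gf, bf)] for gf, bf in zip(good, bad)]
```

For a one-joint clip `[[0.0], [5.0], [2.0], [7.0], [4.0]]` and `step=2`, `degrade` keeps frames 0, 2 and 4 and fills the others by interpolation, so the quick up-and-down detail in frames 1 and 3 is lost. The residual then holds exactly that missing detail.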

"We showed that it is possible for a neural network to learn how to add the subtle details that make a quadruped animation look more realistic," Egan said. "The practical implications of our work are the applications that it could be incorporated into. For example, it could be used to speed up an animation pipeline. Some applications create animations using methods such as traditional inverse kinematics, which can produce animations that lack realism; our work could be incorporated as a post-processing step in such situations.

The researchers evaluated their deep learning algorithm in a series of tests and found that it could significantly improve the quality of existing dog animations, without changing the semantics or context of the animation. In the future, their model could be used to speed up and facilitate the creation of animations for use in films or videogames. In their next studies, Egan and his colleagues plan to continue exploring ways in which the movements of dogs could be digitally and graphically reproduced.

"Our group is interested in a wide range of topics, including graphics, animation, machine learning and avatar embodiment in virtual reality," Egan said. "We want to combine these areas to develop a system for the embodiment of quadrupeds in virtual reality—allowing gamers or actors to become a dog in virtual reality. The work discussed in this article could form part of this system, by helping us to produce realistic quadruped animations in VR."

More information: How to train your dog: neural enhancement of quadruped animations. MIG '21: Motion, Interaction and Games (2021). DOI: 10.1145/3487983.3488293.

© 2021 Science X Network