Collections of traffic images used to enhance the perception capabilities of a self-driving car

If self-driving cars are to safely navigate traffic, they need to "understand" the things around them.

They can learn this by practicing with existing images of traffic situations. Panagiotis Meletis combined various collections of traffic images to enhance the perception capabilities of TU/e's self-driving car.

Is that young man with that cap waiting for someone, or does he intend to cross the street? A ball rolls onto the street: will a child come running after it? And is that small blue Toyota going to parallel park, or is it about to drive away? When you drive a car in the city, you constantly need to assess these kinds of situations, which requires some serious traffic insight from the person behind the wheel. One of the major challenges for a self-driving car, therefore, is to arrive at the proper conclusions based on the things it 'sees' around it, so that it will be able to anticipate unexpected situations, a precondition for safely participating in traffic.

The first step towards more insight into traffic situations is to correctly identify the various objects in the images that an autonomous vehicle receives from its camera, Greek researcher Panagiotis Meletis explains. Within the Video Coding & Architectures group, which specializes in image recognition, he worked on a project of the Mobile Perception Systems Lab: a self-driving car that regularly goes out for a test drive on the TU/e campus. "It needs to be able to determine whether it sees a traffic light or a tree, a pedestrian, a cyclist or a vehicle." And on a more detailed level, it also needs to be able to recognize wheels or limbs, because these indicate the direction of movement and intentions of the road users.

Gray torsos

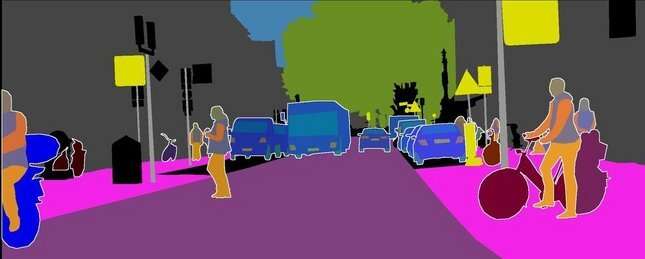

You can teach an artificial neural network (the artificial intelligence, or AI) to analyze a traffic scene by feeding it large quantities of images of traffic situations, in which all relevant elements have been labeled. You can then measure the AI's level of understanding by feeding it new, unlabeled images. An example of such an image can be seen in the accompanying figure. In the lower image, the colors show us how the AI interpreted the image: cars are blue, bicycles are dark red, people's arms are colored orange and their torsos are gray.
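To make the color-coded output concrete, here is a minimal Python sketch of turning a per-pixel class prediction into a colored image and scoring it against a reference labeling. It is an illustration only, not the group's actual pipeline: the RGB values, the function names `colorize` and `pixel_accuracy`, and the idea of scoring with plain pixel accuracy against a held-back reference are all assumptions. The article only names the colors.

```python
# Illustrative sketch only. The RGB values are assumptions; the article
# just says cars are blue, bicycles dark red, arms orange, torsos gray.
CLASS_COLORS = {
    "car": (0, 0, 255),        # blue
    "bicycle": (139, 0, 0),    # dark red
    "arm": (255, 165, 0),      # orange
    "torso": (128, 128, 128),  # gray
}
UNKNOWN_COLOR = (0, 0, 0)  # hypothetical fallback for classes not listed


def colorize(label_map):
    """Replace each per-pixel class name with its display color."""
    return [
        [CLASS_COLORS.get(label, UNKNOWN_COLOR) for label in row]
        for row in label_map
    ]


def pixel_accuracy(predicted, reference):
    """Fraction of pixels whose predicted class matches the reference."""
    total = 0
    correct = 0
    for pred_row, ref_row in zip(predicted, reference):
        for pred, ref in zip(pred_row, ref_row):
            total += 1
            correct += int(pred == ref)
    return correct / total if total else 0.0


# A tiny 2x3 "image": the network's prediction and a held-back reference.
predicted = [["car", "car", "torso"], ["bicycle", "arm", "torso"]]
reference = [["car", "car", "torso"], ["bicycle", "torso", "torso"]]

color_image = colorize(predicted)
accuracy = pixel_accuracy(predicted, reference)
```

In this toy example one of the six pixels is misclassified (an arm predicted where the reference says torso), so the score is five out of six.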

When Meletis started his doctoral research, there were only a few publicly available image datasets depicting traffic scenes, he says. "Now, there are dozens, each with its own focus. Think of images that contain traffic lights, images that contain cyclists, pedestrians, etcetera." The problem, however, was that each dataset was labeled with a different system.

Meletis's contribution was to connect those labels on a higher, so-called semantic level. "To give an idea: cars, buses and trucks all belong in the category 'vehicle.' And cyclists, motorcyclists and chauffeurs are all specific examples of 'drivers.' With the help of those definitions, I was able to train our AI with all the available datasets at the same time. This immediately yielded much better results."
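The idea of linking dataset-specific labels through shared higher-level categories can be sketched in a few lines of Python. This is a simplified illustration, not Meletis's actual training method: the category groupings come from his quote, while the dataset contents, the image identifiers, and the names `SEMANTIC_PARENT`, `to_shared_label` and `merge_datasets` are hypothetical.

```python
# Simplified sketch. The groupings follow the quote in the article;
# everything else (dataset contents, identifiers, names) is hypothetical.
SEMANTIC_PARENT = {
    "car": "vehicle",
    "bus": "vehicle",
    "truck": "vehicle",
    "cyclist": "driver",
    "motorcyclist": "driver",
    "chauffeur": "driver",
}


def to_shared_label(label):
    """Map a dataset-specific label to its higher-level category.

    Labels without a known parent are passed through unchanged.
    """
    return SEMANTIC_PARENT.get(label, label)


def merge_datasets(*datasets):
    """Combine several labeled datasets into one list with shared labels."""
    merged = []
    for samples in datasets:
        for image_id, label in samples:
            merged.append((image_id, to_shared_label(label)))
    return merged


# Two made-up datasets that use different label vocabularies.
dataset_a = [("a_001", "car"), ("a_002", "cyclist")]
dataset_b = [("b_001", "truck"), ("b_002", "chauffeur"), ("b_003", "traffic light")]

combined = merge_datasets(dataset_a, dataset_b)
```

After the mapping, samples labeled "car" in one dataset and "truck" in another can both serve as examples of "vehicle", which is what makes it possible to train on all datasets at once.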

Heavy rain

The power of his method was proven during a workshop organized as part of the Conference on Computer Vision and Pattern Recognition in 2018. "This is a large annual conference with over ten thousand participants, including all the major tech companies. One of the competitions that year was about 'robust vision,' where traffic images with visual hazards, such as heavy rain or overexposure to sunlight, had to be analyzed. Our system performed better than all other participants on a dataset that contained many of such degraded images."

Over the past year, Meletis continued to work on this project as a postdoc, while he was reviewing his thesis, which is common practice in his group. "During that time, we managed to compile two new datasets for holistic scene understanding." And before the pandemic broke out, Meletis was part of TU/e's communication team that recruits students and Ph.D. candidates in his home country of Greece. "I'm very enthusiastic about the university and the atmosphere in Eindhoven, and I wanted to convey that to my compatriots, who perhaps wouldn't take the step and go to TU/e out of fear of the unknown."

And the future? "I'm looking for a job where I hope to put the most recent scientific insights into practice, somewhere at the interface between academia and industry. Preferably in the Netherlands or somewhere else in Europe due to the pandemic. I might also move to the United States when the timing is appropriate."