Neuromorphic simulations can yield computational advantages relevant to many applications

With the insertion of a little math, Sandia National Laboratories researchers have shown that neuromorphic computers, which synthetically replicate the brain's logic, can solve more complex problems than those posed by artificial intelligence and may even earn a place in high-performance computing.

The findings, detailed in a recent article in the journal Nature Electronics, show that neuromorphic simulations using the statistical method called random walks can track X-rays passing through bone and soft tissue, disease passing through a population, information flowing through social networks and the movements of financial markets, among other uses, said Sandia theoretical neuroscientist and lead researcher James Bradley Aimone.

"Basically, we have shown that neuromorphic hardware can yield computational advantages relevant to many applications, not just artificial intelligence, to which it's obviously kin," said Aimone. "Newly discovered applications range from radiation transport and molecular simulations to computational finance, biology modeling and particle physics."

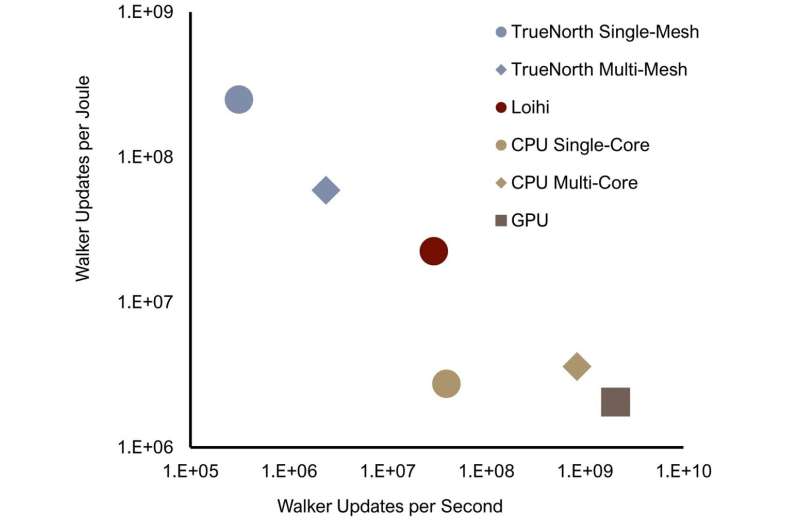

In optimal cases, neuromorphic computers will solve problems faster and use less energy than conventional computing, he said.

The bold assertions should interest the high-performance computing community because finding ways to solve statistical problems is a growing concern, Aimone said.

"These problems aren't really well-suited for GPUs [graphics processing units], which is what future exascale systems are likely going to rely on," Aimone said. "What's exciting is that no one really has looked at neuromorphic computing for these types of applications before."

Sandia engineer and paper author Brian Franke said, "The natural randomness of the processes you list will make them inefficient when directly mapped onto vector processors like GPUs on next-generation computational efforts. Meanwhile, neuromorphic architectures are an intriguing and radically different alternative for particle simulation that may lead to a scalable and energy-efficient approach for solving problems of interest to us."

Franke models photon and electron radiation to understand their effects on components.

The team successfully applied neuromorphic-computing algorithms to model random walks of gaseous molecules diffusing through a barrier, a basic chemistry problem, using the 50-million-neuron Loihi platform Sandia received approximately a year and a half ago from Intel Corp., said Aimone. "Then we showed that our algorithm can be extended to more sophisticated diffusion processes useful in a range of applications."

The claims are not meant to challenge the primacy of standard computing methods used to run utilities, desktops and phones. "There are, however, areas in which the combination of computing speed and lower energy costs may make neuromorphic computing the ultimately desirable choice," he said.

Unlike quantum computers, another promising way to move beyond the limitations of conventional computing, which face real difficulties in adding qubits, chips containing artificial neurons are cheap and easy to install, Aimone said.

There can still be a high cost for moving data on or off the neurochip processor. "As you collect more, it slows down the system, and eventually it won't run at all," said Sandia mathematician and paper author William Severa. "But we overcame this by configuring a small group of neurons that effectively computed summary statistics, and we output those summaries instead of the raw data."

Severa wrote several of the experiment's algorithms.
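Severa's workaround, reading out summaries instead of raw data, has a familiar conventional-computing analogue: streaming statistics. The sketch below is only that analogue, not the neuron circuit used in the paper. It uses Welford's online algorithm to fold each walk's result into a running count, mean and variance, so memory and output stay constant no matter how many walks are run. The helper `random_walk_endpoint` is a hypothetical stand-in for one simulated walk.

```python
import random


class RunningSummary:
    """Constant-memory summary of a stream of values (Welford's online algorithm)."""

    def __init__(self) -> None:
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        """Fold one new value into the summary without storing it."""
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (x - self.mean)

    @property
    def variance(self) -> float:
        """Sample variance; NaN until at least two values have been seen."""
        return self._m2 / (self.count - 1) if self.count > 1 else float("nan")


def random_walk_endpoint(steps: int, rng: random.Random) -> int:
    """Hypothetical stand-in for one walk: final position of a +/-1 random walk."""
    return sum(rng.choice((-1, 1)) for _ in range(steps))


if __name__ == "__main__":
    rng = random.Random(0)
    summary = RunningSummary()
    for _ in range(5_000):
        summary.update(random_walk_endpoint(100, rng))
    # Only count, mean and variance are read out, however many walks were run.
    print(summary.count, round(summary.mean, 2), round(summary.variance, 1))
```

For a 100-step walk of +/-1 steps, the mean should come out near 0 and the variance near 100, yet only three numbers ever leave the loop.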

Like the brain, neuromorphic computing works by electrifying small pin-like structures, adding tiny charges emitted from surrounding sensors until a certain electrical level is reached. Then the pin, like a biological neuron, flashes a tiny electrical burst, an action known as spiking. Unlike conventional computers, which pass information along with metronomic regularity, the artificial neurons of neuromorphic computing flash irregularly, as biological ones do in the brain, and so may take longer to transmit information, said Aimone.

But because the process draws energy from sensors and neurons only when they contribute data, it requires less energy than conventional computing, which must poll every processor whether it is contributing or not. The brain-inspired design has another advantage: its computing and memory components exist in the same structure, while conventional computing spends energy moving data between these two physically separate functions. The slow reaction time of the artificial neurons may initially slow down a solution, but that penalty disappears as the number of neurons increases, because more information becomes available to be totaled in the same period of time, said Aimone.
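The integrate-then-spike behavior described here can be sketched as a discrete-time leaky integrate-and-fire neuron. This is a minimal illustration, not Loihi's neuron model: the `threshold` and `leak` values and the reset-to-zero rule are assumptions chosen for readability.

```python
from dataclasses import dataclass


@dataclass
class IntegrateAndFireNeuron:
    """Discrete-time leaky integrate-and-fire neuron (illustrative parameters)."""

    threshold: float = 1.0  # level at which the neuron spikes
    leak: float = 0.9       # fraction of accumulated charge kept each time step
    potential: float = 0.0  # current accumulated charge

    def step(self, input_charge: float) -> bool:
        """Add incoming charge; return True if the neuron spikes this step."""
        self.potential = self.potential * self.leak + input_charge
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after the spike
            return True
        return False


if __name__ == "__main__":
    neuron = IntegrateAndFireNeuron()
    inputs = [0.3, 0.3, 0.3, 0.3, 0.0, 0.0, 0.6, 0.6]
    spikes = [t for t, q in enumerate(inputs) if neuron.step(q)]
    print(spikes)  # prints [3, 7]
```

The neuron produces output only at time steps 3 and 7. In an event-driven system, downstream work happens only at those moments, which is the intuition behind the energy savings Aimone describes.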

The process begins with a Markov chain, a mathematical construct in which, as on a Monopoly game board, the next outcome depends only on the current state and not on the history of all previous states. That memorylessness contrasts with most linked events, said Sandia mathematician and paper author Darby Smith. For example, he said, the number of days a patient must remain in the hospital is at least partially determined by how long the patient has already stayed.
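The Monopoly analogy can be made concrete with a toy Markov chain. The sketch assumes a simplified board: 40 squares and a roll of two dice, ignoring jail, doubles and Chance cards. The key point is that `next_square` looks only at the current square, never at earlier ones.

```python
import random
from collections import Counter

BOARD_SIZE = 40  # squares on a standard Monopoly board


def next_square(current: int, rng: random.Random) -> int:
    """One Markov-chain transition: the result depends only on the current square."""
    roll = rng.randint(1, 6) + rng.randint(1, 6)  # sum of two six-sided dice
    return (current + roll) % BOARD_SIZE


def simulate(turns: int, seed: int = 0) -> Counter:
    """Count how often each square is landed on over many turns."""
    rng = random.Random(seed)
    square = 0  # start on "Go"
    visits = Counter()
    for _ in range(turns):
        square = next_square(square, rng)  # no memory of earlier squares is used
        visits[square] += 1
    return visits


if __name__ == "__main__":
    visits = simulate(100_000)
    print(visits.most_common(3))
```

Because this simplified chain has no special squares, every square is visited about 1/40 of the time in the long run; real Monopoly rules such as "Go to Jail" break that symmetry while keeping the Markov property.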

Starting from this Markov-chain foundation, the researchers used Monte Carlo simulation, a fundamental computational tool, to run a series of random walks that together attempt to cover as many routes as possible.

"Monte Carlo algorithms are a natural solution method for radiation transport problems," said Franke. "Particles are simulated in a process that mirrors the physical process."
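Franke's point, that each simulated particle follows a process mirroring the physical one, can be illustrated with a deliberately crude radiation-transport model. This is a hypothetical toy, not the paper's method: a particle enters a one-dimensional slab of lattice sites, is absorbed with a fixed probability each step, and otherwise hops one site forward or back. Running many such random walks and tallying their fates gives a Monte Carlo estimate of transmission, reflection and absorption.

```python
import random


def transport_walk(thickness: int, absorb_prob: float, rng: random.Random) -> str:
    """Follow one particle through a 1-D slab occupying lattice sites 1..thickness.

    At each step the particle may be absorbed; otherwise it hops one site
    forward or backward with equal probability.
    """
    position = 1  # the particle has just entered the slab from the near face
    while True:
        if rng.random() < absorb_prob:
            return "absorbed"
        position += rng.choice((-1, 1))
        if position > thickness:
            return "transmitted"  # left through the far face
        if position < 1:
            return "reflected"  # scattered back out of the near face


def estimate(n_particles: int, thickness: int, absorb_prob: float, seed: int = 0) -> dict:
    """Monte Carlo estimate of the fraction of particles with each fate."""
    rng = random.Random(seed)
    tally = {"transmitted": 0, "reflected": 0, "absorbed": 0}
    for _ in range(n_particles):
        tally[transport_walk(thickness, absorb_prob, rng)] += 1
    return {fate: count / n_particles for fate, count in tally.items()}


if __name__ == "__main__":
    print(estimate(n_particles=20_000, thickness=9, absorb_prob=0.02))
```

With `absorb_prob=0.0` this reduces to the classic gambler's-ruin problem, whose exact transmission probability is `1 / (thickness + 1)`, which makes a convenient sanity check on the simulation.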

The energy of each walk was recorded as a single energy spike by an artificial neuron reading the result of each walk in turn. "This neural net is more energy efficient in sum than recording each moment of each walk, as ordinary computing must do. This partially accounts for the speed and efficiency of the neuromorphic process," said Aimone. More chips will help the process move faster using the same amount of energy, he said.

The next version of Loihi, said Sandia researcher Craig Vineyard, will increase its current chip scale from 128,000 neurons per chip to up to one million. Larger-scale systems then combine multiple chips on a board.

"Perhaps it makes sense that a technology like Loihi may find its way into a future high-performance computing platform," said Aimone. "This could help make HPC much more energy efficient, climate-friendly and just all around more affordable."

More information: J. Darby Smith et al., Neuromorphic scaling advantages for energy-efficient random walk computations, Nature Electronics (2022). DOI: 10.1038/s41928-021-00705-7