March 16, 2022 feature

Robotic telekinesis: Allowing humans to remotely operate and train robotic hands

Over the past few decades, computer scientists have developed increasingly advanced techniques to train and operate robots. Collectively, these methods could facilitate the integration of robotic systems in a growing range of real-world settings.

Researchers at Carnegie Mellon University have recently created a new system that allows users to control a robotic hand and arm remotely, simply by demonstrating the movements they want it to replicate in front of a camera. This system, introduced in a paper pre-published on arXiv, could open exciting possibilities for the teleoperation and remote training of robots completing missions in both everyday settings and environments that are inaccessible to humans.

"Prior works in this area rely either on gloves, motion markers or a calibrated multi-camera setup," Deepak Pathak, one of the researchers who developed the new system, told TechXplore. "Instead, our system works using a single uncalibrated camera. Since no calibration is needed, the user can be standing anywhere and still successfully teleoperate the robot."

The new system developed by Pathak and his colleagues is based on a model that translates the movements of human hands into a series of instructions, which then guide the movements of a robot. Remarkably, the model was trained solely on YouTube videos in which humans perform actions and interact with different objects.

"The diversity of huge passive video data helps it work across untrained users, tasks, and objects," Pathak explained. "Our system offers a low-cost and natural way to teach robots via demonstrations, as opposed to kinesthetically holding the robot or wearing a glove or motion capture suit."

By analyzing a single, two-dimensional (2D) image, the researchers' system can derive the movements that a human hand and arm are performing in a three-dimensional (3D) space. Subsequently, it re-targets a human's hand joints to match a robot's hand joints, in order to reproduce the same movements.
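To make the idea of re-targeting more concrete, here is a minimal sketch in Python. It is not the researchers' model, which is learned from video data; it is a toy geometric stand-in that assumes 3D keypoints for one finger have already been estimated. The function names (`flexion_angle`, `retarget_finger`) and the joint limits are hypothetical and chosen only for illustration. The sketch measures how much each human finger joint bends and clamps that bend to the range a robot joint can reach.

```python
import math


def flexion_angle(a, b, c):
    """Bend at joint b in radians: 0.0 when a, b and c lie on a straight line."""
    u = [b[i] - a[i] for i in range(3)]  # bone leading into the joint
    v = [c[i] - b[i] for i in range(3)]  # bone leading out of the joint
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(x * x for x in v))
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.acos(cos_theta)


def retarget_finger(keypoints, joint_limits):
    """Map a chain of 3D human finger keypoints to clamped robot joint angles.

    keypoints: list of (x, y, z) points from the finger base to the fingertip.
    joint_limits: one (low, high) pair in radians per interior joint
                  (hypothetical values, not taken from any real robot hand).
    """
    angles = []
    for i in range(1, len(keypoints) - 1):
        angle = flexion_angle(keypoints[i - 1], keypoints[i], keypoints[i + 1])
        low, high = joint_limits[i - 1]
        angles.append(max(low, min(high, angle)))
    return angles


# A finger that is straight at the first joint and bent 90 degrees at the second.
human_finger = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (2, 1, 0)]
robot_limits = [(0.0, 1.5), (0.0, 1.0)]
robot_angles = retarget_finger(human_finger, robot_limits)
```

In this example the second human joint bends by about 1.57 radians, more than the hypothetical robot joint allows, so the command is clamped to 1.0. A purely geometric mapping like this ignores differences in finger length and hand structure, which is part of why a learned approach is attractive.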

"As human and robot hands differ in shape, size and structure, this translation is under-constrained, particularly with a single image." Aravind Sivakumar and Kenny Shaw, the two students involved in the project, explained. "Our key insight is that while paired human-robot correspondence data is expensive to collect, the internet contains a massive corpus of rich and diverse human hand videos."

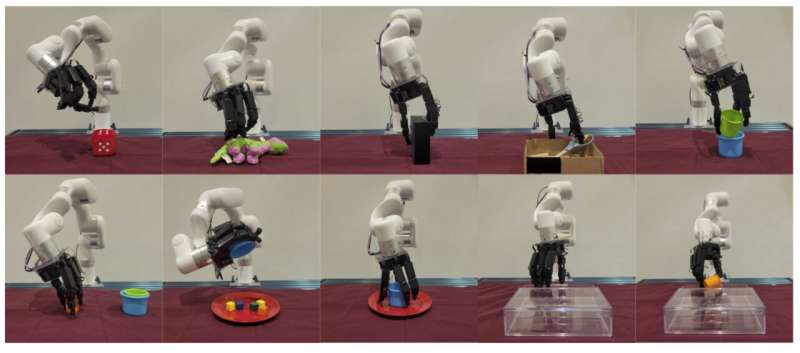

To train their system solely using passive video data, the researchers built on decades of research focusing on 3D human pose estimation and computer vision. Their initial results are highly promising, as they found that their system could allow untrained users to teleoperate a robot and remotely complete different dexterous manipulation tasks.

"Personally, the most exciting part to me is the use of diverse internet data for robotics," Pathak said. "Hopefully, our recent work is just one of many future directions in which internet videos act as a rich source of supervision for robotic control, in addition to robotic vision."

To use the system developed by this team of researchers, users simply need to stand in front of an RGB camera and perform the hand or arm movements they would like the robot to replicate. As it is very easy to use and does not require sophisticated equipment, the system could eventually be used to tackle numerous real-world problems.

"Robotic telekinesis and similar technologies will enable robot teaching in a larger variety of settings, including in households, where they will be expected to perform everyday tasks. Pathak added, "Using only a single uncalibrated camera, our system can in theory be controlled from anywhere in the world, thus it makes robot teaching more accessible to anyone. We are now collecting large-scale data using our robotic telekinesis system to teach the robot to act and adapt autonomously in the real world."

More information: Aravind Sivakumar, Kenneth Shaw, Deepak Pathak, Robotic telekinesis: learning a robotic hand imitator by watching humans on Youtube. arXiv:2202.10448v1 [cs.RO], arxiv.org/abs/2202.10448

© 2022 Science X Network