Can computers write product reviews with a human touch?

Artificial intelligence systems can be trained to write human-like product reviews that assist consumers, marketers and professional reviewers, according to a study from Dartmouth College, Dartmouth's Tuck School of Business, and Indiana University.

The research, published in the International Journal of Research in Marketing, also identifies ethical challenges raised by the use of computer-generated content.

"Review writing is challenging for humans and computers, in part, because of the overwhelming number of distinct products," said Keith Carlson, a doctoral research fellow at the Tuck School of Business. "We wanted to see how artificial intelligence can be used to help people that produce and use these reviews."

For the research, the Dartmouth team set two challenges. The first was to determine whether a machine can be taught to write original, human-quality reviews from only a small number of product features after being trained on a set of existing content. The second was to see whether machine learning algorithms can write syntheses of reviews for products that already have many reviews.

"Using artificial intelligence to write and synthesize reviews can create efficiencies on both sides of the marketplace," said Prasad Vana, assistant professor of business administration at Tuck School of Business. "The hope is that AI can benefit reviewers facing larger writing workloads and consumers that have to sort through so much content about products."

The researchers focused on wine and beer reviews because of the extensive availability of material to train the computer algorithms. Write-ups of these products also feature relatively focused vocabularies, an advantage when working with AI systems.

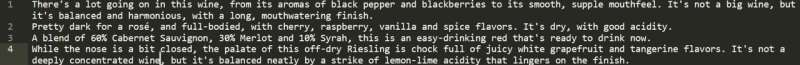

To determine whether a machine could write useful reviews from scratch, the researchers trained an algorithm on about 180,000 existing wine reviews. Metadata tags for factors such as product origin, grape variety, rating, and price were also used to train the machine-learning system.
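One way to picture how metadata tags can steer a text generator is to serialize them into a tagged prefix that the model sees before the review text. The sketch below is illustrative only: the tag format, the `WineRecord` class, and the helper functions are assumptions made for this example, not the format used in the study.

```python
from dataclasses import dataclass


@dataclass
class WineRecord:
    """Hypothetical record pairing a review with its metadata tags."""
    origin: str
    variety: str
    rating: int
    price: float
    review: str


def build_prompt(record: WineRecord) -> str:
    """Serialize the metadata into a tagged prefix (the format is an assumption)."""
    return (
        f"<origin> {record.origin} "
        f"<variety> {record.variety} "
        f"<rating> {record.rating} "
        f"<price> {record.price:.2f} "
        f"<review>"
    )


def build_training_example(record: WineRecord) -> str:
    """Concatenate the metadata prefix and the human-written review."""
    return f"{build_prompt(record)} {record.review}"


# Made-up example record, not taken from the study's dataset.
example = WineRecord(
    origin="Napa Valley",
    variety="Cabernet Sauvignon",
    rating=91,
    price=45.0,
    review="Ripe blackberry and cedar with firm tannins.",
)
print(build_training_example(example))
```

Under this assumed setup, a trained model would be given only the prefix from `build_prompt` and asked to continue it with a new review.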

When comparing the machine-generated reviews against human reviews for the same wines, the research team found agreement between the two versions. The results remained consistent even as the team challenged the algorithms by changing the amount of input data that was available for reference.

The machine-written material was then assessed by non-expert study participants to test whether they could tell if a review was written by a human or a machine. According to the research paper, the participants were unable to distinguish between the human and AI-generated reviews with any statistical significance. Furthermore, their intent to purchase a wine was similar whether they read the human-written or the machine-generated review of it.
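To make "statistical significance" concrete, one common check is whether participants' guesses beat a coin flip. The sketch below runs an exact two-sided binomial test against 50 percent chance. The article does not say which test the paper used, so the choice of test is an assumption, and the counts are made up for illustration.

```python
from math import comb


def binomial_two_sided_p(successes: int, trials: int, p: float = 0.5) -> float:
    """Exact two-sided binomial p-value.

    Sums the probabilities of every outcome that is no more likely than
    the observed one under the null hypothesis of success rate `p`.
    """
    def pmf(k: int) -> float:
        return comb(trials, k) * p**k * (1 - p) ** (trials - k)

    observed = pmf(successes)
    # The small tolerance guards against floating-point ties.
    total = sum(
        pmf(k) for k in range(trials + 1) if pmf(k) <= observed * (1 + 1e-9)
    )
    return min(1.0, total)


# Made-up counts for illustration only: 104 correct guesses out of 200.
p_value = binomial_two_sided_p(successes=104, trials=200)
print(f"p-value: {p_value:.3f}")
```

A p-value well above a conventional threshold such as 0.05 would mean the guesses are statistically indistinguishable from chance.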

Having found that artificial intelligence can write credible wine reviews, the research team turned to beer reviews to determine the effectiveness of using AI to write "review syntheses." Rather than being trained to write new reviews, the algorithm was tasked with aggregating elements from existing reviews of the same product. This tested AI's ability to identify and provide limited but relevant information about products based on a large volume of varying opinions.

"Writing an original review tests the computer's expressive ability based on a relatively narrow set of data. Writing a synthesis review is a related but distinct task where the system is expected to produce a review that captures some of the key ideas present in an existing set of reviews for a product," said Carlson, who conducted the research while a Ph.D. candidate in computer science at Dartmouth.

To test the algorithm's ability to write review syntheses, researchers trained it on 143,000 existing reviews of over 14,000 beers. As with the wine dataset, the text of each review was paired with metadata including the product name, alcohol content, style, and scores given by the original reviewers.

As with the wine reviews, the researchers used independent study participants to judge whether the machine-written syntheses captured and summarized the opinions of numerous reviews in a useful, human-like manner.

According to the paper, the model was successful at taking the reviews of a product as input and generating a synthesis review for that product as output.
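The input side of that task can be sketched as grouping all reviews of one product and joining them into a single sequence for the model. The function names, the `<sep>` marker, and the sample reviews below are hypothetical, chosen for illustration rather than taken from the paper.

```python
from collections import defaultdict


def group_reviews_by_product(reviews):
    """Map each product name to the list of review texts written about it."""
    grouped = defaultdict(list)
    for product, text in reviews:
        grouped[product].append(text)
    return dict(grouped)


def build_synthesis_input(product, texts, separator=" <sep> "):
    """Join all reviews of one product into a single input string.

    The tag names and the separator are assumptions for this sketch.
    """
    return f"<product> {product} <reviews> " + separator.join(texts)


# Made-up reviews for illustration; not from the study's dataset.
reviews = [
    ("Example Stout", "Roasty with a hint of coffee."),
    ("Example IPA", "Citrus hops up front, dry finish."),
    ("Example Stout", "Thick body, chocolate notes."),
]

grouped = group_reviews_by_product(reviews)
stout_input = build_synthesis_input("Example Stout", grouped["Example Stout"])
print(stout_input)
```

A synthesis model would then take a string like `stout_input` and produce one short review that reflects the opinions it contains.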

"Our modeling framework could be useful in any situation where detailed attributes of a product are available and a written summary of the product is required," said Vana. "It's interesting to imagine how this could benefit restaurants that cannot afford sommeliers or independent sellers on online platforms who may sell hundreds of products."

Both challenges used a deep learning neural network based on the transformer architecture to ingest, process, and output review language.
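For readers unfamiliar with transformers, their core operation is scaled dot-product attention, in which each output is a weighted average of value vectors and the weights come from how well a query matches each key. The pure-Python sketch below shows that single operation on toy vectors. It is a generic illustration, not the study's model, and it omits learned projections, multiple heads, and everything else a full transformer contains.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors, one dimension at a time.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Toy vectors: both keys are identical, so the weights are uniform
# and the output is the plain average of the two value vectors.
result = attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 1.0], [1.0, 1.0]],
    values=[[0.0, 2.0], [4.0, 6.0]],
)
print(result)
```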

According to the research team, the computer systems are not intended to replace professional writers and marketers, but rather to assist them in their work. A machine-written review, for instance, could serve as a time-saving first draft of a review that a human reviewer could then revise.

The research can also help consumers. Synthesis reviews, like those of beers in the study, could be extended to the wide range of products and services in online marketplaces to assist people who have limited time to read through many product reviews.

In addition to the benefits of machine-written reviews, the research team highlights some of the ethical challenges presented by using computer algorithms to influence human consumer behavior.

Noting that marketers could gain greater acceptance of machine-generated reviews by falsely attributing them to humans, the team advocates for transparency when computer-generated reviews are offered.

"As with other technology, we have to be cautious about how this advancement is used," said Carlson. "If used responsibly, AI-generated reviews can be both a productivity tool and can support the availability of useful consumer information."

More information: Keith Carlson et al., Complementing human effort in online reviews: A deep learning approach to automatic content generation and review synthesis, International Journal of Research in Marketing (2022). DOI: 10.1016/j.ijresmar.2022.02.004