July 11, 2022 feature

A new approach to enhance multi-fingered robot hand manipulation

In recent years, roboticists have developed increasingly advanced robotic systems, many of which are equipped with multi-fingered robotic hands. To complete everyday tasks in homes and public settings, robots must be able to use these "hands" to grasp and manipulate objects efficiently.

Enabling robots to perform dexterous multi-fingered manipulation, however, has so far proved challenging, primarily because this advanced skill requires adapting to the shape, weight, and configuration of each object.

Researchers at Universität Hamburg have recently introduced a new approach for teaching robots to grasp and manipulate objects with a multi-fingered robotic hand. The approach, presented in IEEE Transactions on Neural Networks and Learning Systems, allows a robotic hand to learn from humans through teleoperation and to adapt its manipulation strategies based on human hand postures and on data gathered while interacting with the environment.

"The original idea behind this research was to develop a teleoperation system that can transfer human hand manipulation skills to a multifingered robot hand, so that a human user can teach a robot hand to perform tasks online," Dr. Chao Zeng, one of the researchers who carried out the study, told TechXplore. "There are two basic objectives of our work. Firstly, unlike other state-of-the-art methods, we do not want to wear a glove with optical markers on it."

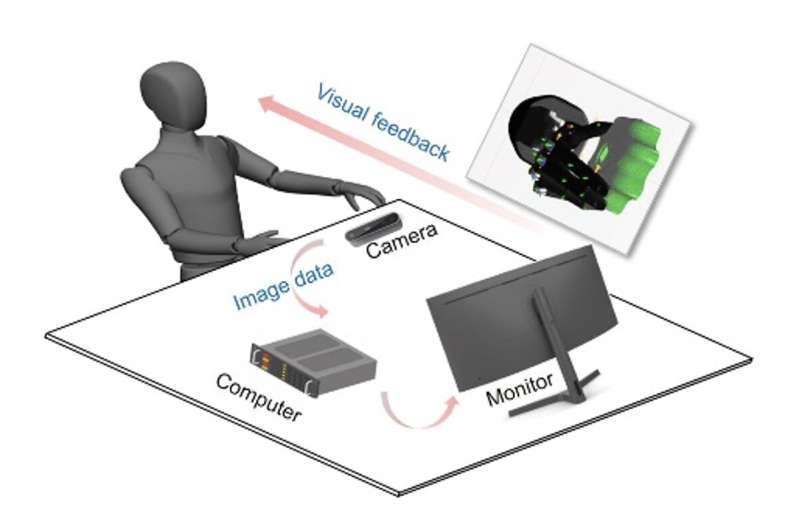

Zeng and his colleagues wanted their robot to acquire dexterous manipulation skills by watching human demonstrations. Rather than requiring the users who train the robot to wear gloves with optical markers, as in previous studies, they wanted users to be able to move their fingers freely, without any physical restrictions.

To do this, they used cameras to capture images of the user's hand postures. Although this proved challenging, they ultimately achieved promising results.

"Our second objective was to use the robotic hand to achieve compliant behaviors, like we humans do, so that it would be able to deal with physical contact-rich interaction tasks with expected dexterity," Zeng explained.

In previous work, the researchers found that controlling the force with which a robot grasps or holds objects can yield more compliant manipulation skills. Such skills are particularly important in tasks that involve physical interaction with objects, such as cutting, sawing, or inserting one object into another.

"In this research, we also wanted to adopt force control on the robot hand," Zeng said. "However, directly training a deep neural network (DNN) to generate the desired force control commands for the robot at run time is challenging. To address this problem, we take a two-step approach."

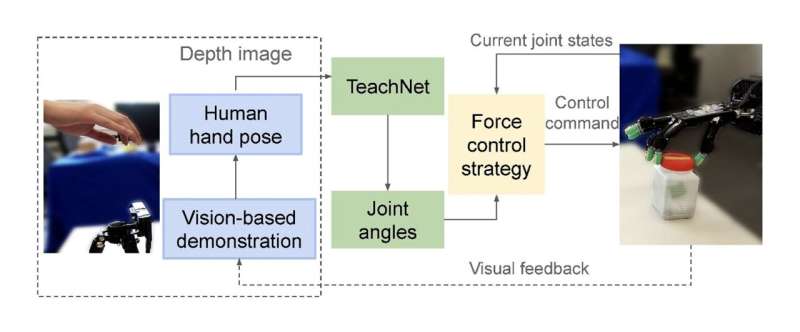

In the first step of their approach, Zeng and his colleagues captured the user's hand postures and mapped them onto the robot's joint angles using a DNN. The model was trained on data collected in simulation; once trained, it could analyze images of a user's hand and produce matching joint angles for the robot hand.
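The article does not describe the network itself, so the sketch below only illustrates the shape of this first step: fit a model on paired samples of (human-hand observation, robot joint angles), such as a simulator can generate, then turn a new observation into joint-angle references clamped to the hand's joint limits. Two assumptions are loud here: the camera image is taken to have already been reduced to a numeric feature vector (hypothetical), and a linear least-squares regressor stands in for the authors' DNN. The names `fit_joint_mapper` and `predict` are illustrative, not from the paper.

```python
"""Hypothetical sketch of the posture-to-joint-angle mapping step.

Assumptions (not from the paper): images are pre-reduced to feature
vectors, and linear least squares stands in for the authors' DNN.
"""
from typing import List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def solve(a: Matrix, b: Vector) -> Vector:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_joint_mapper(features: Sequence[Vector], joints: Sequence[Vector],
                     ridge: float = 1e-9) -> Matrix:
    """Fit one weight vector (plus bias) per robot joint via ridge-regularized normal equations."""
    xs = [list(f) + [1.0] for f in features]  # append a constant bias input
    d = len(xs[0])
    # Gram matrix X^T X + ridge * I, shared by every joint's regression
    gram = [[sum(x[i] * x[j] for x in xs) + (ridge if i == j else 0.0)
             for j in range(d)] for i in range(d)]
    weights = []
    for k in range(len(joints[0])):
        rhs = [sum(x[i] * y[k] for x, y in zip(xs, joints)) for i in range(d)]
        weights.append(solve(gram, rhs))
    return weights


def predict(weights: Matrix, feature: Vector, lower: Vector, upper: Vector) -> Vector:
    """Predict joint angles for one observation and clamp them to the joint limits."""
    x = list(feature) + [1.0]
    raw = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in weights]
    return [min(max(q, lo), hi) for q, lo, hi in zip(raw, lower, upper)]


if __name__ == "__main__":
    # Synthetic "simulation" data: 3 hand features -> 2 robot joints (made up).
    feats = [[0.1 * a, 0.1 * b, 0.1 * c] for a in range(3) for b in range(3) for c in range(3)]
    joints = [[0.5 * f[0] + 0.2 * f[1] + 0.1, -0.3 * f[2] + 0.4 * f[0]] for f in feats]
    w = fit_joint_mapper(feats, joints)
    print(predict(w, [0.15, 0.05, 0.2], [-1.0, -1.0], [1.0, 1.0]))
```

In a real system the features would come from camera images and the regressor would be a deep network; the clamping step matters because a learned mapping can propose angles the robot's joints cannot reach.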

"As a second step, we designed a force control strategy that can predict the desired force commands at each time step given the current reference angles," Zeng said. "Our approach's two components can be seamlessly integrated into the teleoperation system, to improve the compliance of the robotic hand, as we had set out to do."
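The article does not give the control law, so the sketch below uses a standard joint-space impedance (spring-damper) law with torque saturation as a stand-in for this second step: at each time step it turns the current reference angle and the measured joint state into a torque command. The paper's controller is adaptive and is not reproduced here; the single-joint model, the gains, the inertia, and the rigid-wall contact are all illustrative assumptions.

```python
"""Hypothetical single-joint sketch: reference angle -> torque command.

A fixed-gain impedance law stands in for the paper's adaptive force
controller; all numbers are illustrative assumptions.
"""
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class JointImpedance:
    stiffness: float   # N*m/rad, virtual spring pulling toward the reference
    damping: float     # N*m*s/rad, virtual damper opposing joint velocity
    max_torque: float  # N*m, actuator saturation

    def command(self, q_ref: float, q: float, dq: float) -> float:
        """Torque command for one time step, clipped to the actuator limit."""
        tau = self.stiffness * (q_ref - q) - self.damping * dq
        return max(-self.max_torque, min(self.max_torque, tau))


def simulate(ctrl: JointImpedance, q_ref: float, steps: int = 3000, dt: float = 0.001,
             inertia: float = 0.01, wall: Optional[float] = None) -> Tuple[float, float]:
    """Integrate one joint with semi-implicit Euler; return final angle and last torque.

    If `wall` is set, a rigid object stops the finger at that angle.
    """
    q, dq, tau = 0.0, 0.0, 0.0
    for _ in range(steps):
        tau = ctrl.command(q_ref, q, dq)
        dq += tau / inertia * dt
        q += dq * dt
        if wall is not None and q > wall:
            q, dq = wall, 0.0  # contact: the object blocks further motion
    return q, tau


if __name__ == "__main__":
    stiff = JointImpedance(stiffness=2.0, damping=0.2, max_torque=0.5)
    soft = JointImpedance(stiffness=1.0, damping=0.2, max_torque=0.5)
    print(simulate(stiff, q_ref=0.5))            # free motion toward the reference
    print(simulate(stiff, q_ref=0.5, wall=0.3))  # pressing on an object
    print(simulate(soft, q_ref=0.5, wall=0.3))   # gentler contact with lower stiffness
```

The design point is compliance: in free space the joint tracks the reference, while on contact the steady torque settles at stiffness × (reference − contact angle), so the gains set how hard the finger presses instead of the position error growing unchecked.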

The researchers evaluated their approach in a series of tests, both in simulation and in real-world settings, using the Shadow hand, a robotic hand that resembles a human hand in both size and shape. In these tests, their approach significantly outperformed a widely used method for compliant robot manipulation, producing more effective manipulation strategies.

"The system we proposed can be used for robot hand teleoperation relying only on vision data, and it can work in both simulation and real-world tasks," Zeng said. "Our work is an interesting attempt to integrate high-level learning and low-level control for robot manipulation. Although this integration looks somewhat straightforward, it can indeed improve the robot's compliant manipulation ability."

In the future, the team's approach could help improve the manipulation skills of both existing and newly developed humanoid robots. It could also help close the gap between deep learning and control-based approaches, combining the advantages of both to expand robots' capabilities.

"Our current teleoperation system is not perfect, and several aspects could be improved," Zeng added. "For example, it lacks immersion during teleoperation, and VR/AR might be used to improve the human user experience. In our next studies, we plan to explore these possibilities and train a better NN model that can generalize across human hands of different sizes. We are also considering the possibility of tracking the robot's arm to realize robot arm-hand teleoperation for compliant manipulation."

More information: Chao Zeng et al, Multifingered Robot Hand Compliant Manipulation Based on Vision-Based Demonstration and Adaptive Force Control, IEEE Transactions on Neural Networks and Learning Systems (2022). DOI: 10.1109/TNNLS.2022.3184258

© 2022 Science X Network