January 11, 2023 feature


A deep belief neural network based on silicon memristive synapses

While artificial intelligence (AI) models are becoming increasingly advanced, training and running these models on conventional computer hardware consumes a great deal of energy. Engineers worldwide have thus been trying to create alternative, brain-inspired hardware that could better support the high computational load of AI systems.

Researchers at Technion–Israel Institute of Technology and the Peng Cheng Laboratory have recently created a new neuromorphic computing system supporting deep belief neural networks (DBNs), a class of generative, graphical deep learning models. This system, outlined in Nature Electronics, is built on silicon memristors, energy-efficient devices that can both store and process information.

Memristors are electrical components that can switch or regulate the flow of electrical current in a circuit while also remembering the charge that has passed through them. Because their capabilities and structure resemble those of synapses in the human brain more closely than conventional memory and processing units do, they could be better suited for running AI models.

"We, as part of a large scientific community, have been working on neuromorphic computing for quite some time now," Shahar Kvatinsky, one of the researchers who carried out the study, told TechXplore. "Usually, memristors are used to perform analog computations. It is known that there are two main limitations in the neuromorphic field—one is the memristive technology that is still not widely available. The second is the high cost of converters that are required to convert the analog computation to the digital data and vice versa."
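To make the converter problem concrete, here is a minimal sketch of an idealized memristor crossbar performing an analog multiply-accumulate: each device passes a current V·G (Ohm's law), and the currents on each column add up (Kirchhoff's current law). The voltages, conductances and the 4-bit uniform `adc` function are hypothetical values chosen for illustration, and the model ignores wire resistance, device nonlinearity and noise; it is not the authors' circuit.

```python
def crossbar_currents(voltages, conductances):
    """Ideal crossbar read: column current I_j = sum_i V_i * G_ij.

    voltages[i] is the read voltage on row i (volts); conductances[i][j] is the
    conductance of the device at row i, column j (siemens). Wire resistance,
    device nonlinearity and noise are ignored.
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for v, row in zip(voltages, conductances):
        for j, g in enumerate(row):
            # Ohm's law per device; Kirchhoff's current law sums each column.
            currents[j] += v * g
    return currents


def adc(current, full_scale, bits):
    """Hypothetical uniform ADC: map a current in [0, full_scale] to an integer code."""
    levels = 2 ** bits - 1
    clipped = min(max(current, 0.0), full_scale)
    return round(clipped / full_scale * levels)


if __name__ == "__main__":
    V = [0.2, 0.0, 0.2]                               # analog inputs as voltages
    G = [[1e-6, 5e-6], [2e-6, 2e-6], [3e-6, 1e-6]]    # weights as conductances
    I = crossbar_currents(V, G)                       # analog outputs as currents
    codes = [adc(i, full_scale=2e-6, bits=4) for i in I]
    print(I, codes)
```

In a real analog design, every output column needs a multi-bit conversion like `adc`, and every analog input needs the reverse (a DAC); this is the converter cost Kvatinsky describes.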

When developing their neuromorphic computing system, Kvatinsky and his colleagues set out to overcome these two crucial limitations of memristor-based systems. Because memristors are not widely available, they instead used a commercially available Flash technology developed by Tower Semiconductor, engineering it to behave like a memristor. In addition, they specifically tested their system with a newly designed DBN, because this particular model does not require data conversions (i.e., its input and output data are binary and therefore inherently digital).
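With binary data, the converters reduce to almost nothing: an input bit either drives a row at a fixed read voltage or leaves it at 0 V, and each output needs only a 1-bit decision. The sketch below assumes a hypothetical read voltage `V_READ` and a hypothetical comparator threshold; it illustrates the principle rather than the authors' readout circuitry. In an RBM, the fixed threshold would typically be replaced by stochastic sampling of each binary unit.

```python
V_READ = 0.1  # hypothetical read voltage (V) for rows whose input bit is 1


def binary_layer(bits, conductances, threshold_current):
    """Binary in, binary out: no DAC on the inputs and only a 1-bit decision per output.

    Each input bit selects whether its row is driven at V_READ (bit 1) or held at
    0 V (bit 0). Each column's summed current is then compared with
    threshold_current, as a comparator would, to produce an output bit.
    """
    n_cols = len(conductances[0])
    outputs = []
    for j in range(n_cols):
        current = sum(V_READ * row[j] for b, row in zip(bits, conductances) if b)
        outputs.append(1 if current > threshold_current else 0)
    return outputs


if __name__ == "__main__":
    G = [[1e-6, 5e-6], [2e-6, 2e-6], [3e-6, 1e-6]]  # hypothetical conductances (S)
    print(binary_layer([1, 0, 1], G, threshold_current=5e-7))  # [0, 1]
```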

"DBNs are an old machine learning theoretical concept," Kvatinsky explained. "Our idea was to use binary (i.e., either with a value of 0 or 1) neurons (input/output). There are several unique properties (compared to deep neural networks), including that the training of such a network relies on calculating the accumulated desired model update and updating it only when reaching a certain threshold."
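The accumulate-then-update rule Kvatinsky mentions can be sketched roughly as follows. Each desired weight change is added to a per-weight accumulator, and a weight is changed only once its accumulator reaches a threshold, with the remainder carried over. The class name `ThresholdedUpdater`, the threshold value, and the choice to commit exactly one threshold-sized step per crossing (for example, one programming pulse) are assumptions for illustration, not details from the study.

```python
class ThresholdedUpdater:
    """Accumulate desired weight changes; commit a change only past a threshold.

    Hypothetical sketch: committing exactly one threshold-sized step per crossing
    (e.g. one programming pulse) is an illustrative assumption.
    """

    def __init__(self, n_rows, n_cols, threshold):
        self.threshold = threshold
        self.acc = [[0.0] * n_cols for _ in range(n_rows)]

    def step(self, weights, delta):
        """Add delta to the accumulators and update weights in place where due.

        Returns how many weights were changed (i.e. how many devices would be programmed).
        """
        n_updates = 0
        for i, row in enumerate(delta):
            for j, d in enumerate(row):
                self.acc[i][j] += d
                if abs(self.acc[i][j]) >= self.threshold:
                    sign = 1.0 if self.acc[i][j] > 0 else -1.0
                    weights[i][j] += sign * self.threshold  # one fixed-size step
                    self.acc[i][j] -= sign * self.threshold  # carry the remainder
                    n_updates += 1
        return n_updates


if __name__ == "__main__":
    updater = ThresholdedUpdater(n_rows=1, n_cols=2, threshold=0.5)
    w = [[0.0, 0.0]]
    for _ in range(3):
        n = updater.step(w, [[0.3, -0.1]])
        print(n, w)
```

One plausible benefit for memristive hardware is that many small updates no longer each cost a write operation, which reduces the number of programming cycles each device sees.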

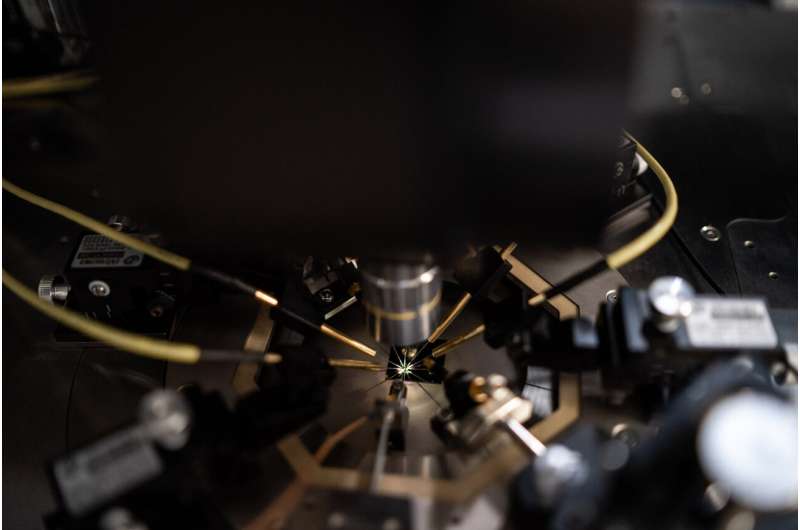

The artificial synapses created by the researchers were fabricated using commercial complementary metal-oxide-semiconductor (CMOS) processes. These memristive, silicon-based synapses have several advantageous features, including analog tunability, high endurance, long retention time, predictable degradation under repeated cycling, and moderate device-to-device variability.

Kvatinsky and his colleagues demonstrated their system by training a restricted Boltzmann machine (RBM), the building block that is stacked to form DBNs, on a pattern recognition task. This model, a 19 × 8 memristive RBM, was implemented using two 12 × 8 arrays of the memristors they engineered.
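For readers curious about what training an RBM involves, here is a software-only sketch of a binary RBM trained with one-step contrastive divergence (CD-1), the textbook learning rule for RBMs. The article does not specify the authors' exact training procedure, so this is illustrative only. Reading "19 × 8" as 19 visible and 8 hidden units is an assumption, and the toy patterns, learning rate and seed are hypothetical.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class RBM:
    """Binary restricted Boltzmann machine trained with one-step contrastive divergence (CD-1)."""

    def __init__(self, n_visible, n_hidden, seed=0):
        self.rng = random.Random(seed)
        # W[i][j] couples visible unit i to hidden unit j (in hardware, a conductance).
        self.W = [[self.rng.gauss(0.0, 0.01) for _ in range(n_hidden)]
                  for _ in range(n_visible)]
        self.b = [0.0] * n_visible  # visible biases
        self.c = [0.0] * n_hidden   # hidden biases

    def hidden_probs(self, v):
        """P(h_j = 1 | v) for every hidden unit j."""
        return [sigmoid(self.c[j] + sum(v[i] * self.W[i][j] for i in range(len(v))))
                for j in range(len(self.c))]

    def visible_probs(self, h):
        """P(v_i = 1 | h) for every visible unit i."""
        return [sigmoid(self.b[i] + sum(h[j] * self.W[i][j] for j in range(len(h))))
                for i in range(len(self.b))]

    def sample(self, probs):
        """Draw binary states: each unit is 1 with its given probability."""
        return [1 if self.rng.random() < p else 0 for p in probs]

    def cd1(self, v0, lr=0.1):
        """One CD-1 update from a single binary training vector v0."""
        ph0 = self.hidden_probs(v0)
        h0 = self.sample(ph0)
        v1 = self.sample(self.visible_probs(h0))  # one Gibbs step back to the visible layer
        ph1 = self.hidden_probs(v1)
        for i in range(len(v0)):
            for j in range(len(ph0)):
                self.W[i][j] += lr * (v0[i] * ph0[j] - v1[i] * ph1[j])
            self.b[i] += lr * (v0[i] - v1[i])
        for j in range(len(ph0)):
            self.c[j] += lr * (ph0[j] - ph1[j])

    def reconstruct(self, v):
        """Deterministic reconstruction: threshold mean-field probabilities at 0.5."""
        return [1 if p > 0.5 else 0 for p in self.visible_probs(self.hidden_probs(v))]


def bit_errors(rbm, patterns):
    """Total number of mismatched bits between patterns and their reconstructions."""
    return sum(a != b for p in patterns for a, b in zip(p, rbm.reconstruct(p)))


if __name__ == "__main__":
    # Three toy 19-bit patterns (hypothetical data, not the authors' dataset).
    patterns = [[1] * 6 + [0] * 13,
                [0] * 6 + [1] * 7 + [0] * 6,
                [0] * 13 + [1] * 6]
    rbm = RBM(n_visible=19, n_hidden=8)
    print("bit errors before:", bit_errors(rbm, patterns))
    for _ in range(1000):
        for p in patterns:
            rbm.cd1(p)
    print("bit errors after:", bit_errors(rbm, patterns))
```

Both layers use binary units, matching the binary-neuron design described above. In the hardware version, the weights would be stored as memristor conductances and the weighted sums computed inside the arrays; here everything runs in floating point.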

"The simplicity of DBN makes them attractive for hardware implementation," Kvatinsky said. "We showed that even though DBN are simple to implement (due to their binary nature), we can reach high accuracy (>97% accurate recognition of handwritten digits) when using Y-Flash based memristors."

The architecture introduced by this team offers a viable new way to run restricted Boltzmann machines and the DBNs built from them. In the future, it could inspire the development of similar neuromorphic systems, collectively helping to run AI systems more energy-efficiently.

"We now plan to scale up this architecture, explore additional memristive technologies and explore more neural network architectures," Kvatinsky added.

More information: Wei Wang et al., A memristive deep belief neural network based on silicon synapses, Nature Electronics (2022). DOI: 10.1038/s41928-022-00878-9

© 2023 Science X Network