Hands-free tech adds realistic sense of touch in extended reality

With an eye toward a not-so-distant future where some people spend most or all of their working hours in extended reality, researchers from Rice University, Baylor College of Medicine and Meta Reality Labs have found a hands-free way to deliver believable tactile experiences in virtual environments.

Users in virtual reality (VR) have typically needed hand-held or hand-worn devices like haptic controllers or gloves to experience the sense of touch. The new "multisensory pseudo-haptic" technology, which is described in an open-access study published online in Advanced Intelligent Systems, uses a combination of visual feedback from a VR headset and tactile sensations from a mechanical bracelet that squeezes and vibrates the wrist.

"Wearable technology designers want to deliver virtual experiences that are more realistic, and for haptics, they've largely tried to do that by recreating the forces we feel at our fingertips when we manipulate objects," said study co-author Marcia O'Malley, Rice's Thomas Michael Panos Family Professor in Mechanical Engineering. "That's why today's wearable haptic technologies are often bulky and encumber the hands."

O'Malley said that's a problem going forward because comfort will become increasingly important as people spend more time in virtual environments.

"For long-term wear, our team wanted to develop a new paradigm," said O'Malley, who directs Rice's Mechatronics and Haptic Interfaces Laboratory. "Providing believable haptic feedback at the wrist keeps the hands and fingers free, enabling 'all-day' wear, like the smart watches we are already accustomed to."

Haptics refers to the sense of touch. It includes both tactile sensations conveyed through skin and kinesthetic sensations from muscles and tendons. Our brains use kinesthetic feedback to continually sense the relative positions and movements of our bodies without conscious effort. Pseudo-haptics are haptic illusions, simulated experiences that are created by exploiting how the brain receives, processes and responds to tactile and kinesthetic input.

"Pseudo-haptics aren't new," O'Malley said. "Visual and spatial illusions have been studied and used for more than 20 years. For example, as you move your hand, the brain has a kinesthetic sense of where it should be, and if your eye sees the hand in another place, your brain automatically takes note. By intentionally creating those discrepancies, it's possible to create a haptic illusion that your brain interprets as, 'My hand has run into an object'."

"What is most interesting about pseudo-haptics is that you can create these sensations without hardware encumbering the hands," she said.

While designers of virtual environments have used pseudo-haptic illusions for years, the question driving the new research was: Can visually driven pseudo-haptic illusions be made to appear more realistic if they are reinforced with coordinated, hands-free tactile sensations at the wrist?

Evan Pezent, a former student of O'Malley's and now a research scientist at Meta Reality Labs in Redmond, Washington, worked with O'Malley and colleagues to design and conduct experiments in which pseudo-haptic visual cues were augmented with coordinated tactile sensations from Tasbi, a mechanized bracelet Meta had previously invented.

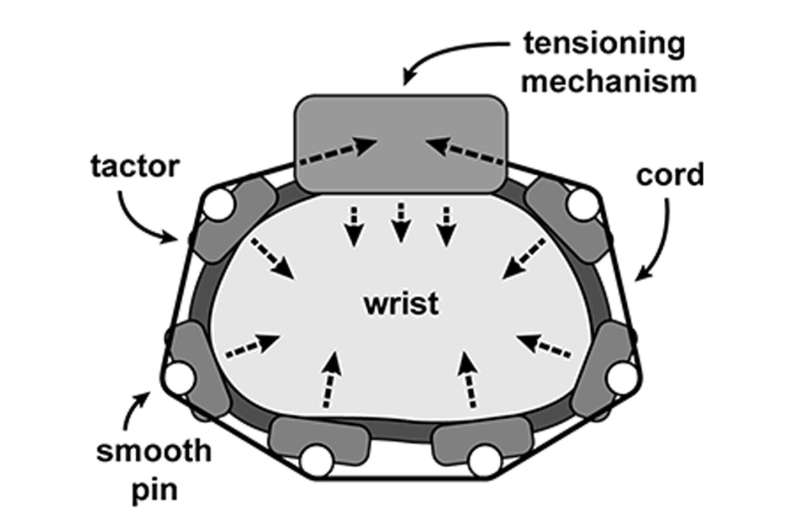

Tasbi has a motorized cord that can tighten and squeeze the wrist, as well as a half-dozen small vibrating motors—the same components that deliver silent alerts on mobile phones—which are arrayed around the top, bottom and sides of the wrist. When and how much these vibrate and when and how tightly the bracelet squeezes can be coordinated, both with one another and with a user's movements in virtual reality.

In initial experiments, O'Malley and colleagues had users press virtual buttons that were programmed to simulate varying degrees of stiffness. The research showed volunteers were able to sense varying degrees of stiffness in each of four virtual buttons. To further demonstrate the range of physical interactions the system could simulate, the team then incorporated it into nine other common types of virtual interactions, including pulling a switch, rotating a dial, and grasping and squeezing an object.
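
To make the idea of a stiffness-simulating virtual button concrete, here is a minimal Python sketch of how a visual illusion and a wrist squeeze might be coordinated. It is an illustration only and is not taken from the study: the function name, every parameter, the visual gain formula and the spring-like squeeze model are all assumptions, and it does not represent Tasbi's actual control software.

```python
from dataclasses import dataclass


@dataclass
class ButtonFeedback:
    visual_depth_m: float  # how far the on-screen button appears pressed
    squeeze_n: float       # hypothetical squeeze command for a wrist band
    vibrate: bool          # fire a short vibration "click" at full travel


def render_button(hand_depth_m: float,
                  stiffness_n_per_m: float,
                  travel_m: float = 0.02,
                  ref_stiffness_n_per_m: float = 100.0,
                  max_squeeze_n: float = 10.0) -> ButtonFeedback:
    """Illustrative pseudo-haptic button; all constants are assumed values."""
    depth = max(0.0, hand_depth_m)

    # Visual illusion (assumed model): the stiffer the button, the more the
    # displayed press lags behind the real hand movement.
    gain = ref_stiffness_n_per_m / (ref_stiffness_n_per_m + stiffness_n_per_m)
    visual_depth = min(depth * gain, travel_m)

    # Tactile reinforcement (assumed model): a spring-like squeeze that grows
    # with the real hand displacement, capped at a comfortable maximum.
    squeeze = min(stiffness_n_per_m * depth, max_squeeze_n)

    return ButtonFeedback(visual_depth, squeeze, visual_depth >= travel_m)


if __name__ == "__main__":
    # Same 1 cm hand movement against a soft and a stiff virtual button.
    print(render_button(0.01, 100.0))
    print(render_button(0.01, 300.0))
```

In this sketch, the same hand movement against a stiffer button produces less on-screen travel and a stronger squeeze, which is the kind of coordinated visual and tactile discrepancy the researchers describe.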

"Keeping the hands free while combining haptic feedback at the wrist with visual pseudo-haptics is an exciting new approach to designing compelling user experiences in VR," O'Malley said. "Here we explored user perception of object stiffness, but Evan has demonstrated a wide range of haptic experiences that we can achieve with this approach, including bimanual interactions like shooting a bow and arrow, or perceiving an object's mass and inertia."

Study co-authors include Alix Macklin of Rice, Jeffrey Yau of Baylor and Nicholas Colonnese of Meta.

More information: Evan Pezent et al, Multisensory Pseudo‐Haptics for Rendering Manual Interactions with Virtual Objects, Advanced Intelligent Systems (2023). DOI: 10.1002/aisy.202200303