Wearable in-sensor reservoir computing for multitask learning via material-algorithm co-design strategy

The human retina not only senses light signals but also processes them at the same time, capturing their rich dynamics and thereby accelerating task-dependent learning in the downstream visual cortex. This synergy between the retina and the visual cortex has inspired in-sensor multi-task learning.

However, traditional silicon vision chips suffer from large time and energy overheads, caused by massive, frequent data shuttling and sequential analog-to-digital conversions among their separate sensing, processing and storage units. The slowdown of Moore's law further exacerbates this limitation. Pairing a material with an algorithm, by combining an artificial retina with reservoir computing (RC), is therefore significant for building sensing-computing systems with ultra-low energy overheads and ultra-fast computing speed.

In a study published in Nature Communications, the research group led by Prof. Huang Weiguo from the Fujian Institute of Research on the Structure of Matter of the Chinese Academy of Sciences achieved wearable in-sensor reservoir computing for multi-task learning via a material-algorithm co-design strategy.

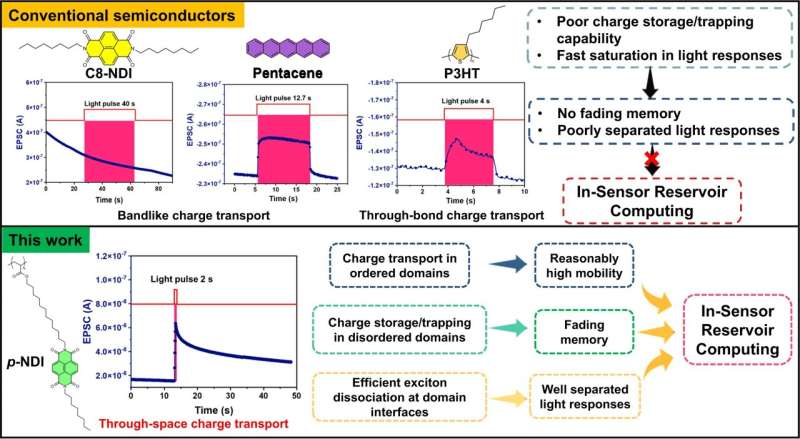

Following this co-design strategy, the researchers designed and synthesized a light-responsive semiconducting polymer (p-NDI) with efficient exciton dissociation and through-space charge-transport characteristics, and used it to construct an in-sensor RC system for multi-task pattern classification.

They found that the p-NDI-based flexible phototransistors exhibit well-separated light responses, nonlinear fading memory, and the echo state property, which together enable a wearable, transistor-based dynamic in-sensor RC system.
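The three properties named above can be illustrated with a toy software model. The sketch below is not the researchers' device model or algorithm: it is a single hypothetical node whose state fades at every time step and rises by a saturating amount when a light pulse arrives. The names `DECAY`, `GAIN`, `step` and `respond`, and the parameter values, are assumptions chosen for illustration only. The code checks that all 16 possible 4-pulse sequences end in distinct states (separated responses) and that two different starting states converge under the same input (echo state property). The trained linear readout that completes an RC system is omitted.

```python
from itertools import product

# Hypothetical toy parameters, NOT measured p-NDI device values.
DECAY = 0.4  # fraction of the state that survives one time step (fading memory)
GAIN = 0.5   # fraction of the remaining headroom gained per light pulse


def step(state: float, pulse: int) -> float:
    """Advance the toy node by one time step."""
    faded = DECAY * state
    if pulse:
        # Saturating update: a pulse adds less when the state is already high.
        faded += GAIN * (1.0 - faded)
    return faded


def respond(pulses, state: float = 0.0) -> float:
    """Return the final state after a sequence of 0/1 light pulses."""
    for pulse in pulses:
        state = step(state, pulse)
    return state


# Separation: every 4-pulse sequence should map to its own final state.
responses = {bits: respond(bits) for bits in product((0, 1), repeat=4)}
distinct_states = len({round(value, 9) for value in responses.values()})

# Echo state property: the same input erases the memory of the initial state.
drive = [1, 0, 1, 1, 0] * 4
gap = abs(respond(drive, state=0.0) - respond(drive, state=1.0))

print(distinct_states)  # 16
print(gap < 1e-6)       # True
```

In this toy model the separation relies on `DECAY` being smaller than `GAIN`, so the states reached after a dark step and after a lit step never overlap. A real device would be characterized by measured photocurrents rather than by these assumed constants.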

This RC system, built entirely from organic materials, recognized handwritten letters and digits and classified various costumes with accuracies of 98.04%, 88.18% and 91.76%, respectively. Beyond 2D images, the system also classified three types of spatiotemporal dynamic gestures (left-hand waving, right-hand waving and hand clapping) with an accuracy of 98.62%.

This study not only overcomes the large time and energy overheads that bottleneck conventional sensing-computing systems, but also provides a new material-algorithm co-design strategy for wearable, affordable, and highly efficient photonic neuromorphic systems.

More information: Xiaosong Wu et al., Wearable in-sensor reservoir computing using optoelectronic polymers with through-space charge-transport characteristics for multi-task learning, Nature Communications (2023). DOI: 10.1038/s41467-023-36205-9