April 21, 2023 feature

A model to automatically identify the sentiment polarity of specific words in written texts

In recent years, computer scientists have been trying to develop effective models for sentiment analysis. These models are designed to analyze sentences or longer texts and autonomously determine their underlying emotional tone.

In addition to expressing an overall emotional tone, texts can contain individual words that are charged with positive or negative emotions, as well as neutral words. For example, if we are complaining about something, the word describing the thing in question will most likely be charged with a negative emotion.

The task of identifying the 'sentiment polarity' (i.e., the negative or positive emotional connotations) of specific words in a sentence is known as aspect-based sentiment analysis (ABSA). While this task can be intuitive and simple for humans, it can be far more challenging to tackle using computational models.

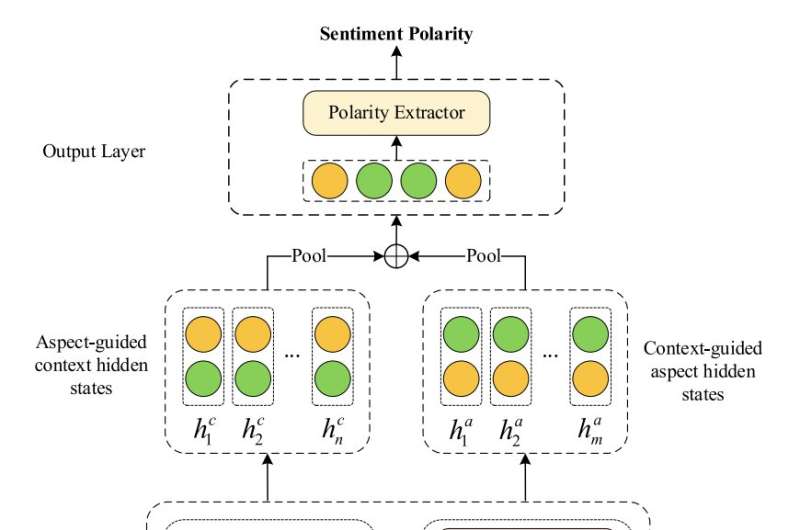

Researchers at Anhui University of Science & Technology in China have recently developed a new model designed to effectively complete ABSA tasks. This model, introduced in a paper in Connection Science, is based on a machine learning algorithm known as lightweight multilayer interactive attention network (LMIAN).

"Existing studies have recognized the value of interactive learning in ABSA and have developed various methods to precisely model aspect words and their contexts through interactive learning," Wenjun Zheng, Shunxiang Zhang and their colleagues wrote in their paper. "However, these methods mostly take a shallow interactive way to model aspect words and their contexts, which may lead to the lack of complex sentiment information. To solve this issue, we propose a Lightweight Multilayer Interactive Attention Network (LMIAN) for ABSA."

Interactive attention networks can learn to pay attention to specific words in a sentence via an attention mechanism, which allows them to link these words to the overall linguistic context in which they are found. As part of their recent study, Zheng, Zhang and their colleagues trained one of these models to specifically learn the complex emotional information hidden in a text, so that it can then detect the sentiment polarity of specific words.

"We first employ a pre-trained language model to initialize word embedding vectors," Zheng, Zhang and their colleagues wrote in their paper. "Second, an interactive computational layer is designed to build correlations between aspect words and their contexts. Such correlation degree is calculated by multiple computational layers with neural attention models. Third, we use a parameter-sharing strategy among the computational layers. This allows the model to learn complex sentiment features with lower memory costs."

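The three steps quoted above can be sketched in a few lines of Python with NumPy. This is only an illustrative sketch under stated assumptions, not the authors' implementation: random vectors stand in for the pre-trained embeddings, a bilinear score with a single matrix `W` stands in for the paper's attention layers, and the function names (`interactive_layer`, `multilayer_interaction`) and the residual updates are hypothetical choices made for this example. What it does show is the parameter-sharing idea: the same `W` is reused in every layer, so stacking more layers does not add weights.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def interactive_layer(context, aspect, W):
    """One interaction step between context tokens and aspect tokens.

    context: (n_ctx, d) array, aspect: (n_asp, d) array, W: (d, d) array.
    The bilinear scoring used here is an assumption for illustration.
    """
    # Correlation score between every context token and every aspect token.
    scores = context @ W @ aspect.T                    # (n_ctx, n_asp)
    # Each context token attends over the aspect tokens.
    ctx_update = softmax(scores, axis=1) @ aspect      # (n_ctx, d)
    # Each aspect token attends over the context tokens.
    asp_update = softmax(scores.T, axis=1) @ context   # (n_asp, d)
    # Residual updates keep the original token information.
    return context + ctx_update, aspect + asp_update


def multilayer_interaction(context, aspect, W, num_layers=3):
    """Apply the interaction step several times with the SAME weights W."""
    for _ in range(num_layers):
        context, aspect = interactive_layer(context, aspect, W)
    return context, aspect


rng = np.random.default_rng(0)
d = 8
context = rng.normal(size=(5, d))   # stand-in for pre-trained context embeddings
aspect = rng.normal(size=(2, d))    # stand-in for pre-trained aspect embeddings
W = rng.normal(size=(d, d)) * 0.1   # the single shared weight matrix

ctx_out, asp_out = multilayer_interaction(context, aspect, W, num_layers=3)
print(ctx_out.shape, asp_out.shape)
```

Because `W` is passed into every layer, the number of weights in this sketch stays at `d * d` whether `num_layers` is 1 or 10, whereas a design with separate weights per layer would grow linearly with depth.
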
The researchers trained and evaluated their model on six publicly available sentiment analysis datasets containing texts in Chinese and English. These texts are essentially online reviews of products or services, each paired with an associated object and a sentiment label (i.e., positive, negative or neutral), which gave the team the labeled examples needed to train their model.
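
To make this data format concrete, here is a small Python sketch of how such labeled examples might be represented. The class name `AspectExample`, its fields, and the restaurant sentence are assumptions made for illustration; they are not taken from the six datasets used in the study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AspectExample:
    """One hypothetical ABSA training example."""
    sentence: str   # the full review text
    aspect: str     # the object whose sentiment is being judged
    polarity: str   # "positive", "negative" or "neutral"


# Illustrative sentence: one review, two aspects, two different labels.
sentence = "The food was great but the service was slow."
examples = [
    AspectExample(sentence, "food", "positive"),
    AspectExample(sentence, "service", "negative"),
]

for ex in examples:
    print(f"{ex.aspect!r} -> {ex.polarity}")
```

The same sentence appears twice with different labels, which is what separates aspect-based sentiment analysis from assigning a single label to the whole sentence.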

In initial evaluations on these datasets, the team's LMIAN achieved highly promising results, identifying the sentiment polarity of words in sentences with accuracies over 90%, while consuming less GPU memory than other networks. In the future, its performance could be improved further, and it could potentially also be integrated with other text and sentiment analysis tools.

"Extensive experiments have proved that our LMIAN achieves a better balance between the model's performance, size, and GPU memory consumption," Zheng, Zhang and their colleagues concluded in their paper. "In the future, we will further optimize our interactive attention model to have higher performance and lower GPU memory consumption. One of our ideas is to perform deep interaction of local features with aspect words to improve model performance by reducing interference information."

More information: Wenjun Zheng et al, Lightweight multilayer interactive attention network for aspect-based sentiment analysis, Connection Science (2023). DOI: 10.1080/09540091.2023.2189119

© 2023 Science X Network