New mathematical model: Punishments and rewards teach AI agents to make the right decisions

In a new dissertation in mathematics, Björn Lindenberg shows how reinforcement learning in AI can be used to create effective strategies for autonomous decision-making in various environments. Reward systems can be developed to reinforce correct behavior, such as finding optimal pricing strategies for financial instruments or controlling robots and network traffic.

Reinforcement learning is a part of AI where a digital decision-maker, known as an agent, learns to make decisions by interacting with its environment and receiving rewards or punishments depending on how well it performs its actions.

By maximizing rewards and minimizing punishments, the agent gradually learns to perform desirable actions and improves its performance on the given task.

"My research focuses on reinforcement learning where an agent is placed in an environment. The agent observes the state of the environment at each step, similar to how we humans perceive our surroundings. This could, for example, be the chessboard position, incoming video footage, industrial data, or sensor data from a robot," says Björn Lindenberg, Ph.D. in mathematics at the Department of Mathematics at Linnaeus University.

Reinforcement learning trains AI in autonomous decision-making. The goal is to develop algorithms and models that help the agent make the best decisions. This is achieved through learning algorithms that take into account the agent's previous experiences and improve its performance over time.

There are many applications for reinforcement learning, such as game theory, robotics, financial analysis, and control of industrial processes.

"The agent makes decisions by choosing an action from a list of options, such as moving a chess piece or controlling a robot movement. These choices can then affect the environment and create a new game situation in chess or provide new sensor values for a robot," says Björn Lindenberg.
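The loop described above (observe the state, choose an action from a list, receive a reward or punishment, end up in a new state) can be sketched in a few lines of Python. This is a minimal illustration using standard tabular Q-learning, not the method developed in the dissertation. The corridor environment, the reward values, and the names `step`, `train`, and `greedy_policy` are all assumptions made up for this example.

```python
import random

N_STATES = 5        # hypothetical corridor: positions 0..4, position 4 is the goal
ACTIONS = (-1, 1)   # the agent's list of options: step left or step right


def step(state, action):
    """Hypothetical environment: apply an action, return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else -0.01   # reward at the goal, small punishment per step
    return next_state, reward, done


def train(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration (all settings are assumptions)."""
    rng = random.Random(seed)
    q = [[0.0 for _ in ACTIONS] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                # Explore: try a random action.
                a = rng.randrange(len(ACTIONS))
            else:
                # Exploit: pick the best-known action, breaking ties at random.
                best = max(q[state])
                a = rng.choice([i for i, v in enumerate(q[state]) if v == best])
            next_state, reward, done = step(state, ACTIONS[a])
            # Move the estimate toward the reward plus the discounted future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


def greedy_policy(q):
    """Best-known action for every non-terminal position."""
    return [
        ACTIONS[max(range(len(ACTIONS)), key=lambda i: q[s][i])]
        for s in range(N_STATES - 1)
    ]


if __name__ == "__main__":
    print(greedy_policy(train()))
```

In this toy setting the per-step punishment discourages wandering, so the learned policy should head straight for the goal. Real applications such as chess or robot control replace the table with a neural network, which is what "deep" reinforcement learning refers to.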

New mathematical model enhances reliability in the learning process

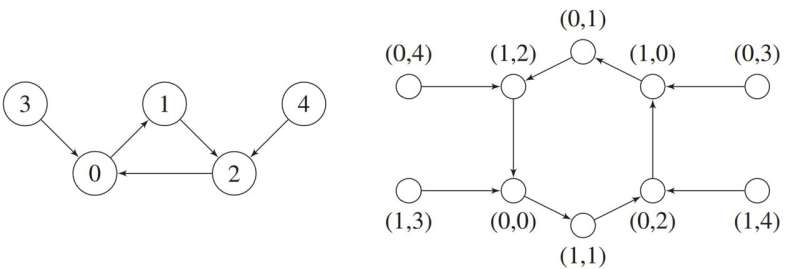

In his dissertation, Lindenberg has developed a model for deep reinforcement learning with multiple concurrent agents, which can enhance the learning process and make it more robust and effective. He has also investigated the number of iterations, i.e., repeated attempts, required for a system to become stable and perform well.
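To give a feel for what "the number of iterations required for a system to become stable" can mean, here is a small sketch. It uses textbook value iteration on a made-up five-position corridor and counts how many sweeps pass before the value estimates stop changing. The article does not describe the dissertation's own analysis, so the environment, the tolerance, and the function name `iterations_until_stable` are assumptions for illustration only.

```python
def iterations_until_stable(gamma=0.9, tol=1e-6, max_sweeps=10_000):
    """Run value iteration on a hypothetical corridor; return (sweeps, values)."""
    n = 5                      # positions 0..4, position 4 is the terminal goal
    values = [0.0] * n
    for sweep in range(1, max_sweeps + 1):
        new_values = values[:]
        for s in range(n - 1):             # the terminal position keeps value 0
            candidates = []
            for action in (-1, 1):         # step left or step right
                s2 = min(max(s + action, 0), n - 1)
                reward = 1.0 if s2 == n - 1 else -0.01
                candidates.append(reward + gamma * values[s2])
            new_values[s] = max(candidates)
        # Largest change in any position's value during this sweep.
        change = max(abs(a - b) for a, b in zip(new_values, values))
        values = new_values
        if change < tol:
            return sweep, values
    raise RuntimeError("value estimates did not stabilize")


if __name__ == "__main__":
    sweeps, values = iterations_until_stable()
    print(sweeps, [round(v, 4) for v in values])
```

The stopping rule here (largest change below a tolerance) is one common convention. How quickly such iterations settle in general depends on the discount factor and the structure of the problem.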

"Deep reinforcement learning is advancing at the same pace as other AI technologies, that is, very rapidly. This is largely due to exponentially increasing hardware capacity, meaning that computers are becoming more and more powerful, along with new insights into network architectures," Lindenberg continues.

The more complex the applications become, the more advanced the mathematics and deep learning that reinforcement learning requires. Both are needed to better understand existing problems and to discover new algorithms.

"The methods presented in the dissertation can be incorporated into a variety of decision-making AI applications that, whether we realize it or not, are becoming an increasingly prevalent part of our daily lives," Lindenberg concludes.

More information: Lindenberg, Björn, Reinforcement Learning and Dynamical Systems, Linnaeus University (2023). DOI: 10.15626/LUD.494.2023