This article has been reviewed according to Science X's editorial process and policies. Editors have highlighted the following attributes while ensuring the content's credibility: fact-checked, peer-reviewed publication, trusted source, proofread.

New framework enables human-robot interaction for broader access to deep sea scientific exploration

Scientific exploration of the deep ocean has remained largely inaccessible to most people, because at-sea oceanographic research carries demanding requirements for infrastructure, training, and physical ability.

Now, a new framework for oceanographic research lets shore-based scientists, citizen scientists, and the general public observe and control robotic sampling operations remotely and in real time.

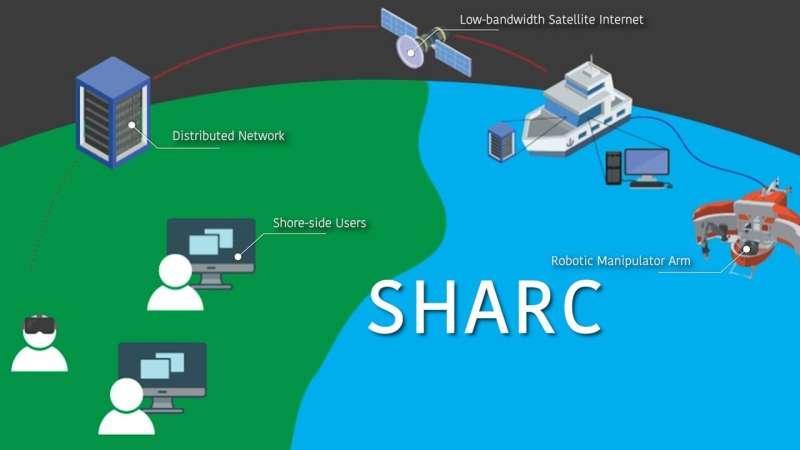

The Shared Autonomy for Remote Collaboration (SHARC) framework "enables remote participants to conduct shipboard operations and control robotic manipulators"—such as on remotely operated vehicles (ROVs)—"using only a basic internet connection and consumer-grade hardware, regardless of their prior piloting experience."

That description comes from a paper published in Science Robotics titled "Enhancing scientific exploration of the deep sea through shared autonomy in remote manipulation." The framework was developed by a research team from the Woods Hole Oceanographic Institution (WHOI), the Massachusetts Institute of Technology (MIT), and the Toyota Technological Institute at Chicago (TTIC).

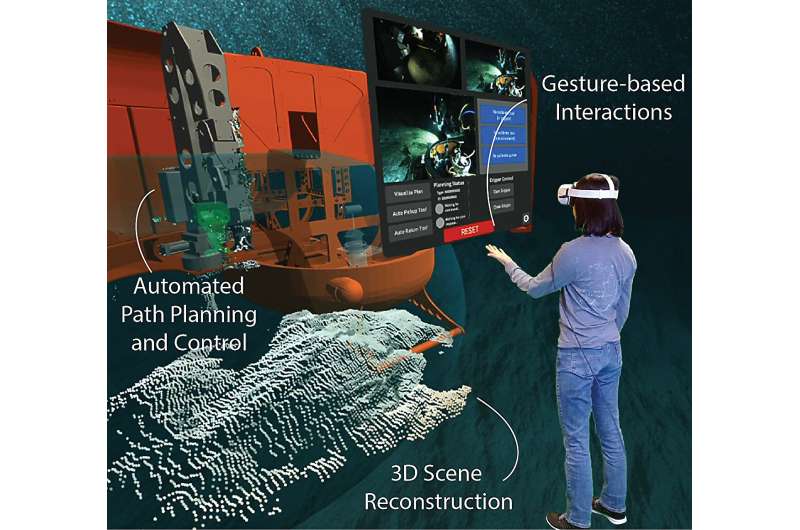

The SHARC framework enables real-time collaboration among multiple remote operators, who can issue goal-directed commands through simple speech and hand gestures while wearing virtual reality goggles that display an intuitive three-dimensional representation of the workspace.
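The article does not say how SHARC turns speech and gestures into commands. As a rough illustration only, the Python sketch below assumes that a spoken verb names the task and a hand gesture selects a 3D target point in the workspace, and that each resulting goal is tagged with the operator who issued it. The verb vocabulary, the `GoalCommand` type, and the `fuse_speech_and_gesture` function are all hypothetical, not SHARC's actual interface.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

# Hypothetical sketch; not SHARC's actual command interface.
Point3D = Tuple[float, float, float]  # target in an assumed workspace frame, meters

# Assumed vocabulary mapping spoken verbs to task-level actions.
VERB_TO_ACTION: Dict[str, str] = {
    "sample": "PUSH_CORE",
    "measure": "XRF_MEASURE",
    "move": "MOVE_TO",
}


@dataclass(frozen=True)
class GoalCommand:
    operator: str    # which remote participant issued the goal
    action: str      # task-level action, e.g. "PUSH_CORE"
    target: Point3D  # point selected by a hand gesture


def fuse_speech_and_gesture(
    operator: str, utterance: str, pointed_at: Optional[Point3D]
) -> Optional[GoalCommand]:
    """Combine a spoken verb with a gesture-selected point into one goal.

    Returns None when no target was pointed at or no known verb was spoken.
    """
    if pointed_at is None:
        return None
    for word in utterance.lower().split():
        # Strip trailing punctuation so "Sample." still matches "sample".
        action = VERB_TO_ACTION.get(word.strip(".,!?"))
        if action is not None:
            return GoalCommand(operator, action, pointed_at)
    return None
```

For example, `fuse_speech_and_gesture("chicago-1", "Sample here.", (1.2, -0.4, 0.3))` returns `GoalCommand(operator='chicago-1', action='PUSH_CORE', target=(1.2, -0.4, 0.3))`. The point of the sketch is that the operator specifies only *what* to do and *where*; how the arm gets there is left to the robot.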

Through SHARC, "we can open up the operational aspects of deep sea exploration to citizen scientists, whether they be kids in a classroom or people who can't be present on a ship because of logistical or physical requirements," said co-author Richard Camilli, a principal investigator for the project and scientist in WHOI's Applied Ocean Physics and Engineering Department. "Citizen scientists can interact with the ROV's robotic manipulator arm in a virtual world, somewhat analogous to the science fiction 'holodeck' holographic system used on Federation starships on Star Trek."

SHARC enables a form of human-robot interaction, sometimes referred to as shared autonomy, that divides responsibilities between the robot and the human operator according to their complementary strengths. The robot, for instance, can handle kinematics, motion planning, obstacle avoidance, and other low-level tasks, while human operators take responsibility for high-level scene understanding, goal selection, and task-level planning. SHARC also lets humans and the robot work in parallel rather than sequentially: operators can choose the next goal while the arm is still carrying out the current one.
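The division of labor and the parallel workflow described above can be sketched with a goal queue: operators push task-level goals and return immediately, while a separate robot thread plans and executes them in order. This is a minimal Python illustration under stated assumptions, not SHARC's implementation. `Robot.plan` is only a placeholder for the kinematics, motion planning, and obstacle avoidance the article attributes to the robot, and every name here is hypothetical.

```python
import queue
import threading
import time
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Goal:
    name: str  # task-level goal selected by a human operator


@dataclass
class Robot:
    """Hypothetical stand-in for the robot side of shared autonomy."""
    completed: List[str] = field(default_factory=list)

    def plan(self, goal: Goal) -> List[str]:
        # Placeholder: a real system would solve kinematics, plan a
        # collision-free trajectory and avoid obstacles here.
        return [f"{goal.name}:approach", f"{goal.name}:act", f"{goal.name}:retract"]

    def execute(self, waypoints: List[str], step_time: float = 0.01) -> None:
        for _ in waypoints:
            time.sleep(step_time)  # simulate the arm moving through each waypoint


def robot_worker(robot: Robot, goals: "queue.Queue[Optional[Goal]]") -> None:
    """Consume goals in arrival order until a None sentinel is received."""
    while True:
        goal = goals.get()
        if goal is None:
            break
        robot.execute(robot.plan(goal))
        robot.completed.append(goal.name)


def run_session(goal_names: List[str]) -> List[str]:
    goals: "queue.Queue[Optional[Goal]]" = queue.Queue()
    robot = Robot()
    worker = threading.Thread(target=robot_worker, args=(robot, goals))
    worker.start()
    # Operators enqueue goals without waiting for the arm to finish the
    # previous one; the worker thread handles planning and execution.
    for name in goal_names:
        goals.put(Goal(name))
    goals.put(None)  # signal that no more goals are coming
    worker.join()
    return robot.completed


if __name__ == "__main__":
    print(run_session(["core_site_A", "xrf_site_B", "core_site_C"]))
    # prints ['core_site_A', 'xrf_site_B', 'core_site_C']
```

A single worker thread keeps execution order deterministic. The essential property is that `goals.put` returns immediately, so people are free to think about the next goal while the arm is still moving.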

"We just give the robot its goal, and it finds a solution," said Camilli. "People and the robot can collaborate together, where we're not waiting for one thing to happen in order to do the next thing. While the robotic arm is executing a task, we can be focusing on the next goal."

In September 2021, at the height of the COVID-19 pandemic, scientists successfully tested SHARC. During an oceanographic expedition in the San Pedro Basin of the eastern Pacific Ocean, SHARC team members operated WHOI's Nereid Under Ice (NUI) hybrid ROV from thousands of kilometers away using SHARC's virtual reality and desktop interfaces.

The team members, who were physically located in Chicago, Boston, and Woods Hole, collaboratively collected a physical push core sample and recorded in-situ X-ray fluorescence measurements of seafloor microbial mats and sediments at water depths exceeding 1000 meters.

"This paper really highlights shared autonomy's potential to help democratize access to the deep sea," said lead author Amy Phung, a graduate student in the MIT-WHOI Joint Program in Oceanography/Applied Ocean Science and Engineering. Phung was one of the scientists operating the NUI vehicle during the 2021 test of SHARC.

"With SHARC, our shore-side team was able to collect seafloor samples from over 4000 kilometers away without specialized hardware or extensive prior training. In the future, I believe that further advancements in robotics and autonomy research can someday enable shore-side scientists, students, and enthusiasts to actively participate in and contribute to deep-ocean exploration operations as they occur, which in turn can help to foster ocean literacy among the general public," Phung added.

"Whether it is on land, air, or in the ocean, most robots that operate today do so in one of two distinctly different ways: full autonomy or full remote control by highly trained pilots, with the latter being standard for settings like underwater manipulation that involve complex interactions between robots and their environment. This paper describes a new framework that enables robots to operate in between these two extremes in a way that takes advantage of the complementary capabilities of robots and humans," said co-author Matthew Walter, associate professor at TTIC.

Walter is also currently a WHOI guest investigator and was previously a student in the MIT-WHOI Joint Program. "SHARC allows people with little to no training to perform sophisticated tasks with deep-sea robots, with pilot oversight, from the comfort of their homes and offices through a combination of speech and virtual reality, and in turn, promises to redefine how we use robots for marine science and engineering," he explained.

SHARC is also not tied to a specific kind of ROV or manipulator arm. "We can apply the same SHARC technology with totally different robotic arms and vehicles in completely different contexts," said Camilli. The SHARC framework "is flexible and hardware-agnostic."
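The article does not describe SHARC's internals. One common way to make software hardware-agnostic, assumed here purely for illustration, is to have higher-level code talk to every arm through a small abstract driver interface, with one adapter per vehicle or manipulator. `ManipulatorDriver`, the two example arms, and `move_to_home` are hypothetical names.

```python
from abc import ABC, abstractmethod
from typing import List, Sequence


class ManipulatorDriver(ABC):
    """Hypothetical hardware-abstraction layer that each arm adapter implements."""

    @property
    @abstractmethod
    def num_joints(self) -> int:
        """Number of actuated joints on this arm."""

    @abstractmethod
    def send_joint_positions(self, positions: Sequence[float]) -> None:
        """Command the arm to the given joint angles (radians)."""


class SixJointHydraulicArm(ManipulatorDriver):
    def __init__(self) -> None:
        self.sent: List[List[float]] = []  # record of commands, for inspection

    @property
    def num_joints(self) -> int:
        return 6

    def send_joint_positions(self, positions: Sequence[float]) -> None:
        # A real adapter would drive the hydraulic valves here.
        self.sent.append(list(positions))


class SevenJointElectricArm(ManipulatorDriver):
    def __init__(self) -> None:
        self.sent: List[List[float]] = []

    @property
    def num_joints(self) -> int:
        return 7

    def send_joint_positions(self, positions: Sequence[float]) -> None:
        # A real adapter would send commands to the motor controllers here.
        self.sent.append(list(positions))


def move_to_home(arm: ManipulatorDriver) -> List[float]:
    """Framework-level code: depends only on the interface, not on the hardware."""
    home = [0.0] * arm.num_joints  # hypothetical all-zero home pose
    arm.send_joint_positions(home)
    return home
```

Because `move_to_home` only touches the interface, supporting a new arm means writing one more adapter class; the framework code above it stays unchanged.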

"By using the SHARC framework for scientific exploration in the deep sea—which is a very challenging and unstructured environment—it highlights that this technology can be transferable to many different operational contexts, as well, potentially including subsea scientific infrastructure maintenance, deep space operations, nuclear decommissioning, and even unexploded ordnance remediation," Camilli added.

More information: Amy Phung et al, Enhancing scientific exploration of the deep sea through shared autonomy in remote manipulation, Science Robotics (2023). DOI: 10.1126/scirobotics.adi5227