November 27, 2023 feature

A sensing paw that could improve the ability of legged robots to move on different terrains

Legged robots that artificially replicate the body structure and movements of animals could efficiently complete missions in a wide range of environments, including various outdoor natural settings. To do so, however, these robots should be able to walk on different terrains, such as soil, sand, grass, and so on, without losing balance, getting stuck or falling over.

Researchers at the Norwegian University of Science and Technology (NTNU) and the Indian Institute of Technology Bombay recently developed a new artificial paw with sensing capabilities that could help to improve the ability of legged robots to move on a variety of terrains. This "sensorized" paw, introduced in a paper posted to the preprint server arXiv, can recognize different terrains and their properties by estimating the force applied to its surface from the ground underneath.

"Our past research activities for the DARPA Subterranean Challenge, which was ultimately won by Team CERBERUS led by Prof. Kostas Alexis, indicated the importance of robust response to challenging terrain," Tejal Barnwal, Prof. Alexis and Jørgen Anker Olsen, authors of the paper, told Tech Xplore.

"Our team participated in the competition with the legged robot ANYmal, a platform provided by our ETH Zurich partners, and this was key to our success. Understanding the limitations of the state-of-the-art we concluded that enhancing a legged robot's perception through sensorized paws could render locomotion control even more reliable and adaptive."

Past studies have consistently reported the difficulties that legged robots can experience when moving on uneven and complex terrains. For instance, they have found that difficult terrains can restrict the movements of legged robots and create occlusions, preventing the robots from effectively sensing their surrounding environment.

In recent years, roboticists and computer scientists have thus been trying to develop computational methods that can recognize different terrains and modulate the movements of legged robots accordingly, to ensure their optimal locomotion. Yet many approaches proposed so far rely on sensors that are already integrated in the robots, such as LiDAR sensors and cameras, which offer only a limited view of the surrounding environment and of the terrain the robots are walking on.

"The integration of information from sight, touch, and sound empowers humans and animals to swiftly adapt while walking or running on diverse terrains," Barnwal, Olsen and Alexis said. "This multisensory approach boosts spatial awareness, enhances balance, and facilitates rapid decision-making for safe navigation in varied environments. Similarly, providing quadrupeds with sound-based terrain recognition and pressure information on foot exertion in real-time can aid them in maintaining balance and can help them adapt their control and navigation strategies effectively in various terrain situations."

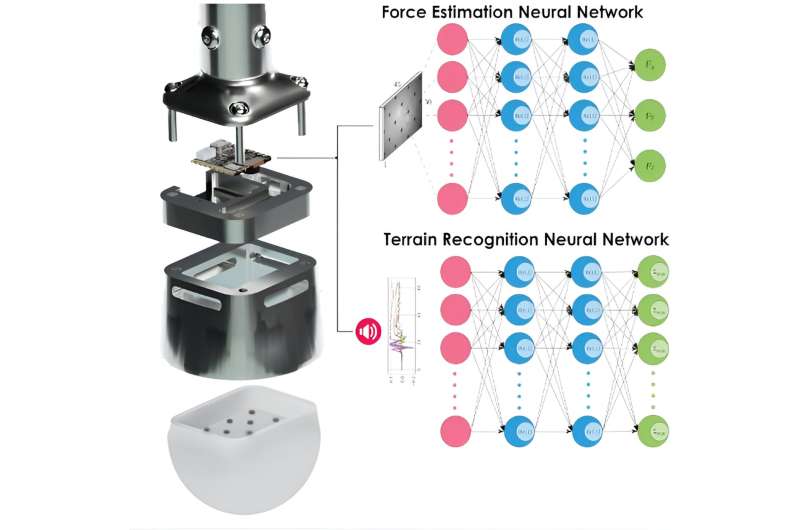

Barnwal, Olsen, Alexis and their colleague Aleksander Vangen set out to develop a new system that could gather more detailed information about the terrain that robots are moving on in real-time. They ultimately created an artificial paw or foot, dubbed TRACEPaw, which can be integrated at the bottom of a robotic leg.

"Featuring a silicone-based hemispherical point end-effector, TRACEPaw utilizes silicone deformation, an embedded micro camera, and a microphone for the real-time estimation of 3D force vectors and recognition of various terrain types, including gravel, snow, sand and more," the researchers explained.

"The paw end-effector responds to contact forces by deforming, while an embedded micro camera captures images of the deformed inner surface inside the shoe. Simultaneously, a microphone captures audio signals during the interaction between the paw and the terrain."

The paw-like system created by Barnwal, Olsen, Alexis and Vangen collects sensory data from the terrain beneath it. This data is then analyzed by supervised learning models: a vision-based model estimates the so-called contact force from the deformation of the paw's silicone surface, while an audio-based model classifies the terrain from the sound produced on contact.
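
To give a rough sense of what supervised, audio-based terrain classification involves, here is a toy sketch in Python. It is not the researchers' model, which is not described in detail in this article. The two audio features (loudness and zero-crossing rate), the nearest-centroid classifier, the synthetic `tone` clips and the "sand" and "gravel" training labels are all assumptions chosen purely for illustration.

```python
import math

def audio_features(samples):
    """Return (RMS energy, zero-crossing rate) for a list of audio samples."""
    n = len(samples)
    rms = math.sqrt(sum(s * s for s in samples) / n)
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))
    return (rms, crossings / (n - 1))

def fit_centroids(labelled_clips):
    """Supervised 'training': average the feature vectors of each terrain label."""
    sums, counts = {}, {}
    for label, samples in labelled_clips:
        rms, zcr = audio_features(samples)
        acc = sums.setdefault(label, [0.0, 0.0])
        acc[0] += rms
        acc[1] += zcr
        counts[label] = counts.get(label, 0) + 1
    return {label: (acc[0] / counts[label], acc[1] / counts[label])
            for label, acc in sums.items()}

def classify(samples, centroids):
    """Predict the label whose centroid is closest to the clip's features."""
    features = audio_features(samples)
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))

def tone(freq_hz, amplitude, n=800, rate=8000):
    """Hypothetical stand-in for a recorded footstep: a plain sine wave."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / rate + 0.1)
            for i in range(n)]

# Made-up training data: quiet, low-pitched clips for "sand",
# louder, higher-pitched clips for "gravel".
training = [
    ("sand", tone(100, 0.20)),
    ("sand", tone(120, 0.25)),
    ("gravel", tone(1500, 0.80)),
    ("gravel", tone(1800, 0.70)),
]
centroids = fit_centroids(training)

print(classify(tone(110, 0.22), centroids))
print(classify(tone(1600, 0.75), centroids))
```

A real system would work with recorded audio and a richer model, but the pattern is the same: labelled examples go in during training, and a terrain label comes out for each new footstep.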

"The system employs simple yet efficient supervised learning models for vision-based 3D force estimation on silicone deformation and audio-based soil classification, allowing for on-edge sensing, computing, and inferencing in real-time," the researchers said.

A further advantage of the sensing system is that it was built from off-the-shelf, readily available electronic components. This means that it could be fabricated easily and affordably on a large scale.

"Our sensorized paw was fabricated using off-the-shelf electronics and standard components," Barnwal, Olsen and Alexis said. "This can contribute to the system's accessibility, scalability, and ease of in-house fabrication, which could facilitate its widespread adoption and replication."

The researchers evaluated their system's performance in a series of laboratory experiments. Their initial findings were promising, suggesting that TRACEPaw could enhance the mobility and utility of legged robots by enabling them to recognize and adapt to specific terrains.

"Our study also shows that on-edge—inside the paw—computing with supervised learning models can help with rapid and dependable decision-making, enhancing the robot's adaptability and responsiveness, crucial for navigating dynamic environments and preventing incidents like slipping or stumbling in unpredictable terrains," the researchers said.

In the future, the artificial paw created by Barnwal, Olsen, Alexis and Vangen could facilitate the deployment of legged robots in real-world settings, for instance during search and rescue or exploration missions. Meanwhile, the team plans to continue improving their system by training its underlying algorithm on more data, as this could further refine its force estimation and soil classification capabilities.

"In our future work, we will aim to boost the system's environmental understanding by incorporating data from the on-board IMU, offering insights into terrain slope and force direction in the Earth's frame," the researchers added.

"We also plan to assess its performance on more complex multi-classed diverse terrains. Eventually, the potential integration of TRACEPaw with a physical legged robot will enable a comprehensive evaluation of the integrated system's performance in real-world scenarios."

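Expressing a force "in the Earth's frame" amounts to rotating the vector measured by the paw using the orientation reported by an IMU. The short Python sketch below illustrates that step under stated assumptions: the IMU orientation is given as a unit quaternion (w, x, y, z) that maps paw-frame vectors into an Earth frame whose z axis points up, and the 30-degree pitch and 10 N force are invented numbers. None of this is taken from the researchers' implementation.

```python
import math

def quat_from_axis_angle(axis, angle_rad):
    """Unit quaternion (w, x, y, z) for a rotation of angle_rad about axis."""
    x, y, z = axis
    norm = math.sqrt(x * x + y * y + z * z)
    s = math.sin(angle_rad / 2) / norm
    return (math.cos(angle_rad / 2), x * s, y * s, z * s)

def rotate(q, v):
    """Rotate vector v by unit quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    vx, vy, vz = v
    # t = 2 * (q_vec x v)
    tx = 2 * (y * vz - z * vy)
    ty = 2 * (z * vx - x * vz)
    tz = 2 * (x * vy - y * vx)
    # v' = v + w * t + (q_vec x t)
    return (vx + w * tx + (y * tz - z * ty),
            vy + w * ty + (z * tx - x * tz),
            vz + w * tz + (x * ty - y * tx))

def tilt_from_vertical_deg(force):
    """Angle between a force vector and the Earth frame's z (up) axis."""
    magnitude = math.sqrt(sum(c * c for c in force))
    return math.degrees(math.acos(force[2] / magnitude))

# Hypothetical reading: the paw is pitched 30 degrees about the y axis and
# senses a 10 N force along its own z axis.
paw_to_earth = quat_from_axis_angle((0.0, 1.0, 0.0), math.radians(30))
force_paw = (0.0, 0.0, 10.0)
force_earth = rotate(paw_to_earth, force_paw)

print([round(c, 2) for c in force_earth])
print(round(tilt_from_vertical_deg(force_earth), 1))
```

With these made-up inputs, the Earth-frame force comes out close to (5, 0, 8.66) N, tilted 30 degrees from vertical. That is the kind of information about force direction that IMU data could add.
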
More information: Aleksander Vangen et al, Terrain Recognition and Contact Force Estimation through a Sensorized Paw for Legged Robots, arXiv (2023). DOI: 10.48550/arxiv.2311.03855

© 2023 Science X Network