December 4, 2023 report

AI image generation adds to carbon footprint, research shows

So you program your thermostat to save heating costs, recycle glass and plastic, ride a bicycle to work instead of driving a car, reuse sustainable grocery bags, buy solar panels, and shower with your mate—all to do your part to conserve energy, curb waste and lower your carbon footprint.

A study released last week may just spoil your day.

Researchers at Carnegie Mellon University and Hugging Face, a machine learning community website, report that you might still contribute to climate change if you are one of the 10 million-plus users who tap into machine learning models daily.

In what they term the first systematic comparison of the costs associated with running machine-learning models, the researchers found that using an AI model to generate an image can require roughly as much energy as charging a smartphone.

"People think that AI doesn't have any environmental impacts, that it's this abstract technological entity that lives on a 'cloud,'" said team leader Alexandra Luccioni. "But every time we query an AI model, it comes with a cost to the planet, and it's important to calculate that."

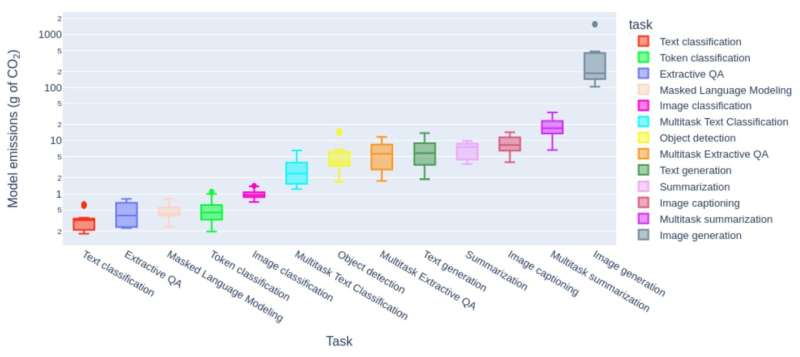

Her team tested 88 models on 30 datasets and found wide differences in energy usage between types of tasks. They measured the amount of carbon dioxide emitted per task.

The greatest amount of energy was expended by Stability AI's Stable Diffusion XL, an image generator. Nearly 1,600 grams of carbon dioxide is produced during such a session. Luccioni said that is roughly the equivalent of driving four miles in a gas-powered car.

On the lowest end of the scale, basic text generation tasks expended the equivalent of a car driving just 3/500 of a mile.
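As a rough check on these driving comparisons, the conversion can be sketched in a few lines of Python. The emission factor of about 400 grams of carbon dioxide per mile for a typical gas-powered car is an assumption made for illustration, not a figure quoted by the researchers, and the function names are hypothetical.

```python
# Assumed emission factor: roughly 400 g of CO2 per mile for a typical
# gas-powered passenger car. This value is an illustrative assumption,
# not a number taken from the study.
GRAMS_CO2_PER_MILE = 400.0


def grams_to_miles(grams_co2: float) -> float:
    """Convert grams of CO2 into the equivalent miles driven."""
    return grams_co2 / GRAMS_CO2_PER_MILE


def miles_to_grams(miles: float) -> float:
    """Convert miles driven into the equivalent grams of CO2."""
    return miles * GRAMS_CO2_PER_MILE


# Image generation figure from the article: nearly 1,600 g of CO2.
image_miles = grams_to_miles(1600)

# Text generation figure from the article: 3/500 of a mile.
text_grams = miles_to_grams(3 / 500)

print(f"1,600 g of CO2 is about {image_miles:.1f} miles of driving")
print(f"3/500 of a mile is about {text_grams:.1f} g of CO2")
```

Under that assumed factor, 1,600 grams works out to about four miles, which matches the comparison Luccioni gave, and 3/500 of a mile corresponds to only a couple of grams.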

Other categories of machine learning tasks included classifying images and text, image captioning, summarization, and answering questions.

The researchers stated that generative tasks that create new content, such as images and summaries, are more energy- and carbon-intensive than discriminative tasks, such as ranking movies.

They also observed that using multi-purpose models to undertake discriminative tasks is more energy-intensive than using task-specific models for the same tasks. This is important, the researchers said, because of recent trends in model usage.

"We find this last point to be the most compelling takeaway of our study, given the current paradigm shift away from smaller models fine-tuned for a specific task towards models that are meant to carry out a multitude of tasks at once, deployed to respond to a barrage of user queries in real-time," the report said.

According to Luccioni, "If you're doing a specific application, like searching through email … do you really need these big models that are capable of anything? I would say no."

Although the carbon dioxide emissions for such tasks may appear small, when multiplied by the millions of users who rely on AI-powered programs daily, often with multiple requests, the totals could amount to a significant impact on efforts to rein in environmental waste.
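To see how small per-request figures add up, here is a minimal Python sketch of the multiplication. The per-request emissions and the number of requests per user are hypothetical placeholders chosen only to show the arithmetic. Only the 10 million user count echoes the article.

```python
def daily_emissions_tonnes(grams_per_request: float,
                           users: int,
                           requests_per_user: int) -> float:
    """Total daily CO2 in metric tonnes for a given usage pattern."""
    total_grams = grams_per_request * users * requests_per_user
    return total_grams / 1_000_000  # 1 tonne = 1,000,000 grams


# Hypothetical inputs: 1 g of CO2 per request and 5 requests per user
# per day are placeholders, not measurements from the study.
total = daily_emissions_tonnes(grams_per_request=1.0,
                               users=10_000_000,
                               requests_per_user=5)

print(f"About {total:.0f} tonnes of CO2 per day")
```

With these placeholder inputs, a single gram per request grows to tens of tonnes per day, which is the kind of scaling the researchers point to.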

"I think that for generative AI overall, we should be conscious of where and how we use it, comparing its cost and its benefits," Luccioni said.

The findings are published on the arXiv preprint server.

More information: Alexandra Sasha Luccioni et al, Power Hungry Processing: Watts Driving the Cost of AI Deployment?, arXiv (2023). DOI: 10.48550/arxiv.2311.16863

© 2023 Science X Network