Scientists look to AI for help in peer review

Peer review is a cornerstone of scientific publishing, but could artificial intelligence help improve it? Computer scientists from the University of Bristol have reviewed how state-of-the-art tools from machine learning and artificial intelligence are already helping to automate parts of the academic peer-review process.

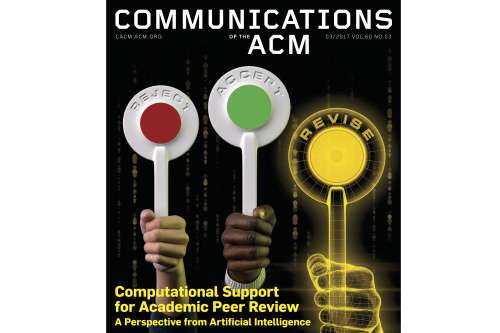

The paper, published as the cover feature in the current issue of Communications of the ACM, the Association for Computing Machinery's flagship magazine, considers the opportunities for streamlining and improving the review process further.

The invited review is by Dr Simon Price and Professor Peter Flach of Bristol's Intelligent Systems Laboratory, who developed the SubSift system for profiling papers and matching them with potential reviewers. It discusses a range of computational tools that support allocating papers to reviewers, aggregating reviewers' scores, and assembling peer review panels.
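As an illustration of what score aggregation can involve (this is not a method taken from the review), one simple approach corrects for reviewers who are systematically harsh or lenient before averaging. The sketch below assumes all reviewers score on a shared numeric scale and that each reviewer's bias is a single additive offset; the function name `calibrated_paper_scores` is hypothetical.

```python
from collections import defaultdict
from statistics import mean


def calibrated_paper_scores(reviews):
    """Aggregate review scores after removing each reviewer's overall bias.

    Hypothetical helper for illustration. reviews: iterable of
    (reviewer, paper, score) tuples. Returns a dict mapping each paper
    to the mean of its bias-adjusted scores.
    """
    reviews = list(reviews)
    global_mean = mean(score for _, _, score in reviews)

    # Collect every score each reviewer gave, across all papers.
    by_reviewer = defaultdict(list)
    for reviewer, _, score in reviews:
        by_reviewer[reviewer].append(score)

    # Assumed bias model: a reviewer's offset is how far their average
    # sits above (lenient) or below (harsh) the average over all reviews.
    offset = {r: mean(scores) - global_mean for r, scores in by_reviewer.items()}

    # Subtract each reviewer's offset from their scores, then average per paper.
    adjusted = defaultdict(list)
    for reviewer, paper, score in reviews:
        adjusted[paper].append(score - offset[reviewer])
    return {paper: mean(scores) for paper, scores in adjusted.items()}
```

For example, with reviews `("alice", "p1", 4)`, `("alice", "p2", 2)`, `("bob", "p1", 6)` and `("bob", "p3", 5)`, Alice's offset is -1.25 and Bob's is +1.25, so paper p1 receives 5.0, p2 receives 3.25 and p3 receives 3.75. This offset model is deliberately naive: a reviewer who happens to draw weaker papers will look harsh, which is one reason more careful calibration methods are worth studying.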

Papers submitted to academic journals or conferences are reviewed by experts on the topic (the authors' 'peers'), who assess whether the paper makes a significant contribution to the scientific literature and whether its claims are verifiable. Submitted papers are often considerably improved in the process, particularly at journals, where a paper may go through several rounds of review before it is published.

Peter Flach, Professor of Artificial Intelligence in the Department of Computer Science, said: "Artificial intelligence techniques are having a big impact in many areas of human endeavour. Our review paper shows that a range of tools for AI-supported peer review already exist and many more are around the corner. We suggest it is now time to rethink the peer review process to make best use of these exciting advances in technology."

Dr Simon Price, Research Fellow in the Department of Computer Science, added: "Profiling, matching and expert finding are key tasks that can be addressed using feature-based representations commonly used in machine learning, one of the fastest growing areas of AI research. Our review signposts ways in which the academic peer review process might develop and evolve, taking on forms that were previously thought impossible."

About the SubSift system

The SubSift system, short for 'submission sifting', was originally developed to support paper assignment at the 2009 ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, and was subsequently generalised into a family of web services and reusable web tools with funding from JISC.

The submission sifting tool composes several SubSift web services into a workflow driven by a wizard-like user interface, which guides the Program Chair through the paper-reviewer profiling and matching process via a series of web forms.
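To make the profiling and matching step more concrete, here is a minimal sketch; it is not SubSift's code or API. It assumes each paper and each reviewer is represented as a bag-of-words TF-IDF profile (for a reviewer, built from text such as their publication titles and abstracts) and that candidate reviewers are ranked by cosine similarity to the paper's profile. The functions `tfidf_vectors`, `cosine` and `rank_reviewers` are hypothetical names used for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def tfidf_vectors(docs):
    """Build sparse TF-IDF profiles: weight = term count * log(N / document frequency)."""
    counts = {name: Counter(tokenize(text)) for name, text in docs.items()}
    n = len(docs)
    # Document frequency: in how many profiles each term appears at least once.
    df = Counter(term for c in counts.values() for term in c)
    return {
        name: {t: tf * math.log(n / df[t]) for t, tf in c.items()}
        for name, c in counts.items()
    }


def cosine(u, v):
    """Cosine similarity of two sparse vectors; 0.0 if either is all zeros."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def rank_reviewers(papers, reviewers):
    """Return {paper: [(reviewer, similarity), ...]} sorted best match first."""
    # Tag keys so a paper and a reviewer with the same name cannot collide.
    docs = {("paper", p): text for p, text in papers.items()}
    docs.update({("reviewer", r): text for r, text in reviewers.items()})
    vec = tfidf_vectors(docs)
    return {
        p: sorted(
            ((r, cosine(vec[("paper", p)], vec[("reviewer", r)])) for r in reviewers),
            key=lambda pair: pair[1],
            reverse=True,
        )
        for p in papers
    }
```

A full assignment step would then turn these per-paper rankings into an allocation, for example by respecting each reviewer's maximum load and any declared conflicts of interest.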

An alternative user interface to SubSift that supports paper assignment for journals was also built. Known as MLj Matcher in its original incarnation, this tool has been used since 2010 to support paper assignment for the Machine Learning journal edited by Flach, as well as other journals.

More information: Simon Price et al. Computational support for academic peer review, Communications of the ACM (2017). DOI: 10.1145/2979672