January 15, 2018 weblog

Researchers in Japan show a way to decode thoughts

Making news this month is a study by researchers at the Advanced Telecommunications Research Institute International (ATR) and Kyoto University in Japan, who have built a neural network that not only reads but re-creates what is in your mind.

Specifically, "The team has created a first-of-its-kind algorithm that can interpret and accurately reproduce images seen or imagined by a person," wrote Alexandru Micu in ZME Science.

Their paper, "Deep image reconstruction from human brain activity," is on bioRxiv. The authors are Guohua Shen, Tomoyasu Horikawa, Kei Majima, and Yukiyasu Kamitani.

Vanessa Ramirez, associate editor of Singularity Hub, was one of several writers on tech-watching sites who reported on the study. The writers noted that this approach departs from earlier research, which reconstructed images from pixels and basic shapes.

"Trying to tame a computer to decode mental images isn't a new idea," said Micu. "However, all previous systems have been limited in scope and ability. Some can only handle narrow domains like facial shape, while others can only rebuild images from preprogrammed images or categories."

What is special here, Micu said, is that "their new algorithm can generate new, recognizable images from scratch."

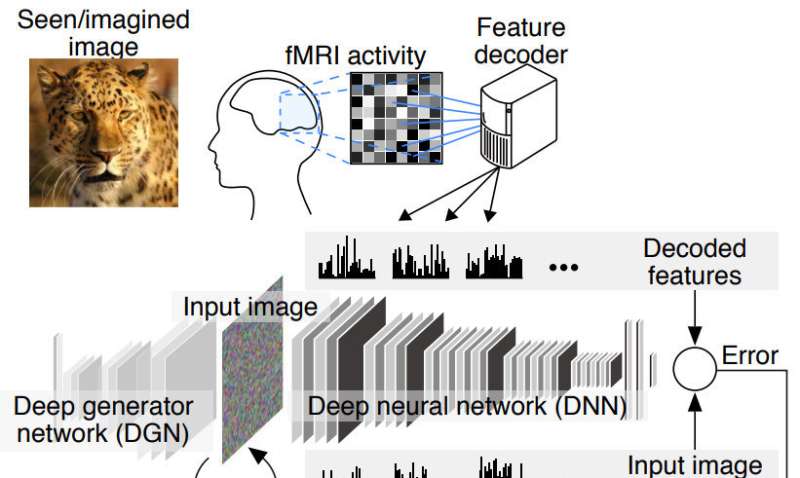

The study team has been exploring deep image reconstruction. Micu quoted the senior author of the study. "We believe that a deep neural network is good proxy for the brain's hierarchical processing," said Yukiyasu Kamitani.

"We have been studying methods to reconstruct or recreate an image a person is seeing just by looking at the person's brain activity," Kamitani told CNBC Make It.

He said that whereas previous approaches assumed an image consists of pixels or simple shapes, "it's known that our brain processes visual information hierarchically extracting different levels of features or components of different complexities."

The authors also discuss their methodology in the paper. They describe "a novel image reconstruction method" in which the image's pixel values are optimized to make its DNN features "similar to those decoded from human brain activity at multiple layers." (By DNN, they mean a deep neural network, which Micu described as having "several layers of simple processing elements.")
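The idea of optimizing pixels until their features match target features at several layers can be illustrated with a toy example. The Python sketch below is only an illustration under stated assumptions, not the authors' code: it assumes NumPy, replaces the large pretrained network with a hypothetical two-layer random network (`W1`, `W2`, `features`, `loss_and_grad`, and `reconstruct` are illustrative names), and uses the true features of a random 8x8 image as stand-ins for features decoded from the brain. Gradient descent then adjusts the pixel values until the image's features at both layers match those targets.

```python
import numpy as np

# Hypothetical stand-in for a pretrained DNN: two fixed random layers with tanh.
rng = np.random.default_rng(0)
N_PIX, N_H1, N_H2 = 64, 16, 8  # an 8x8 "image", flattened
W1 = rng.normal(scale=1 / np.sqrt(N_PIX), size=(N_H1, N_PIX))
W2 = rng.normal(scale=1 / np.sqrt(N_H1), size=(N_H2, N_H1))


def features(x):
    """Return the activations of both layers for a flattened image x."""
    h1 = np.tanh(W1 @ x)
    h2 = np.tanh(W2 @ h1)
    return [h1, h2]


def loss_and_grad(x, targets, weights):
    """Weighted sum of per-layer squared feature errors and its gradient w.r.t. the pixels."""
    h1, h2 = features(x)
    d1, d2 = h1 - targets[0], h2 - targets[1]
    loss = weights[0] * np.sum(d1 ** 2) + weights[1] * np.sum(d2 ** 2)
    # Backpropagate through tanh (derivative 1 - tanh^2) and the linear maps.
    g_a2 = 2 * weights[1] * d2 * (1 - h2 ** 2)
    g_h1 = 2 * weights[0] * d1 + W2.T @ g_a2
    g_a1 = g_h1 * (1 - h1 ** 2)
    return loss, W1.T @ g_a1


def reconstruct(targets, weights=(1.0, 1.0), steps=3000, lr=0.05):
    """Gradient descent on pixel values, starting from a uniform gray image."""
    x = np.full(N_PIX, 0.5)
    history = []
    for _ in range(steps):
        loss, grad = loss_and_grad(x, targets, weights)
        history.append(loss)
        x = x - lr * grad
    return x, history


# In the study the targets are decoded from fMRI; here we simply use the
# true features of a random image with pixel intensities in [0, 1].
x_true = rng.uniform(0.0, 1.0, size=N_PIX)
decoded = features(x_true)
x_rec, history = reconstruct(decoded)
print(f"feature loss: {history[0]:.4f} -> {history[-1]:.2e}")
```

The `weights` argument is where layers are combined; setting one entry to zero reconstructs from a single layer only. Because this toy network has fewer features than pixels, many different images match the targets equally well, so `x_rec` need not equal `x_true`.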

Three healthy subjects with normal or corrected-to-normal vision participated in the study and viewed images in three categories. Visual stimuli consisted of natural images, artificial geometric shapes, and alphabetical letters.

Mike James of I Programmer said, "it is important to realize right from the start that this isn't tapping into EEG data, i.e. it isn't taking electrical impulses from the cranium and working out what you are thinking." James said the study instead uses data from functional MRI scans, which indicate the activity of each region of the brain. "Specifically the activity of the visual cortex is fed into a neural network which is then trained to produce an output that matches the visual input that the subject is seeing."

What is functional magnetic resonance imaging (fMRI)? Micu said this is "a technique that measures blood flow in the brain and uses that to gauge neural activity."

Ramirez wrote that "Activity in the visual cortex was measured using functional magnetic resonance imaging (fMRI), which is translated into hierarchical features of a deep neural network."
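The translation step Ramirez describes, from measured voxel activity to DNN features, can be framed as a regression problem. The sketch below is a hedged illustration, not the study's decoder: it assumes NumPy, generates synthetic data from a made-up linear model (`mixing`, `simulate`, and `fit_ridge` are hypothetical names), and uses closed-form ridge regression as a swapped-in technique. It then checks how well the decoded features correlate with the true ones on held-out trials.

```python
import numpy as np

rng = np.random.default_rng(1)
N_VOX, N_FEAT = 200, 10

# Hypothetical generative model for synthetic data: each voxel's response is a
# fixed random mixture of the stimulus's DNN features plus Gaussian noise.
mixing = rng.normal(size=(N_FEAT, N_VOX))


def simulate(n_trials):
    """Return (voxel responses, true features) for n_trials synthetic stimuli."""
    feats = rng.normal(size=(n_trials, N_FEAT))
    voxels = feats @ mixing + 0.5 * rng.normal(size=(n_trials, N_VOX))
    return voxels, feats


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression weights that map voxels X to features Y."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ Y)


X_train, Y_train = simulate(1000)
X_test, Y_test = simulate(50)
W = fit_ridge(X_train, Y_train)
Y_pred = X_test @ W

# Correlation between decoded and true values of each feature on held-out trials.
corr = [np.corrcoef(Y_pred[:, k], Y_test[:, k])[0, 1] for k in range(N_FEAT)]
print(f"mean decoding correlation: {np.mean(corr):.3f}")
```

The regularization term `alpha` keeps the solution stable when voxels are numerous relative to training trials, as they can be in fMRI experiments. Real data are far noisier than this toy, so decoded features would match much less closely.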

Micu said, "This scan was performed several times. During every scan, each of the three subjects was asked to look at over 1000 pictures. These included a fish, an airplane, and simple colored shapes."

As I Programmer's James put it, "You don't get exact reproduction of the image but it is close enough to see the connection."

Micu said, "the technology brings us one step closer to systems that can read and understand what's going on in our minds."

More information: Guohua Shen et al. Deep image reconstruction from human brain activity, bioRxiv (2017). DOI: 10.1101/240317

Abstract

Machine learning-based analysis of human functional magnetic resonance imaging (fMRI) patterns has enabled the visualization of perceptual content. However, it has been limited to the reconstruction with low-level image bases or to the matching to exemplars. Recent work showed that visual cortical activity can be decoded (translated) into hierarchical features of a deep neural network (DNN) for the same input image, providing a way to make use of the information from hierarchical visual features. Here, we present a novel image reconstruction method, in which the pixel values of an image are optimized to make its DNN features similar to those decoded from human brain activity at multiple layers. We found that the generated images resembled the stimulus images (both natural images and artificial shapes) and the subjective visual content during imagery. While our model was solely trained with natural images, our method successfully generalized the reconstruction to artificial shapes, indicating that our model indeed reconstructs or generates images from brain activity, not simply matches to exemplars. A natural image prior introduced by another deep neural network effectively rendered semantically meaningful details to reconstructions by constraining reconstructed images to be similar to natural images. Furthermore, human judgment of reconstructions suggests the effectiveness of combining multiple DNN layers to enhance visual quality of generated images. The results suggest that hierarchical visual information in the brain can be effectively combined to reconstruct perceptual and subjective images.
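The optimization described in the abstract can be written compactly. In the notation below, which is ours rather than the paper's, $x$ is the image, $\phi_l(x)$ its features at DNN layer $l$, $y_l$ the features decoded from brain activity for that layer, and $L$ the set of layers used; the unweighted sum over layers is a simplifying assumption. For the natural image prior, one way to apply it, assumed here, is to search over the input $z$ of a generator network $G$ rather than over pixels directly:

$$
\hat{x} = \arg\min_{x} \sum_{l \in L} \left\lVert \phi_l(x) - y_l \right\rVert_2^2
$$

$$
\hat{z} = \arg\min_{z} \sum_{l \in L} \left\lVert \phi_l\big(G(z)\big) - y_l \right\rVert_2^2, \qquad \hat{x} = G(\hat{z})
$$

Because every candidate image in the second form is an output of $G$, the reconstruction is confined to images that a generator trained on natural images can produce.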

© 2018 Tech Xplore