May 30, 2019 feature

Researchers try to recreate human-like thinking in machines

Researchers at Oxford University have recently tried to recreate human thinking patterns in machines, using a language guided imagination (LGI) network. Their method, outlined in a paper pre-published on arXiv, could inform the development of artificial intelligence that is capable of human-like thinking, which entails a goal-directed flow of mental ideas guided by language.

Human thinking generally requires the brain to understand a particular language expression and use it to organize the flow of ideas in the mind. For instance, if a person leaving her house realizes that it's raining, she might internally say, "If I get an umbrella, I might avoid getting wet," and then decide to pick up an umbrella on the way out. As this thought goes through her mind, she automatically knows what the visual input she observes (i.e., raindrops) means and how holding an umbrella could keep her dry, perhaps even imagining the feeling of holding the umbrella or of getting wet in the rain.

Although some machines can now recognize images, process language or even sense raindrops, they have not yet acquired this unique and imaginative thinking ability. Humans can achieve such "continual thinking" because they are able to generate mental images guided by language and extract language representations from real or imagined situations.

In recent years, researchers have developed natural language processing (NLP) tools that can answer queries in a human-like way. However, these are merely probability models, and are thus unable to understand language in the same way and with the same depth as humans. This is because humans have an innate cumulative learning capacity that accompanies them as their brain develops. This "human thinking system" has been found to be associated with particular neural substrates in the brain, the most important of which is the prefrontal cortex (PFC).

The PFC is the region of the brain responsible for working memory (i.e., memory processes that take place as people are performing a given task), including the maintenance and manipulation of information in the mind. In an attempt to reproduce human-like thinking patterns in machines, Feng Qi and Wenchuan Wu, the two researchers who carried out the recent study, created an artificial neural network inspired by the human PFC.

"We proposed a language guided imagination (LGI) network to incrementally learn the meaning and usage of numerous words and syntaxes, aiming to form a human-like machine thinking process," the researchers explained in their paper.

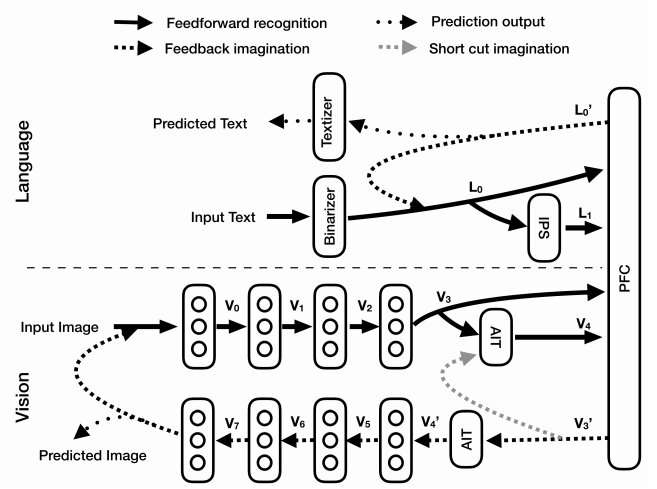

The LGI network developed by Qi and Wu has three key components: a vision system, a language system and an artificial PFC. The vision system is composed of an encoder that disentangles the input received by the network or imagined scenarios into abstract population representations, as well as an imagination decoder that reconstructs imagined scenarios from higher level representations.

The second sub-system, the language system, includes three parts: a binarizer that converts text symbols into binary vectors; a module that mimics the function of the human intraparietal sulcus (IPS) by extracting quantity information from input texts; and a textizer that converts binary vectors back into text symbols. The final component of the LGI network mimics the human PFC, combining language and vision representations to predict text symbols and manipulated images.
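
To make the binarizer and textizer roles more concrete, here is a minimal Python sketch of a text-to-binary-vector round trip. It is an illustration only: the 8-bit ASCII encoding, the function names `binarize` and `textize`, and the `BITS_PER_CHAR` constant are assumptions made for this example, not the encoding or code used by Qi and Wu.

```python
from typing import List

# Assumption for this sketch: each character is one ASCII byte (8 bits).
BITS_PER_CHAR = 8


def binarize(text: str) -> List[List[int]]:
    """Map each character to a fixed-width binary vector, most significant bit first."""
    vectors = []
    for byte in text.encode("ascii"):
        bits = [(byte >> shift) & 1 for shift in range(BITS_PER_CHAR - 1, -1, -1)]
        vectors.append(bits)
    return vectors


def textize(vectors: List[List[int]]) -> str:
    """Invert binarize: turn each binary vector back into its character."""
    chars = []
    for bits in vectors:
        value = 0
        for bit in bits:
            value = (value << 1) | bit  # rebuild the byte one bit at a time
        chars.append(chr(value))
    return "".join(chars)


if __name__ == "__main__":
    command = "move left 12"  # hypothetical input text
    encoded = binarize(command)
    print(len(encoded), "vectors of", BITS_PER_CHAR, "bits")
    print(textize(encoded))
```

In a network like the one described, the binary vectors would be passed to downstream layers rather than decoded straight away; the round trip here only shows that the two conversions are inverses of each other.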

Qi and Wu evaluated their LGI network in a series of experiments and found that it successfully acquired eight different syntaxes or tasks in a cumulative way. Their technique also formed the first "machine thinking loop," showing an interaction between imagined pictures and language texts. In the future, the LGI network developed by the researchers could aid the development of more advanced AI that is capable of human-like thinking strategies, such as visualization and imagination.

"LGI has incrementally learned eight different syntaxes (or tasks), with which a machine thinking loop has been formed and validated by the proper interaction between language and vision system," the researchers wrote. "Our paper provides a new architecture to let the machine learn, understand and use language in a human-like way that could ultimately enable a machine to construct fictitious mental scenarios and possess intelligence."

More information: Feng Qi, Wenchuan Wu. Human-like machine thinking: language guided imagination. arXiv:1905.07562 [cs.CL]. arxiv.org/abs/1905.07562

© 2019 Science X Network