May 29, 2019 feature

REPLAB: A low-cost benchmark platform for robotic learning

Researchers at UC Berkeley have developed REPLAB, a reproducible, low-cost and compact benchmark platform for evaluating robotic learning approaches. Their recent study, presented in a paper pre-published on arXiv, was supported by Berkeley DeepDrive, the Office of Naval Research (ONR), Google, NVIDIA and Amazon.

"Machine learning-based approaches have begun to grow popular in robotics recently, but there is currently no easy way to compare approaches due to major differences in the hardware setups used in various labs," Brian Yang, one of the researchers who carried out the study, told TechXplore. "For example, in grasping research, everything from the type of arm or gripper down to the material that the gripper is made of affects grasping performance, so even if you get better grasping accuracy than a method from last year, it isn't clear whether that is because of better control or just better hardware."

In recent years, there has been a growing need for standardized measures and benchmark platforms to evaluate machine learning approaches for robotics. Establishing effective benchmarks can be challenging, particularly in robot learning, where robots are expected to generalize learned models to new objects and situations. The new benchmark platform developed at UC Berkeley provides a low-cost and easily reproducible way to test approaches to robotic object manipulation.

"Other applications of machine learning such as computer vision and natural language processing have benefited greatly from having datasets and benchmarks, as they drive research focus on important problems, provide a way to chart the progress of a research community, and help to quickly identify, disseminate, and improve upon ideas that work well," Dinesh Jayaraman, another researcher involved in the study, told TechXplore. "We designed REPLAB to serve this function for the robot learning research community."

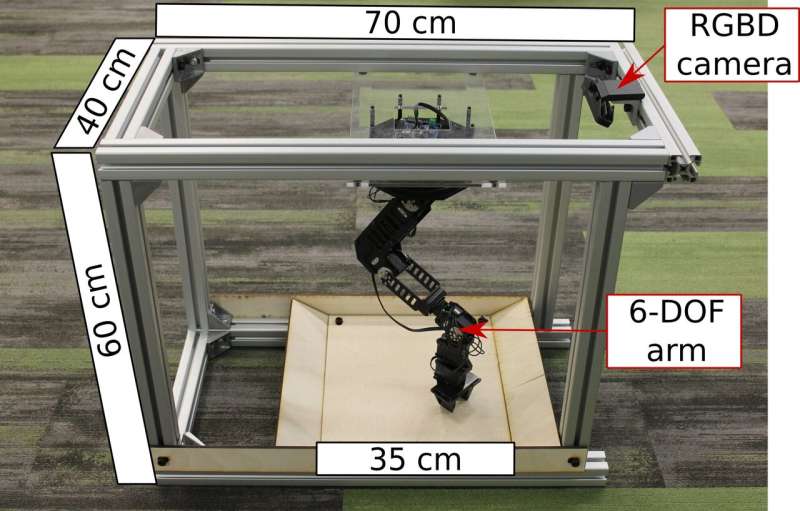

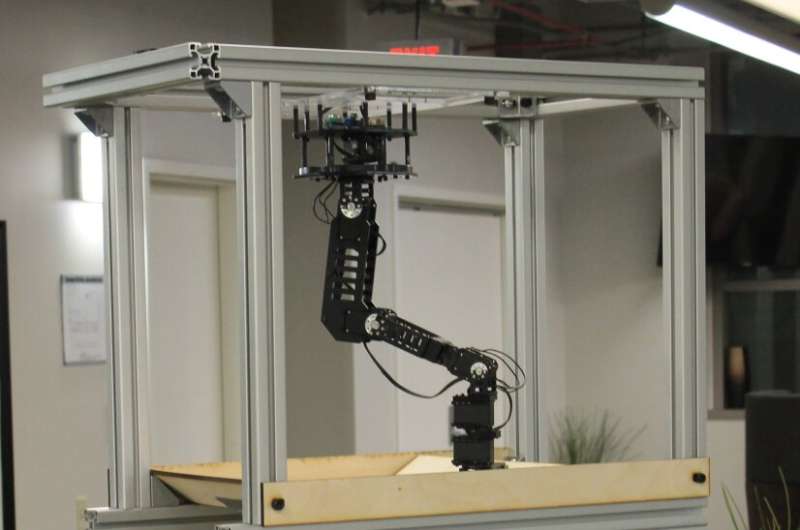

REPLAB has several components, including a robotic arm, a camera and a workspace, placed in a cuboid space of 70 x 40 x 60 cm. The platform costs approximately $2,000 to build and can be assembled within just a few hours. Its compact and low-cost design could allow more researchers, even those with a restricted budget, to evaluate their frameworks and approaches.

"REPLAB is a fully standardized hardware platform for robotic manipulation that is designed with easy adoption in mind," Jayaraman explained. "It contains a single low-cost arm (Trossen WidowX), an RGB-D camera (Intel Realsense SR300) and a standardized, compact workspace that is easy to assemble in a few hours using our assembly instructions. All put together, one whole REPLAB cell costs about 2k USD (compared to standard arm setups that cost 40-50k), occupying about 10x less space than a standard arm setup."

In addition to the platform itself, the researchers proposed a template for a grasping benchmark that includes a task definition and evaluation protocol, performance measures and a dataset of 92,000 grasp attempts. The baselines for this benchmark were established via the implementation, evaluation and analysis of several existing grasping approaches.
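The article does not spell out the benchmark's performance measures, so the following Python sketch is only an assumption about what a simple one might look like: a per-method grasp success rate computed from logged attempts. The `GraspAttempt` record, the method names and the sample data are all hypothetical and are not taken from the REPLAB dataset or code.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class GraspAttempt:
    """Hypothetical log record: which method ran and whether the grasp held."""
    method: str
    success: bool


def success_rates(attempts: Iterable[GraspAttempt]) -> Dict[str, float]:
    """Return the fraction of successful grasps for each method."""
    totals: Dict[str, int] = {}
    successes: Dict[str, int] = {}
    for attempt in attempts:
        totals[attempt.method] = totals.get(attempt.method, 0) + 1
        successes[attempt.method] = successes.get(attempt.method, 0) + int(attempt.success)
    return {method: successes[method] / totals[method] for method in totals}


# Made-up outcomes for illustration only; not real benchmark results.
sample_log = [
    GraspAttempt("method_a", True),
    GraspAttempt("method_a", True),
    GraspAttempt("method_a", False),
    GraspAttempt("method_a", True),
    GraspAttempt("method_b", False),
    GraspAttempt("method_b", True),
    GraspAttempt("method_b", False),
    GraspAttempt("method_b", False),
]

rates = success_rates(sample_log)
for method, rate in sorted(rates.items()):
    print(f"{method}: {rate:.0%} success")
```

Because every REPLAB cell shares the same hardware, a figure like this could in principle be compared directly between labs, which is the point of a standardized evaluation protocol.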

"Because we have this standardized hardware platform, we are also able to share an open-source software package with implementations of various robot learning algorithms (so far, supervised learning algorithms for grasping and reinforcement learning algorithms for 3-D point reaching)," Jayamaran said. "If you construct a REPLAB cell of your own, you can download a Docker image containing these implementations and run them out-of-the-box on your cell."

So far, the researchers have primarily carried out evaluations aimed at verifying the feasibility of REPLAB as a platform for reproducible research in robot learning, focusing on two tasks: grasping and 3-D point reaching. Specifically, they have used the platform to implement and evaluate several deep supervised learning approaches for grasping, along with reinforcement learning algorithms for point reaching. Their findings suggest that the platform exposes existing algorithms to understudied challenges, such as noisy actuation, that are crucial for developing robots that perform well in the wild.

"We have also verified that results remain consistent across multiple REPLAB cells, which is important for thinking of REPLAB-based algorithm implementations and evaluations as reproducible," Jayamaran said. "We believe that REPLAB will facilitate consistent and reproducible progress metrics for robot learning, lower the barrier to entry into robotics for researchers in related disciplines like machine learning, and encourage shareable code and data across researchers."

The new platform introduced by Yang, Jayaraman and their colleagues could soon allow more researchers to evaluate approaches for a wide range of manipulation tasks. Like other benchmark platforms, however, REPLAB will only succeed if the robot learning research community at large adopts it and contributes to it.

"While we are invested in maintaining the platform for many years to come, we are inviting contributions from the community, such as new algorithm implementations, datasets, and benchmarks and to our open-source platform," Jayaraman said. "The grand vision is to reach a point where if a new state-of-the-art robot learning algorithm is released, a researcher sitting anywhere in the world would be able to download, evaluate, iterate and improve upon an implementation within a few days. We think REPLAB helps accelerate research by doing two things: lowering the barrier of entry and allowing many more people to participate in state-of-the-art research, and allowing this kind of fast iteration and improvement through code-sharing."

In their future work, the researchers at UC Berkeley plan to develop their platform further, adding a complete REPLAB cell simulator and algorithms for robust control, while also tackling new manipulation challenges. They also hope to expand the official REPLAB GitHub repository and Docker image to include implementations of more state-of-the-art algorithms.

More information: Brian Yang et al. REPLAB: A reproducible low-cost arm benchmark platform for robotic learning. arXiv:1905.07447 [cs.RO]. arxiv.org/abs/1905.07447

© 2019 Science X Network