July 17, 2019 feature

A method to reduce the number of neurons in recurrent neural networks

A team of researchers at Queen's University in Canada has recently proposed a new method to downsize random recurrent neural networks (rRNNs), a class of artificial neural networks often used to make predictions from data. Their approach, presented in a paper pre-published on arXiv, allows developers to minimize the number of neurons in an rRNN's hidden layer while also enhancing its prediction performance.
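For readers unfamiliar with rRNNs, the sketch below shows the generic idea behind this class of networks, in the style commonly called an echo state network: the recurrent hidden-layer weights are drawn at random and left fixed, and only a linear readout is trained. This is an illustrative assumption, not the authors' Takens-inspired processor; the function names, the 50-neuron size, the spectral radius of 0.9 and the sine-wave task are all hypothetical choices made for the example.

```python
import numpy as np

def make_reservoir(n_neurons, spectral_radius=0.9, seed=0):
    """Draw fixed random recurrent and input weights (hypothetical helper).

    The recurrent matrix is rescaled so its largest eigenvalue magnitude
    equals spectral_radius, a common heuristic for stable dynamics."""
    rng = np.random.default_rng(seed)
    W = rng.uniform(-0.5, 0.5, size=(n_neurons, n_neurons))
    W *= spectral_radius / np.max(np.abs(np.linalg.eigvals(W)))
    W_in = rng.uniform(-0.5, 0.5, size=n_neurons)
    return W, W_in

def run_reservoir(W, W_in, u):
    """Drive the untrained random network with a scalar input sequence u
    and record the hidden-layer state at every time step."""
    x = np.zeros(W.shape[0])
    states = np.empty((len(u), W.shape[0]))
    for t, u_t in enumerate(u):
        x = np.tanh(W @ x + W_in * u_t)
        states[t] = x
    return states

def train_readout(states, targets, ridge=1e-6):
    """Fit only the linear output weights, using ridge regression."""
    A = states.T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(A, states.T @ targets)

# Toy task (hypothetical): one-step-ahead prediction of a sine wave.
u = np.sin(0.1 * np.arange(1000))
W, W_in = make_reservoir(50)
states = run_reservoir(W, W_in, u[:-1])
washout = 100  # discard the initial transient before fitting
w_out = train_readout(states[washout:], u[1:][washout:])
pred = states[washout:] @ w_out
mse = np.mean((pred - u[1:][washout:]) ** 2)
```

Because only `w_out` is learned, the cost of training grows with the number of hidden neurons, which is one reason reducing that number matters.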

"Our lab focuses on designing hardware for artificial intelligence applications," Bicky Marquez, one of the researchers who carried out the study, told TechXplore. "In this study, we were looking for strategies to understand the operating principles of neural networks, and at the same time, trying to reduce the number of neurons in networks that we were aiming to build without negatively affecting their performance when solving a task. The main task we wanted to tackle was prediction, as this has always been of big interest for the scientific community and society at large."

Developing machine learning tools that can predict future patterns from data has become a key focus for numerous research groups worldwide. This is hardly surprising, as predicting future events could have important applications in a variety of fields, such as forecasting the weather, predicting stock movements, or mapping the evolution of certain human diseases.

The study conducted by Marquez and her colleagues is interdisciplinary, merging theories from nonlinear dynamical systems, time series analysis, and machine learning. The researchers' primary objectives were to extend the toolkit previously available for neural network analysis, minimize the number of neurons in the hidden layer of rRNNs, and partially remove the black-box property of these networks.

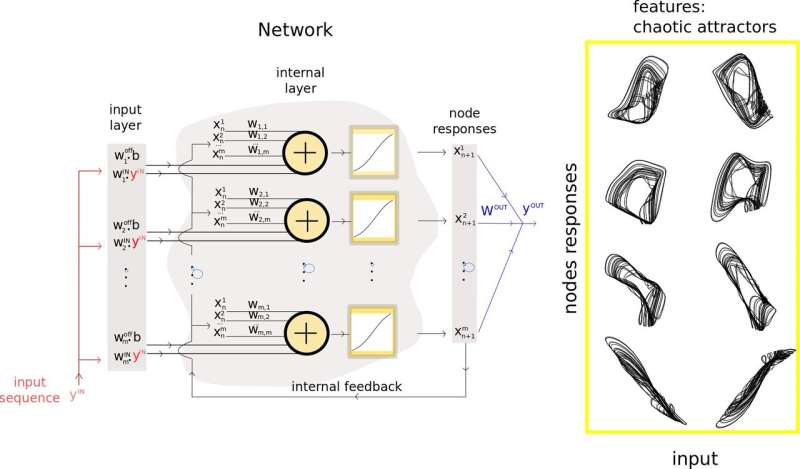

To achieve this, they introduced a new methodology that merges prediction theory and machine learning into a single framework. Their technique can be used to extract relevant features of an rRNN's input data and use them to guide the downsizing of its hidden layer, ultimately improving its prediction performance.
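The name of the resulting processor refers to Takens' embedding theorem from nonlinear dynamics, which states that, under generic conditions, delayed copies of a single measured signal can reconstruct the state space of the system that produced it. The sketch below shows the generic delay-coordinate embedding behind that theorem; it is not the authors' feature-extraction code, and the function name `delay_embed` and the parameters `dim` (embedding dimension) and `tau` (delay in samples) are hypothetical choices for illustration.

```python
import numpy as np

def delay_embed(series, dim, tau):
    """Build delay vectors [s(t), s(t - tau), ..., s(t - (dim - 1) * tau)].

    Returns an array of shape (len(series) - (dim - 1) * tau, dim), one
    row per time step t for which every delayed value exists."""
    series = np.asarray(series)
    span = (dim - 1) * tau
    if len(series) <= span:
        raise ValueError("series is too short for this dim and tau")
    n = len(series) - span
    # Column k holds the series shifted back by k * tau samples.
    return np.column_stack(
        [series[span - k * tau : span - k * tau + n] for k in range(dim)]
    )

# Example: embed a sampled sine wave in three delay coordinates.
X = delay_embed(np.sin(0.1 * np.arange(500)), dim=3, tau=5)
```

Each row of `X` is a point in the reconstructed state space; choosing `dim` and `tau` well is itself a modeling decision, and the values here are arbitrary.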

The researchers used the insights gathered in their study to develop a new artificial neural network model called a Takens-inspired processor. This model, composed of both real and virtual neurons, achieved state-of-the-art performance on challenging problems such as high-quality, long-term prediction of chaotic signals.

"The main advantage of our model is that it tackles the issues created by the massive amount of neurons that make up typical artificial neural networks," Marquez explained. "The excess of neurons in these models commonly translates into computationally expensive problems when considering the optimization of such networks to solve a task. The inclusion of the concept of virtual neurons in our design is a highly convenient step for the reduction of the amount of physical neurons."
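The article does not spell out how the virtual neurons are constructed. Purely as an illustration, and assuming (our assumption, not a detail confirmed here) that virtual neurons are time-delayed copies of the real neurons' states in the spirit of Takens embedding, the hypothetical helper `add_virtual_neurons` below widens the feature set seen by the readout without adding any physical neurons.

```python
import numpy as np

def add_virtual_neurons(states, n_delays, tau):
    """Hypothetical helper: append delayed copies of the real neuron states.

    states has shape (T, N) for N real neurons. The result has shape
    (T - n_delays * tau, N * (n_delays + 1)): the current states followed
    by the states tau, 2 * tau, ..., n_delays * tau steps earlier."""
    states = np.asarray(states)
    span = n_delays * tau
    if states.shape[0] <= span:
        raise ValueError("not enough time steps for this n_delays and tau")
    n = states.shape[0] - span
    blocks = [states[span - k * tau : span - k * tau + n] for k in range(n_delays + 1)]
    return np.hstack(blocks)

# Example: 10 "real" neurons plus 4 delayed copies give 50 readout features.
real_states = np.random.default_rng(0).standard_normal((500, 10))
features = add_virtual_neurons(real_states, n_delays=4, tau=3)
```

Under this assumption the readout sees five times as many features as there are physical neurons, which conveys the kind of trade-off Marquez describes; the actual construction in the paper may differ.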

In their study, Marquez and her colleagues also used their hybrid processor to stabilize an arrhythmic FitzHugh-Nagumo model, a classic model of neuronal excitability. Their methodology allowed them to reduce the size of the stabilizing neural network by a factor of 15 compared with standard neural networks.
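The article does not describe the stabilization scheme itself, but the FitzHugh-Nagumo model is standard and can be simulated in a few lines. The sketch below integrates its textbook form, dv/dt = v - v³/3 - w + I and dw/dt = (v + a - b·w)/τ, with forward Euler. The parameter values (a = 0.7, b = 0.8, τ = 12.5, I = 0.5) are commonly used illustrative choices that produce regular spiking, not the settings from the paper, and the function name is hypothetical.

```python
import numpy as np

def fitzhugh_nagumo(T=200.0, dt=0.01, a=0.7, b=0.8, tau=12.5, I_ext=0.5,
                    v0=-1.0, w0=1.0):
    """Forward-Euler integration of the FitzHugh-Nagumo model.

    v is the fast, membrane-potential-like variable; w is the slow
    recovery variable. Returns both trajectories sampled every dt."""
    n = int(round(T / dt))
    v = np.empty(n + 1)
    w = np.empty(n + 1)
    v[0], w[0] = v0, w0
    for i in range(n):
        dv = v[i] - v[i] ** 3 / 3 - w[i] + I_ext
        dw = (v[i] + a - b * w[i]) / tau
        v[i + 1] = v[i] + dt * dv
        w[i + 1] = w[i] + dt * dw
    return v, w

v, w = fitzhugh_nagumo()
```

Forward Euler with a small step keeps the example short; for serious work an adaptive solver such as SciPy's `solve_ivp` would be the safer choice.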

"Our approach allowed us to uncover some relevant features that are being created inside the networks' space, and which are the fundamental agents of successful predictions," Marquez said. "If we can identify and remove the noise around those important features, we could use them to enhance the performance of our networks."

The methodology devised by Marquez and her colleagues is an important addition to the tools previously available for rRNN development and analysis. In the future, their approach could inform the design of more effective neural networks for prediction, reducing the number of nodes and connections they contain. Their technique could also make rRNNs more transparent, giving users insight into how a system reached a given conclusion.

"We are focused on neuromorphic hardware," Marquez said. "Therefore, our next steps will be related to the physical implementation of such random recurrent networks. Our ultimate aim is to design brain-inspired computers that can solve artificial intelligence problems very efficiently: ultra-fast and with low energy consumption."

More information: Bicky A. Marquez, et al. Takens-inspired neuromorphic processor: a downsizing tool for random recurrent neural networks via feature extraction. arXiv:1907.03122 [cs.NE]. arxiv.org/abs/1907.03122

© 2019 Science X Network