July 13, 2022 feature

A framework that could enhance the ability of robots to use physical tools

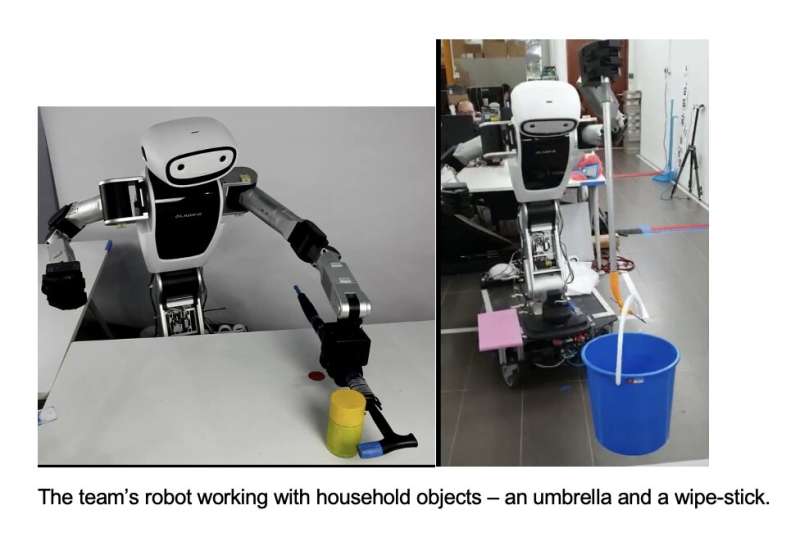

Researchers at I2R A*STAR Singapore and UM-CNRS LIRMM in France have recently developed a framework that could improve the ability of robots to identify objects in their surroundings that could serve as tools and then use them to complete manual tasks, even if they have never encountered these objects before. Their approach, introduced in a paper published in Nature Machine Intelligence, could significantly enhance the ability of robotic systems to complete challenging tasks that might require tools, without the need for any prior tool training.

"Humans are amazing at recognizing random objects in their environment as potential tools, and using them as such," Ganesh Gowrishankar, one of the researchers who carried out the study, told TechXplore. "Similar abilities can be very useful for robots and can enable them to be innovative in 'unstructured' (that is non-modeled and unpredictable) environments. For example, imagine a rescue robot in a disaster scenario being able to independently (without human help) solve tasks and pass obstacles using available debris as tools."

While many past studies in the field of robotics have highlighted the vast potential of systems that can use tools to complete physical missions, all of the methods proposed so far required prior training with tools. This training was carried out in simulation, with videos of humans or other robots using tools, or directly in the physical world with an actual tool.

The goal of the work by Keng Peng Tee, Gowrishankar and their colleagues, on the other hand, was to create a framework that would allow robots to identify potentially useful tools on the fly based on their shape and size, even if they have never encountered these objects before and were never trained to use them or any other tool. The researchers have been working on such a framework for several years now.

"In our new paper, we combined our past work in human tool use, 'embodiment' and human tool characterization to develop a cognition framework that enables robots with zero tool experience to recognize objects (even ones seen for the first time) as tools for a given task, and use them immediately, just like a human," Gowrishankar said.

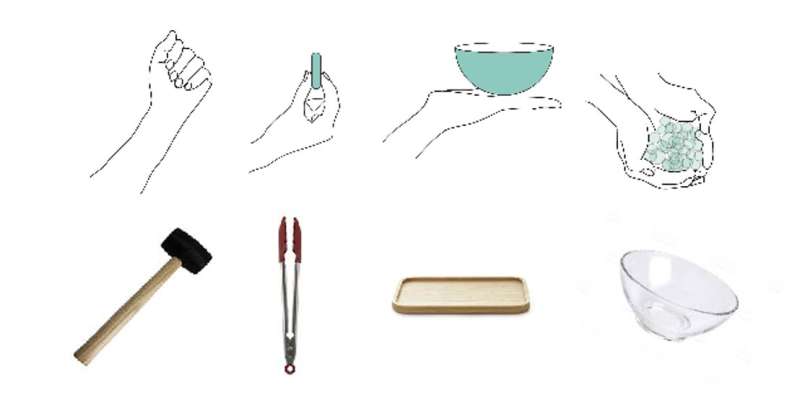

While conducting their previous studies and reviewing existing literature, Tee and Gowrishankar discovered that humans may use the shape of their own hands or arms, and the actions made with their hands or arms, as a reference to determine whether a tool can be useful for completing a particular task. The new framework they developed is based on this idea, as it encourages robots to use their limbs to determine whether objects in their surroundings could be used as tools.

"Our framework uses our discoveries to enable intuitive tool use by robots," Tee explained. "Specifically, it enables a robot to isolate the 'functionality' features of its own limb that enable a task, to use these features to recognize an object as a potential tool for the same task, and then to develop successful movements with the tool using skills (controllers) the robot already possesses."

Essentially, the researchers' framework allows robots to use tools to complete any task they are already capable of completing without tools (i.e., any task for which they possess a so-called "controller"). It only requires that the robot has integrated cameras or sensors that allow it to "visually" perceive objects in its surroundings.
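To make the idea more concrete, the three steps Tee describes (isolate a functionality feature of the limb, look for the same feature in nearby objects, then reuse an existing controller) can be loosely illustrated with a toy example. The sketch below is not the researchers' implementation: the `Shape` descriptor, the `elongation` feature, the matching rule and the `reach_controller` function are all hypothetical stand-ins chosen for a simple reaching task, and the code ignores practical details such as the part of the tool taken up by the grasp.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Shape:
    """Crude visual descriptor (hypothetical): length and width in metres."""
    name: str
    length: float
    width: float


def elongation(shape: Shape) -> float:
    """Assumed 'functionality' feature for reaching: how long and thin a shape is."""
    return shape.length / shape.width


def pick_tool(limb: Shape, objects: List[Shape], needed_reach: float) -> Optional[Shape]:
    """Pick the shortest object that shares the limb's feature and closes the reach gap."""
    gap = needed_reach - limb.length
    if gap <= 0:
        return None  # the limb alone already reaches the target
    # Toy matching rule (an assumption): the object must be at least as
    # elongated as the limb itself and long enough to cover the gap.
    candidates = [
        obj for obj in objects
        if elongation(obj) >= elongation(limb) and obj.length >= gap
    ]
    return min(candidates, key=lambda obj: obj.length, default=None)


def reach_controller(effector_length: float, target_distance: float) -> bool:
    """Stand-in for a skill the robot already has: succeeds if the target is in range."""
    return effector_length >= target_distance


arm = Shape("arm", length=0.6, width=0.08)
scene = [
    Shape("plate", length=0.25, width=0.25),
    Shape("stick", length=0.5, width=0.03),
    Shape("plank", length=1.2, width=0.1),
]

tool = pick_tool(arm, scene, needed_reach=1.0)
# Kinematic augmentation: the same controller runs with a longer effective limb.
effective_length = arm.length + (tool.length if tool else 0.0)
print(tool.name if tool else None, reach_controller(effective_length, 1.0))
```

In this toy scene the plate is rejected because it does not resemble the arm in the chosen feature, while the stick is preferred over the plank because it is the shortest object that still covers the missing reach. The controller itself is unchanged; only the effective limb length passed to it differs.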

The new framework developed by Tee, Gowrishankar and their colleagues could be used to enhance the capabilities of both existing and newly developed robots, allowing them to take better advantage of objects in their surroundings when completing a specific mission. As accurate visual perception in robots is still a technical challenge, however, the potential of the framework is currently limited.

"In its current form, our framework is based solely on visual perception," Tee said. "Therefore, it works for tools that enable 'kinematic augmentations'—extensions in the shape and size (which can be perceived visually) of our limbs. These include a large (probably majority) set of tools that we use in daily life—spoons, rakes, tongs, plates and even chairs (to climb up) etc."

While the researchers' framework covers many of the simple tools we use in our day-to-day lives, in its current form it would not allow robots to identify and use tools that enable so-called "dynamic/force augmentations," such as hammers or levers. The main reason for this is that recognizing these tools requires more than just looking at their shape and size; it also requires knowledge of their weight and dynamic properties.

To recognize these tools as useful when presented with them, a robot would thus need to have a further layer of perception, which allows it to connect the visual/physical features of an object with its dynamic features. To achieve this, Gowrishankar and his colleagues are now planning to develop their framework further.

"In our next studies, we would like to extend our framework to enable dynamic tool use," Gowrishankar added. "We would also like to integrate our framework with learning techniques proposed by other tool use studies. This is necessary to enable a robot to use tools for tasks it doesn't possess a controller for (something our framework does not address), thus it could bring robots closer to the tool use capabilities of humans."

More information: Keng Peng Tee et al, A framework for tool cognition in robots without prior tool learning or observation, Nature Machine Intelligence (2022). DOI: 10.1038/s42256-022-00500-9

Keng Peng Tee et al, Towards Emergence of Tool Use in Robots: Automatic Tool Recognition and Use Without Prior Tool Learning, 2018 IEEE International Conference on Robotics and Automation (ICRA) (2018). DOI: 10.1109/ICRA.2018.8460987

Laura Aymerich-Franch et al, The role of functionality in the body model for self-attribution, Neuroscience Research (2015). DOI: 10.1016/j.neures.2015.11.001

G. Ganesh et al, Immediate tool incorporation processes determine human motor planning with tools, Nature Communications (2014). DOI: 10.1038/ncomms5524

© 2022 Science X Network