Researchers' study of human-robot interactions is an early step in creating future robot 'guides'

A new study by Missouri S&T researchers shows how human subjects, walking hand-in-hand with a robot guide, stiffen or relax their arms at different times during the walk. The researchers' analysis of these movements could aid in the design of smarter, more humanlike robot guides and assistants.

"This work presents the first measurement and analysis of human arm stiffness during overground physical interaction between a robot leader and a human follower," the Missouri S&T researchers write in a paper recently published in the journal Scientific Reports.

The lead researcher, Dr. Yun Seong Song, assistant professor of mechanical and aerospace engineering at Missouri S&T, describes the findings as "an early step in developing a robot that is humanlike when it physically interacts with a human partner."

"This is related to the idea of having assistive robots that can seamlessly interact with us," Song says.

Humans frequently interact with one another without verbal communication or even visual cues when performing certain tasks, such as when one person helps another walk or when a couple dances a waltz. In their Scientific Reports paper, the researchers describe the forces that come into play when a robotic guide walks with humans along three different trajectories.

"At first glance, physical interaction is a dynamic task with power exchanges dictated by the passive properties of the interacting being," Song says. "But if you examine how humans handle physical interaction, you realize that there has to be constant processing of information and decision making to infer each other's intent. Uncovering the mechanism through which this happens will help us design future robots that can seamlessly interact with their human partners."

"Even without explicitly shared goals, two human partners can physically interact with each other to perform collaborative tasks," write Song and his fellow Missouri S&T researchers, Dr. Devin Burns, associate professor of psychological science, and Dr. Sambad Regmi, who earned a Ph.D. from S&T in May 2022. Burns helped design the experiment and "rigorously interpreted the data" while co-mentoring Regmi, Song says.

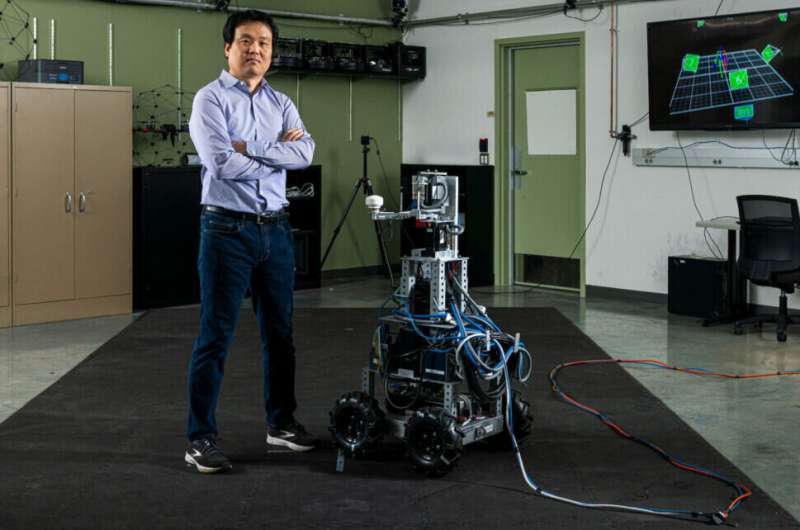

Using their recently developed interactive robot, Ophrie, the researchers simulated a guided walking task that required the human subjects to close their eyes while holding the handle on the robot's arm. Ophrie—an acronym for Overground Physical Human Robot Interaction—was programmed to move on a straight trajectory for the first 1.5 meters of the walk (roughly 5 feet), then deviate either to the left or right or continue the straight trajectory. The subjects were expected to react to haptic cues delivered through the handle to sense the robot's movement.

The S&T researchers measured arm stiffness at either of two points during the walk: at the point where the robot could take one of three divergent trajectories, when the human subjects were less certain of the path, or at the end of the walk, when subjects were more certain of the robot's path.

"We observed that the arm stiffness was lower at instants when the robot's upcoming trajectory was unknown compared to instants when it was predictable," the researchers write. This presents "the first evidence of arm stiffness modulation for better motor communication during overground physical interaction."

Ten human subjects in their mid-20s took part in the experiments. Nine of the 10 were male, one was left-handed and none reported any neurological disorders.

Understanding this interaction between humans and robots can lead to improvements in robot design, Song says. An interactive robot assistant could be used in elder care, physical therapy and other fields.

"Like a human partner, these robots would help a construction worker carry loads or an elderly person with mobility issues walk from the bedroom to the kitchen," Song says. "We want these robots to be effective in their performance, intuitive to use and communicate with, and safe to interact with, all while maintaining physical contact with their human partners."

More information: Sambad Regmi et al., Humans modulate arm stiffness to facilitate motor communication during overground physical human-robot interaction, Scientific Reports (2022). DOI: 10.1038/s41598-022-23496-z