January 25, 2023 feature

A framework that allows four-legged robots to follow a leader in both daytime and nighttime conditions

Legged robots have significant advantages over wheeled and tracked robots, particularly when it comes to moving across different types of terrain. This makes them well suited for missions that involve transporting goods or traveling from one place to another.

One promising approach that allows legged robots to effectively tackle these missions, particularly those that involve long-distance travel, entails teaching them to follow a "leader," whether a specific vehicle or a human agent. However, this can be difficult to achieve, especially when the robot must keep following reliably under all lighting and atmospheric conditions.

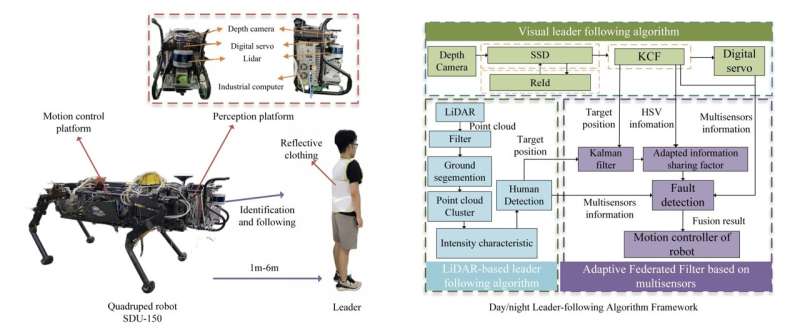

Researchers at Shandong University in China have recently developed a new framework that could provide four-legged robots with leader-following abilities in both nighttime and daytime conditions. This framework, introduced in MDPI's Biomimetics journal, is based on visual and LiDAR detection technology.
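The article does not describe the detectors in detail, but the authors characterize their LiDAR detection algorithm as based on reflective intensity. A minimal Python sketch of that idea, assuming (hypothetically) that the leader wears a retroreflective marker whose LiDAR returns are much brighter than the background, keeps only high-intensity points and takes their centroid. The function name, the normalized intensity scale and the thresholds are illustrative assumptions, not values from the paper.

```python
from typing import Optional

import numpy as np


def detect_reflective_leader(scan: np.ndarray, intensity_threshold: float = 0.8,
                             min_points: int = 5) -> Optional[np.ndarray]:
    """Estimate the leader's (x, y) position from one LiDAR scan (hypothetical sketch).

    scan: (N, 3) array of x, y, intensity, with intensity normalized to [0, 1].
    Assumes the leader carries a retroreflective marker that returns much
    higher intensity than the surroundings.
    """
    scan = np.asarray(scan, dtype=float)
    bright = scan[scan[:, 2] >= intensity_threshold]  # keep high-reflectivity returns
    if len(bright) < min_points:
        return None  # too few bright returns to trust: report no detection
    return bright[:, :2].mean(axis=0)  # centroid of the marker returns
```

Averaging all bright points assumes a single reflective object is in view; other reflective surfaces nearby would need clustering or an extra consistency check, which is the kind of interference the researchers' fault detection is meant to handle.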

"Leader-following can help quadruped robots accomplish long-distance transportation tasks," Jialin Zhang, Jiamin Guo, Hui Chai, Qin Zhang, Yibin Li, Zhiying Wang and Qifan Zhang wrote in their paper. "However, long-term following has to face the change of day and night as well as the presence of interference. To solve this problem, we present a day/night leader–following method for quadruped robots toward robustness and fault-tolerant person following in complex environments."

To be effective, leader-following frameworks should allow robots to accurately detect and identify specific people under different lighting conditions, so that they can then follow them to a desired location. The method proposed by Zhang, Guo and their colleagues achieves this using three modules: a person detection module, a communication module and a motion control module.
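The article names the three modules but not their interfaces. As a rough, hypothetical illustration of how they might fit together, the Python sketch below uses a queue as a stand-in for the communication module and a simple proportional controller for motion control that turns toward the leader and holds a fixed following distance. The message type, gains and 1.5 m distance are assumptions, not values from the paper.

```python
import math
from dataclasses import dataclass
from queue import Empty, Queue
from typing import Optional, Tuple


@dataclass
class LeaderMsg:
    """Hypothetical detection result sent by the person detection module."""
    x: float  # leader distance ahead of the robot, metres
    y: float  # leader offset to the robot's left, metres


def latest_message(channel: Queue) -> Optional[LeaderMsg]:
    """Communication stand-in: drain the queue and keep only the newest detection."""
    msg = None
    while True:
        try:
            msg = channel.get_nowait()
        except Empty:
            return msg


def motion_control(msg: Optional[LeaderMsg], follow_dist: float = 1.5,
                   k_v: float = 0.8, k_w: float = 1.5,
                   v_max: float = 1.0) -> Tuple[float, float]:
    """Proportional follower returning (forward speed in m/s, yaw rate in rad/s)."""
    if msg is None:
        return 0.0, 0.0  # no leader this cycle: stand still
    dist = math.hypot(msg.x, msg.y)
    bearing = math.atan2(msg.y, msg.x)
    # Close the gap to follow_dist, but never walk backwards or exceed v_max.
    v = max(0.0, min(v_max, k_v * (dist - follow_dist)))
    w = k_w * bearing  # turn toward the leader
    return v, w


if __name__ == "__main__":
    channel: Queue = Queue()
    channel.put(LeaderMsg(2.0, 0.5))
    channel.put(LeaderMsg(2.5, 0.0))
    print(motion_control(latest_message(channel)))  # prints (0.8, 0.0)
```

A queue is used only to keep the example self-contained; the article does not say how the modules actually exchange data.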

"We construct an Adaptive Federated Filter algorithm framework, which fuses the visual leader-following method and the LiDAR detection algorithm based on reflective intensity," Zhang and his colleagues wrote in their paper. "Moreover, the framework uses the Kalman filter and adaptively adjusts the information sharing factor according to the light condition. In particular, the framework uses fault detection and multi-sensors information to stably achieve day/night leader-following."

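In a federated Kalman filter, each sensor feeds its own local Kalman filter and a master filter fuses the local estimates, with information-sharing factors (β, summing to 1) deciding how the global estimate's information is divided among the local filters at each cycle. The Python sketch below shows that structure for a vision filter and a LiDAR filter that both measure the leader's position under a constant-velocity model. It is not the authors' implementation: the mapping from a normalized light level to β, the noise values, the 0.1 s period and the function names are hypothetical.

```python
import numpy as np

DT = 0.1  # hypothetical filter period, seconds
# Constant-velocity model with state [px, py, vx, vy] (leader position and velocity).
F = np.array([[1.0, 0.0, DT, 0.0],
              [0.0, 1.0, 0.0, DT],
              [0.0, 0.0, 1.0, 0.0],
              [0.0, 0.0, 0.0, 1.0]])
H = np.hstack([np.eye(2), np.zeros((2, 2))])  # both sensors measure position only
Q = 0.05 * np.eye(4)                          # hypothetical process noise


def sharing_factors(light: float) -> tuple:
    """Hypothetical rule: vision gets a larger information share in bright light.

    light is a normalized illumination level in [0, 1]. Clipping keeps both
    factors away from zero so neither local covariance (P / beta) blows up.
    Returns (beta_vision, beta_lidar), which sum to 1.
    """
    beta_v = float(np.clip(light, 0.1, 0.9))
    return beta_v, 1.0 - beta_v


def federated_step(x_g, P_g, measurements, light):
    """One predict/update/fuse cycle of a two-sensor federated Kalman filter.

    measurements maps "vision" and/or "lidar" to (z, R); a missing key means
    that sensor reported no detection this cycle.
    """
    betas = dict(zip(("vision", "lidar"), sharing_factors(light)))
    info_sum = np.zeros((4, 4))
    info_vec = np.zeros(4)
    for name, beta in betas.items():
        # Information sharing + prediction: the local filter starts from the
        # global estimate with covariance and process noise scaled by 1 / beta.
        x_i = F @ x_g
        P_i = F @ (P_g / beta) @ F.T + Q / beta
        if name in measurements:
            z, R = measurements[name]
            S = H @ P_i @ H.T + R                 # innovation covariance
            K = P_i @ H.T @ np.linalg.inv(S)      # Kalman gain
            x_i = x_i + K @ (z - H @ x_i)
            P_i = (np.eye(4) - K @ H) @ P_i
        Y_i = np.linalg.inv(P_i)                  # local information matrix
        info_sum += Y_i
        info_vec += Y_i @ x_i
    # Master filter: information-weighted fusion; the result becomes the next
    # cycle's global estimate (full reset of the local filters).
    P_new = np.linalg.inv(info_sum)
    return P_new @ info_vec, P_new
```

In this linear form with full reset, the fused estimate comes out the same whatever β values are chosen, because the local shares of prior information add back up to the whole in the fusion step. The factors start to matter when a local filter is left out of the fusion (for example after it is flagged as faulty) or when the local models are nonlinear, which is where adapting them to conditions such as lighting can change the result.
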
A distinctive feature of the leader-following framework introduced by the researchers is its fault detection and isolation algorithm, which is designed to improve its performance in both daytime and nighttime conditions. This algorithm relies on data collected by several different sensors and on computations run by a detection algorithm, which together allow it to cope with high-frequency vibrations, varying levels of illumination and possible visual interference caused by reflective materials in the surrounding environment.
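The article does not spell out the fault-detection test. A common choice in Kalman-filter-based sensor fusion, shown here as a stand-in rather than as the authors' algorithm, is to gate each sensor's innovation with a chi-square test: a measurement whose normalized innovation squared exceeds the 95% threshold for its dimension is treated as faulty (for instance, a false detection caused by reflective material) and excluded from fusion. The function names and the choice of a 95% gate are illustrative.

```python
import numpy as np

# Chi-square 95% threshold for a 2-D measurement (2 degrees of freedom).
CHI2_2DOF_95 = 5.991


def is_faulty(z, z_pred, S, threshold=CHI2_2DOF_95):
    """Flag a measurement whose normalized innovation squared exceeds the gate."""
    nu = np.asarray(z, dtype=float) - np.asarray(z_pred, dtype=float)  # innovation
    nis = float(nu @ np.linalg.solve(S, nu))  # nu^T S^-1 nu
    return nis > threshold


def isolate_faults(candidates):
    """Return the names of sensors whose measurements pass the gate.

    candidates maps a sensor name to (z, z_pred, S), where z_pred and the
    innovation covariance S come from that sensor's local filter.
    """
    return [name for name, (z, z_pred, S) in candidates.items()
            if not is_faulty(z, z_pred, S)]
```

Only the sensors returned by `isolate_faults` would then be passed on to the fusion step for that cycle.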

Zhang, Guo and their colleagues evaluated their framework in a series of trials using SDU-150, a quadruped robot developed at Shandong University. The robot was tested indoors and outdoors, during the day and at night, and under different lighting conditions. These tests yielded promising results, with the robot identifying leaders reliably across these scenarios.

In the future, the framework developed by this team could help improve the leader-following abilities of other existing and newly developed robots. It could also inspire similar approaches designed to help robots detect and track specific targets under different lighting conditions.

"The next step will combine sensor fusion with deep learning to perform data-level multisensor fusion, which greatly improves the detection accuracy and adapts to the high-precision operating situation," the researchers conclude in their paper.

More information: Jialin Zhang et al, A Day/Night Leader-Following Method Based on Adaptive Federated Filter for Quadruped Robots, Biomimetics (2023). DOI: 10.3390/biomimetics8010020

© 2023 Science X Network