Echo state graph neural networks with analogue random resistive memory arrays

Graph neural networks have been widely used for studying social networks, e-commerce, drug predictions, human-computer interaction, and more.

In a new study published as the cover story in Nature Machine Intelligence, researchers from the Institute of Microelectronics of the Chinese Academy of Sciences (IMECAS) and the University of Hong Kong accelerated graph learning with random resistive memory (RRM), achieving a 40.37-fold improvement in energy efficiency over a graphics processing unit on representative graph learning tasks.

Deep learning with graphs on traditional von Neumann computers requires frequent data shuttling between memory and processor, which incurs long processing times and high energy use. In-memory computing with resistive memory may offer a solution.

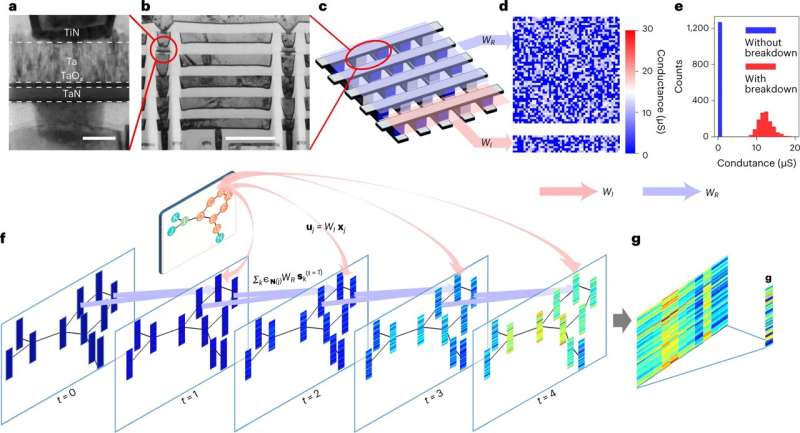

The researchers presented a novel hardware–software co-design, the RRM-based echo state graph neural network, to address those challenges.

The RRM harnesses low-cost, nanoscale, stackable resistors for highly efficient in-memory computing. It also leverages the intrinsic stochasticity of dielectric breakdown to implement random projections in hardware for an echo state network. Because those random weights stay fixed and only a readout layer is trained, the training cost drops sharply.
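To make the echo state idea concrete, here is a minimal software sketch in Python with NumPy. It is not the authors' implementation. The function names, the `tanh` nonlinearity, the sum pooling, the number of iteration steps and the ridge-regression readout are all assumptions chosen for illustration. The random weights come from a pseudo-random generator here, standing in for the weights that the hardware would obtain from the stochastic conductances of the resistive memory array.

```python
import numpy as np


def make_random_weights(d, h, rng, scale=0.5):
    """Draw fixed random projections (hypothetical stand-in for the RRM array)."""
    w_in = rng.uniform(-1.0, 1.0, size=(d, h))
    w_rec = rng.uniform(-1.0, 1.0, size=(h, h))
    # Rescale the recurrent matrix so its spectral radius equals `scale`,
    # a common echo state heuristic for keeping the state updates stable.
    w_rec *= scale / np.max(np.abs(np.linalg.eigvals(w_rec)))
    return w_in, w_rec


def echo_state_graph_embedding(adj, feats, w_in, w_rec, steps=4):
    """Embed one graph using weights that are never trained.

    adj:   (n, n) adjacency matrix
    feats: (n, d) node features
    w_in:  (d, h) fixed random input projection
    w_rec: (h, h) fixed random recurrent projection
    """
    state = np.zeros((adj.shape[0], w_rec.shape[0]))
    for _ in range(steps):
        # Each node combines its own projected input with the projected
        # states of its neighbours.
        state = np.tanh(feats @ w_in + adj @ state @ w_rec)
    # Sum pooling turns the node states into one graph-level vector.
    return state.sum(axis=0)


def fit_readout(embeddings, targets, ridge=1e-2):
    """Closed-form ridge regression: the only trained parameters."""
    x = np.asarray(embeddings, dtype=float)
    y = np.asarray(targets, dtype=float)
    gram = x.T @ x + ridge * np.eye(x.shape[1])
    return np.linalg.solve(gram, x.T @ y)
```

In this sketch, `w_in` and `w_rec` are generated once and reused for every graph, so training reduces to a single linear solve in `fit_readout`. That is the sense in which an echo state approach keeps training cheap compared with backpropagating through every layer.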

The work is significant for developing next-generation AI hardware systems.

More information: Shaocong Wang et al., Echo state graph neural networks with analogue random resistive memory arrays, Nature Machine Intelligence (2023). DOI: 10.1038/s42256-023-00609-5