April 11, 2023 feature

Amazon creates a new user-centric simulation platform to develop embodied AI agents

AI-powered robots are generally trained in simulation environments before they are tested and introduced in real-world settings. These environments allow developers to safely test their machine learning techniques on a variety of robots and in numerous possible scenarios, without having to purchase hardware, assemble robots and then bring them to remote locations, or compromise on real-world safety of the deployed systems.

Amazon Alexa AI recently created a new simulation platform specifically for embodied AI research, the field that focuses on developing autonomous robots. The platform, dubbed Alexa Arena, was presented in a paper pre-published on arXiv and is publicly available on GitHub.

"Our primary objective was to develop an interactive Embodied AI framework to catalyze the creation of next-generation embodied AI agents," Govind Thattai, the lead scientist for the Arena platform, told Tech Xplore.

"Several embodied-AI simulation platforms have been proposed in recent years (e.g., AI2Thor, Habitat, iGibson). These platforms support simulated scenes, where embodied agents can navigate and interact with objects, yet most of them are not designed for humans to interact with agents due to the lack of user-centricity," said Qiaozi Gao, who co-developed the Arena framework.

Because most available simulation platforms are not designed to collect human-robot interaction data, developers often need to conduct real-world experiments, which are typically expensive and time-consuming. Alternatively, some teams choose to develop a so-called "inferencing engine," a computational tool that allows humans to interact directly with a simulated environment, yet this also requires time and additional research effort.

Embodied agents need to consistently interact with their environments, while also learning from and adapting to other agents or humans in a safe and effective manner. While current simulation platforms focus on task decomposition and navigation, Arena attempts to fill in the missing pieces that would inevitably come into play during deployment and real-time evaluation of collaborative robots.

Arena is augmented with user-centric features that not only bolster the development and evaluation of embodied AI (EAI) agents, but also bridge the gap between the development and deployment phases. This is done by making humans an indispensable part of the EAI development and evaluation process.

"To address these challenges, we created Alexa Arena," said Suhaila Shakiah, a developer of Arena ML components. "Our platform offers a framework with user-centric capabilities, such as smooth visuals during robot navigation, continuous background animations and sounds, viewpoints in rooms to simplify room-2-room navigation, and visual hints embedded in the scene that aid human-users to generate suitable instructions for task-completion. These features enhance usability and user experience, enabling human-in-the-loop embodied AI development and evaluation."

On the Alexa Arena platform, developers can build and test different embodied AI agents with multimodal capabilities. These agents can interact with the relevant objects or areas in the simulated environment based on users' specific requests, a capability known as visual grounding. They can also learn to follow users' natural-language instructions, which is a vital aspect of human-robot interaction.

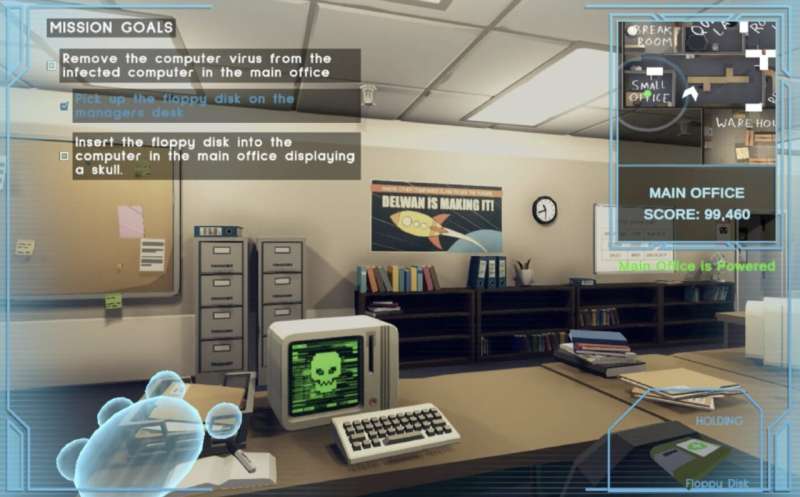

"Alexa Arena pushes the boundaries of human-robot interaction," explained Xiaofeng Gao, an Arena developer. "It offers an interactive, user-centric framework, enabling creating robotic tasks and missions that involve navigating multi-room simulated environments and real time object manipulation. In a game-like setting, users can interact with virtual robots through natural-language dialogue, providing invaluable feedback and helping the robots learn and complete their tasks."

In contrast with other existing simulation platforms, Alexa Arena has a greatly simplified interface for developers and end-users alike. Users can create specific tasks and missions for the robots in the simulation environment using built-in hints and features designed to advance human-computer interaction and embodied AI. This makes it easier and more efficient to collect human-robot interaction data, while also training robots to tackle interactive tasks using a variety of objects and tools.

The user-centric platform could soon be used by developers and researchers worldwide to develop high-performing embodied AI agents and smart robots. Meanwhile, the team plans to further enhance Alexa Arena by adding new features and simulated scenarios.

"We will now continue to improve the Arena platform to support higher and better runtime performances, more scenes, a richer collection of objects and a wider range of interactions," Thattai added. "We will also continue investing in the general Embodied AI field, by developing next-generation intelligent robots that can complete real-world tasks and engage in natural communication with humans."

More information: Qiaozi Gao et al, Alexa Arena: A User-Centric Interactive Platform for Embodied AI, arXiv (2023). DOI: 10.48550/arxiv.2303.01586

© 2023 Science X Network